The issues discussed in "AI plagiarism" (1/4/2024) are rapidly coming to a boil. But somehow I missed Margaret Atwood's take on the topic, published last summer — "Murdered by my replica", The Atlantic 8/26/2023:

Remember The Stepford Wives? Maybe not. In that 1975 horror film, the human wives of Stepford, Connecticut, are having their identities copied and transferred to robotic replicas of themselves, minus any contrariness that their husbands find irritating. The robot wives then murder the real wives and replace them. Better sex and better housekeeping for the husbands, death for the uniqueness, creativity, and indeed the humanity of the wives.

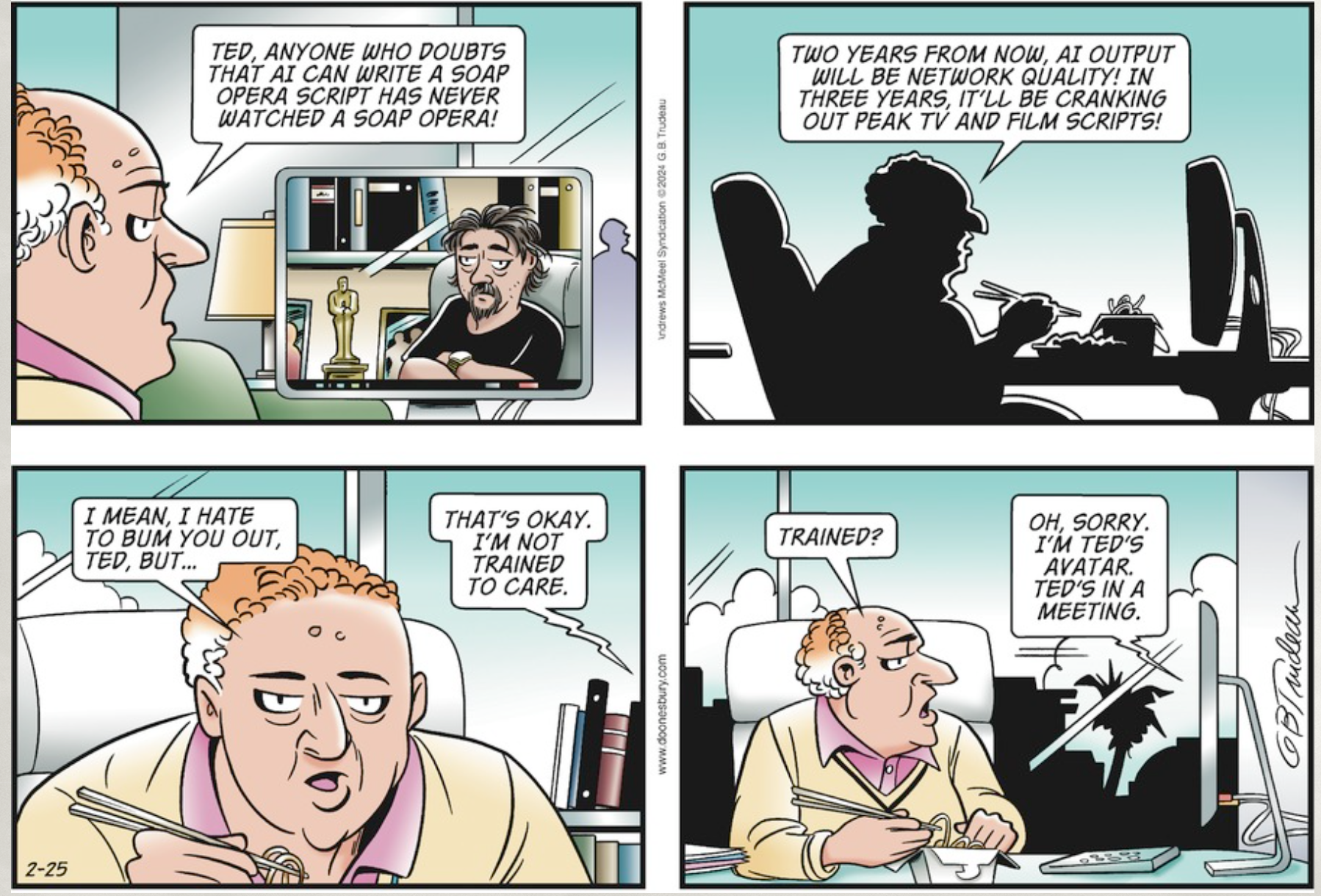

The companies developing generative AI seem to have something like that in mind for me, at least in my capacity as an author. (The sex and the housekeeping can be done by other functionaries, I assume.) Apparently, 33 of my books have been used as training material for their wordsmithing computer programs. Once fully trained, the bot may be given a command—“Write a Margaret Atwood novel”—and the thing will glurp forth 50,000 words, like soft ice cream spiraling out of its dispenser, that will be indistinguishable from something I might grind out. (But minus the typos.) I myself can then be dispensed with—murdered by my replica, as it were—because, to quote a vulgar saying of my youth, who needs the cow when the milk’s free?

To add insult to injury, the bot is being trained on pirated copies of my books. Now, really! How cheap is that? Would it kill these companies to shell out the measly price of 33 books? They intend to make a lot of money off the entities they have reared and fattened on my words, so they could at least buy me a coffee.
