Inter-syllable intervals


This is a simple-minded follow-up to "New models of speech timing?" (9/11/2023). Before getting into fancy stochastic-point-process models, neural or otherwise, I thought I'd start with something really basic: just the distribution of inter-syllable intervals, and its relationship to overall speech-segment and silence-segment durations.

For data, I took one-minute samples from 2006 TED talks by Al Gore and Tony Robbins.

I chose those two because they're listed here as exhibiting the slowest and fastest speaking rates in their (TED talks) sample. And I limited the samples to about one minute, because I'm interested in metrics that can apply to fairly short speech recordings, of the kind that are available in clinical applications such as this one.

Listen to the samples and you'll certainly hear differences in phrasing, pausing, and timing (and, to a lesser extent, in within-phrase speaking rate), which do result in a modest overall words-per-minute difference: Tony Robbins produces 254 words in 64.04 seconds, or 238 words per minute, while Al Gore produces 206 words in 64.68 seconds, or 191 wpm.
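For anyone who wants to check the arithmetic, here's a minimal sketch of the overall-rate calculation. The function name `words_per_minute` is just an illustrative choice; the word counts and durations are the ones quoted above.

```python
def words_per_minute(word_count: int, duration_seconds: float) -> float:
    """Overall speaking rate over the whole sample, silences included."""
    return word_count / duration_seconds * 60.0


# Word counts and sample durations as quoted in the text.
robbins_wpm = words_per_minute(254, 64.04)
gore_wpm = words_per_minute(206, 64.68)

print(round(robbins_wpm), round(gore_wpm))  # prints: 238 191
```

Note that this is a rate over the full sample, pauses and all, so it can't by itself separate how fast someone articulates from how often and how long they pause.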

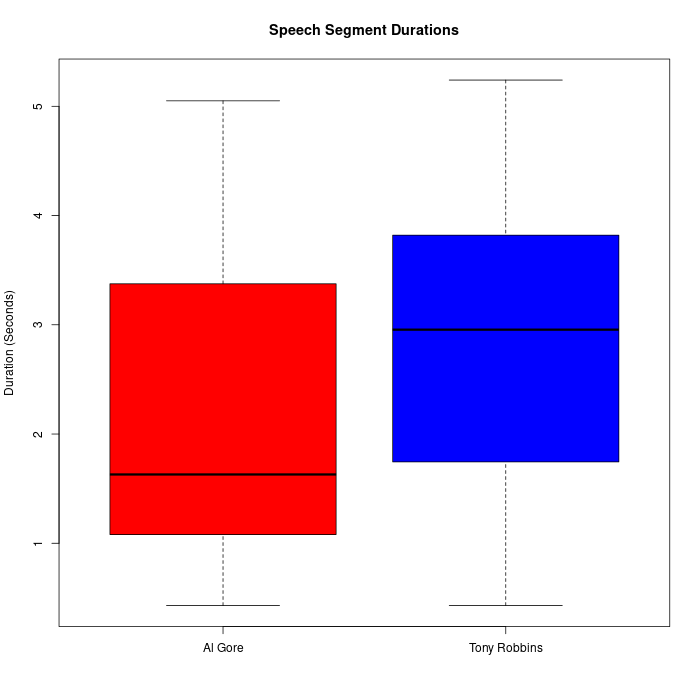

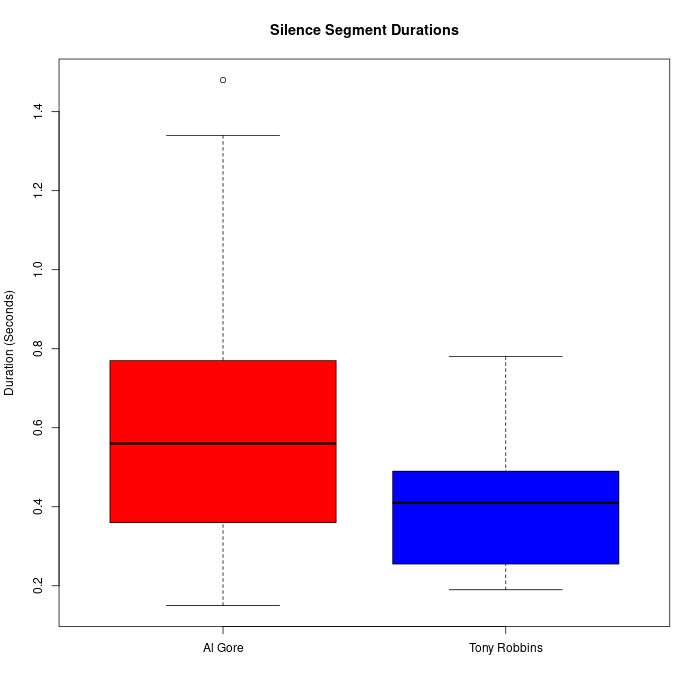

As in other examples I've analyzed in the past, the lengths of speech segments and silence segments in these samples are interestingly decoupled from the overall words-per-minute rates:

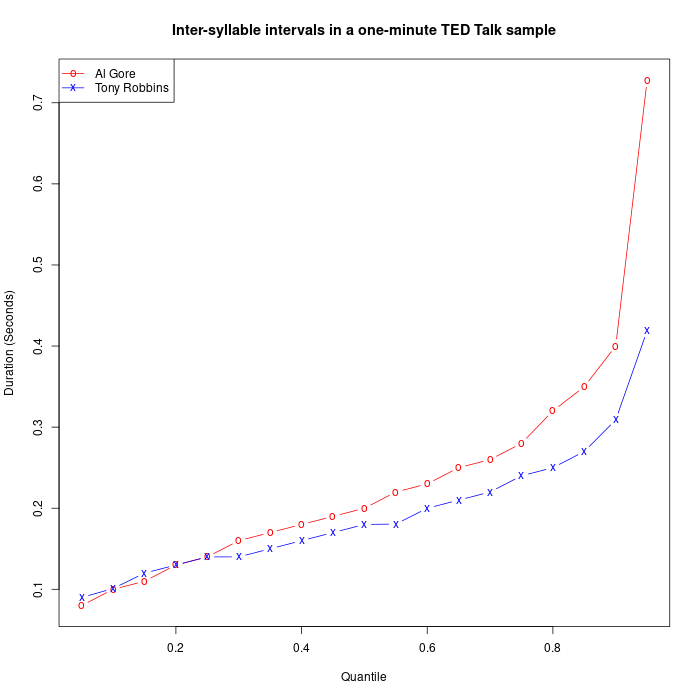

And as suggested in Monday's post, it's possible to interpret the speech-segment/silence-segment differences as an aspect of the syllable-level timing, as in this plot of the quantiles of time between adjacent syllables:

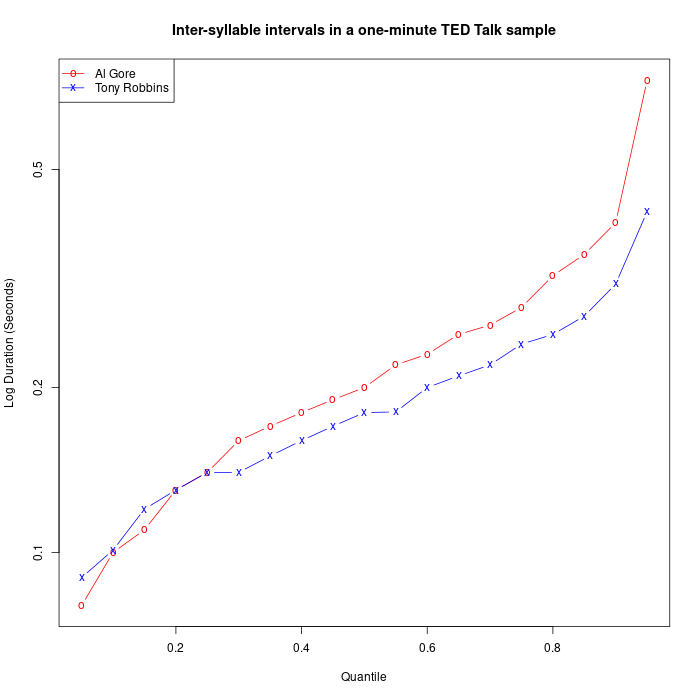
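The calculation behind a plot like this can be sketched in a few lines, assuming we already have one time point per detected syllable. The names `syllable_times` and `inter_syllable_intervals` are hypothetical, and the time values are made up for illustration; they are not taken from the TED samples, and the syllable-detection step itself is not shown.

```python
from statistics import quantiles


def inter_syllable_intervals(syllable_times):
    """Time differences (seconds) between successive syllable time points."""
    times = sorted(syllable_times)
    return [later - earlier for earlier, later in zip(times, times[1:])]


# Hypothetical syllable time points in seconds. The two long gaps stand in
# for phrase-level pauses; the rest are ordinary within-phrase intervals.
syllable_times = [0.10, 0.28, 0.45, 0.66, 0.81, 1.90, 2.05, 2.24, 2.40, 3.55, 3.72]

intervals = inter_syllable_intervals(syllable_times)

# Nine cut points dividing the intervals into ten equal-probability groups.
deciles = quantiles(intervals, n=10, method="inclusive")

for percent, value in zip(range(10, 100, 10), deciles):
    print(f"{percent:2d}%  {value:.3f} s")
```

Because pauses simply show up as long intervals, the upper quantiles carry the phrase-and-pause information while the lower ones reflect syllable-to-syllable timing.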

Or with the durations on a log scale:

It remains unclear whether (and how) such patterns can be reduced to a few diagnostically-insightful dimensions.

[Note — the "syllables" were identified by this simple automatic method…]

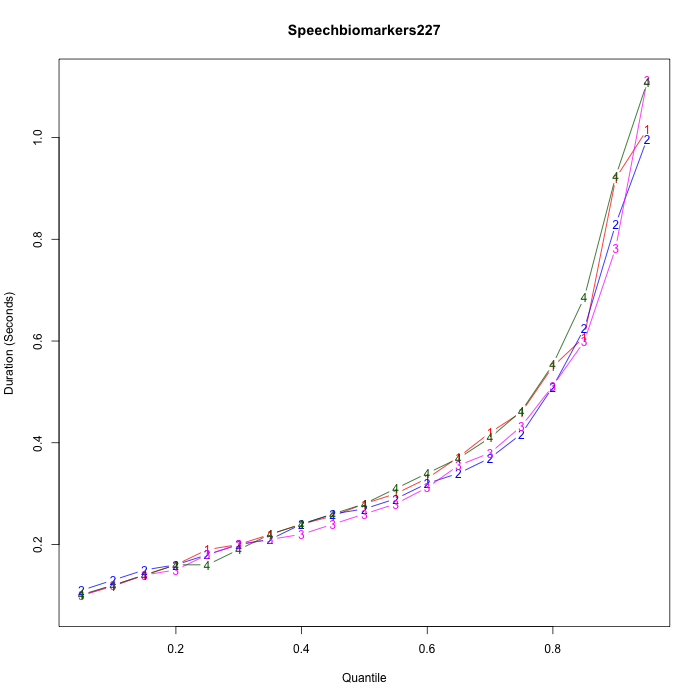

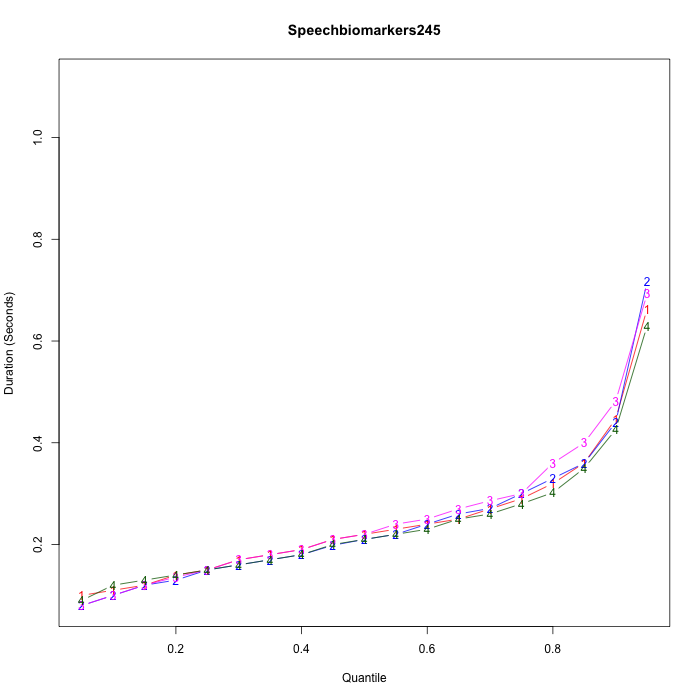

Update — Similar plots for four "picture description" recordings from two subjects in our "Speech Biomarkers" collection, chosen so that one speaks relatively fast (subject 245) and the other speaks relatively slowly (subject 227):

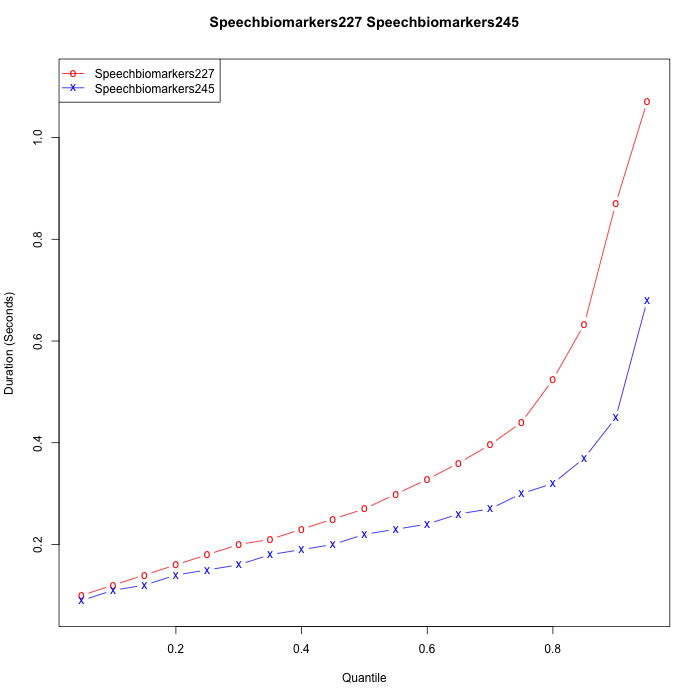

Combining all four recordings for each subject, and plotting them on the same graph:

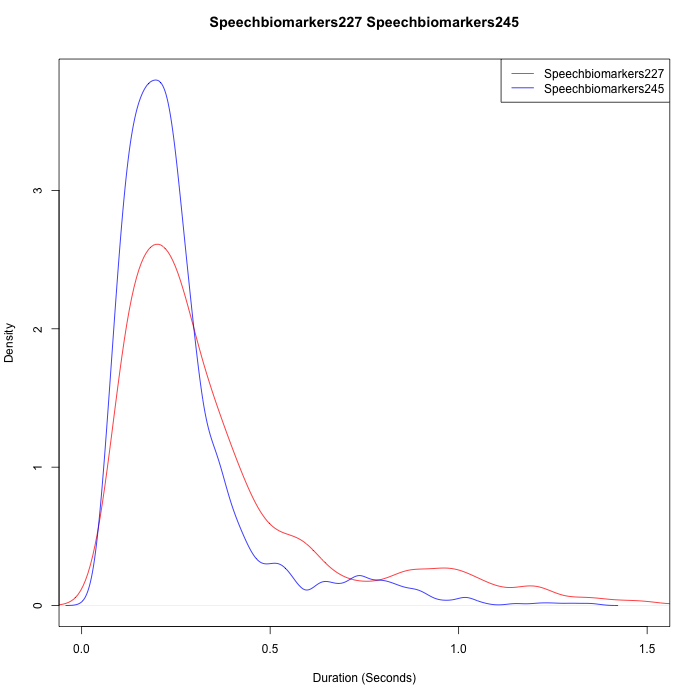

And a comparison of density plots:

Again, this way of looking at things displays syllable-to-syllable timing and phrase-pause timing on the same scale, without paying any attention to where there are (or aren't) silent pauses. Whether that's a meaningful (or at least useful) thing to do is not yet clear to me.
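One simple way to get short and long intervals onto a single scale is to take a histogram-based density over log durations. The sketch below does that with the standard library only. It is an illustration under my own assumptions (the function name `log_interval_density`, the bin count, and the made-up interval values), not necessarily how the density plots in this post were produced.

```python
import math


def log_interval_density(intervals, n_bins=8):
    """Histogram density estimate over log10 of interval durations (seconds)."""
    logs = [math.log10(x) for x in intervals if x > 0]
    lo, hi = min(logs), max(logs)
    if hi == lo:
        raise ValueError("need at least two distinct interval durations")
    width = (hi - lo) / n_bins
    counts = [0] * n_bins
    for value in logs:
        # Clamp so the maximum value lands in the last bin.
        index = min(int((value - lo) / width), n_bins - 1)
        counts[index] += 1
    edges = [lo + i * width for i in range(n_bins + 1)]
    # Normalize so the bar areas sum to 1.
    density = [count / (len(logs) * width) for count in counts]
    return edges, density


# Hypothetical inter-syllable intervals in seconds, including two long pauses.
intervals = [0.18, 0.17, 0.21, 0.15, 1.09, 0.15, 0.19, 0.16, 1.15, 0.17]
edges, density = log_interval_density(intervals)

for left, height in zip(edges, density):
    print(f"log10(s) >= {left:6.3f}  density {height:.2f}")
```

With data like this the result is bimodal: a cluster of short syllable-to-syllable intervals and a separate, smaller cluster of pause-length intervals, with no explicit silence detection involved.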

Update — Some neat distribution-modeling by Tarun Raheja.

He tried a number of ways to fit such curves, and ended up with

which produces fits like this:

He then applied t-SNE to the table of four parameters across the (I think individual) picture description recordings, and got this:

…which basically looks like a swirly one-dimensional distribution, and might be a useful parameter across individuals and occasions.

Benjamin E. Orsatti said,

September 15, 2023 @ 7:47 am

So, how long before businesses start using AI during job interviews to screen out applicants whose speech patterns evidence that they are "different" and, therefore, too "risky"?

Mark Liberman said,

September 15, 2023 @ 11:38 am

@Benjamin E. Orsatti: "how long before businesses start using AI during job interviews to screen out applicants whose speech patterns evidence that they are 'different' and, therefore, too 'risky'?"

Not long enough, obviously — but keep in mind that interviewers have always done exactly this using their own (often biased and wrong) interpretation of an interviewee's speech patterns…

Update — and also, this page notes that "one-third of HR professionals are using personality assessment tests and pre-employment testing during the hiring and interview process for executive roles."

Benjamin E. Orsatti said,

September 15, 2023 @ 12:40 pm

Prof. Liberman,

This is true. So, can I hire you as an expert in the event I should ever have to cross-examine a chat bot?

What also struck me, related to your other similar post (https://languagelog.ldc.upenn.edu/nll/?p=60543), is that it wouldn't be too far-fetched to imagine companies, looking to weed out non-"neurotypicals" (e.g. ASD, etc.), simply finding a way to "peg" speech timing to, say, a Myers-Briggs classification, and take the position: "Well, we're only looking for ESTJs, and the 'quantif[ied] temporally local variation in the relative rates of speech signals' of this applicant indicated INFP, so, he's SOL."