QWERTY: Failure to replicate

Following up on "The QWERTY effect", 3/8/2012, I got this email earlier today from Peter Dodds:

I just saw your piece on these curious QWERTY findings re positivity. I see you used the ANEW data set, and this has prompted me to point to our much larger data set (just over 10,000 words) that we have for positivity/happiness (I've been meaning to write for a while). I've attached the scores and the relevant paper is here:

Dodds et al., "Temporal Patterns of Happiness and Information in a Global Social Network: Hedonometrics and Twitter", PLoS ONE 2011.

There's a lot in this paper which is focused on measuring happiness in real time using Twitter. But a few quick key points:

1. Our scores correlate very well with those of the original ANEW study.

2. We compiled our list of words using the 5000 most frequently used words in four corpora: Twitter, NY Times (20 years), music lyrics (50+ years), and Google Books (100s of years).

3. Because of 2., our coverage of texts is much more complete.

4. We found a natural way to select stop words.

5. The resulting instrument for measuring happiness is much improved over the previous one which we based on the ANEW study. It feels very similar to making a better physical instrument (e.g., a microscope) and being able to then see more detail and structure.

A related shorter paper you might find of interest is here:

Kloumann et al., "Positivity of the English Language", PLoS ONE 2012.

Here we show that there is a positive bias to at least the `atoms' of language, and that this bias is independent of frequency of usage (the Pollyanna Hypothesis).

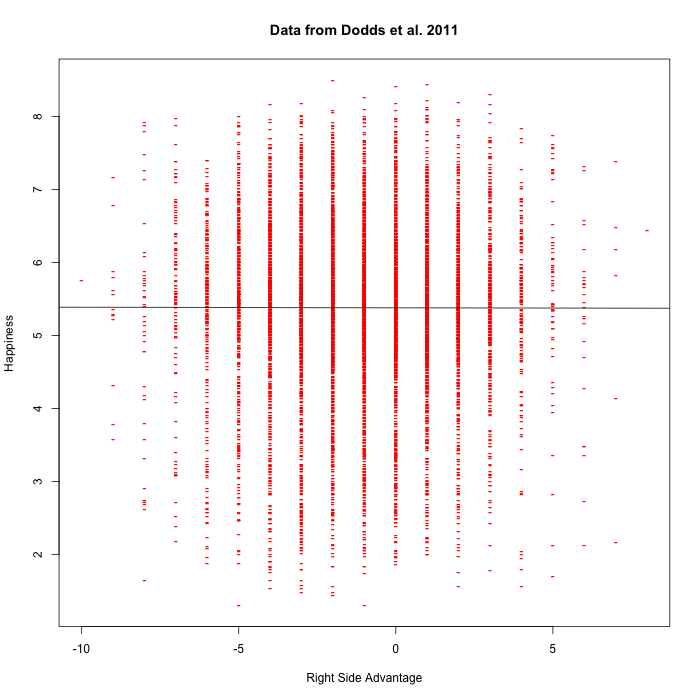

I removed a few of the words in their list that contain non-alphabetic characters (e.g. "7th", "how's"), leaving 9912 word types (compared to 1032 in ANEW). A plot of the relationship between "right-side advantage" and "happiness" for those words is here:

The slope of the fitted regression line is -0.0008636 ± 0.0044066. This linear regression based on the (QWERTY) "right-side advantage" accounts for about four one-millionths of the variance in "happiness" scores. For what little it's worth, the probability of doing this well by chance was estimated at 0.845.
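For readers who want to try this kind of check themselves, here is a minimal sketch in Python. It is not the code behind the numbers above. It assumes that "right-side advantage" means the count of right-hand QWERTY letters minus the count of left-hand letters, it assumes a particular split of the keyboard, and the word scores in `toy_scores` are invented purely for illustration; the real scores would come from the data set described in the email.

```python
import math

# Assumed split of the QWERTY letter keys between the two hands.
LEFT = set("qwertasdfgzxcvb")
RIGHT = set("yuiophjklnm")


def right_side_advantage(word):
    """Right-hand letter count minus left-hand letter count (assumed definition)."""
    w = word.lower()
    return sum(c in RIGHT for c in w) - sum(c in LEFT for c in w)


def ols(xs, ys):
    """Ordinary least squares of ys on xs.

    Returns (slope, intercept, standard error of the slope, R squared).
    Needs at least three points and some variation in both xs and ys.
    """
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    syy = sum((y - mean_y) ** 2 for y in ys)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # Residual sum of squares around the fitted line.
    sse = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))
    r_squared = 1.0 - sse / syy
    slope_se = math.sqrt(sse / (n - 2) / sxx)
    return slope, intercept, slope_se, r_squared


# Hypothetical scores, made up for this example only.
toy_scores = {"joy": 8.0, "hill": 5.5, "war": 2.0,
              "free": 7.9, "pain": 2.1, "table": 5.0}

rsa = [right_side_advantage(w) for w in toy_scores]
happiness = list(toy_scores.values())
slope, intercept, slope_se, r_squared = ols(rsa, happiness)
print(f"slope = {slope:.4f} ± {slope_se:.4f}, R^2 = {r_squared:.6f}")
```

With six invented words the output means nothing; the point is only the shape of the calculation: one RSA value per word, one happiness score per word, and a straight-line fit whose R² tells you what fraction of the variance the line accounts for.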

Perhaps the regression should be weighted by word frequency, but I'm done for now.

Update — more from Dodds' lab is here.

RW said,

March 13, 2012 @ 7:38 pm

I wondered again whether taking average values of valence for each RSA might reveal patterns that are otherwise obscure. As before, I find nothing. Plotting average ± std. dev. of valence against RSA shows clearly that there is no relationship. From a linear fit I get mean valence = 5.348 - 0.011*RSA, and the uncertainty on the slope is reported as NaN by gnuplot.

Neuroskeptic said,

March 14, 2012 @ 3:45 am

Nice post.

The interpretation seems odd anyway. You could equally well say, I don't know, right-dominant words are typed with the right hand, the right hand is controlled by the left side of the brain, and that's the "happy half" (there's actually some evidence for that, now I come to think about it…)

Or most people are right-handed, so they find it easier to type right-sided words.

Dr. Decay said,

March 14, 2012 @ 5:49 am

I love it. It feels like those 3-sigma bumps that so often later disappear in high energy physics. Except that here one e-mail provided an almost 10-fold increase in the amount of data.

Jason said,

March 14, 2012 @ 8:13 am

Perhaps box plots would be easier to interpret here? Or is the effect really too small to see by eyeballing it no matter how you chart it?

[(myl) Here's a violin plot:

(Note that the data is a bit thin for the RSA values at the left and right edges…)]

Jeff Binder said,

March 14, 2012 @ 8:49 am

I'm pretty sure the conclusion is that there is no effect.

JW Mason said,

March 14, 2012 @ 2:56 pm

This is beautiful: both the QWERTY guys' insistence on regarding this clearly null finding as being significant, and Mark Liberman's patient demolition job.

D.O. said,

March 14, 2012 @ 10:05 pm

I decided to do a quick paper for Annals of Absurd Research and calculated information gain for letter frequencies conditional on happiness in Dodds' data. As base frequencies I took the Cornell dataset.

Here are the results:

| happiness index | information gain (bit/letter) | error | number of letters | number of words |
|---|---|---|---|---|
| -10 | 1.220 | 1.455 | 10 | 1 |
| -9 | 0.631 | 0.411 | 145 | 13 |
| -8 | 0.565 | 0.183 | 378 | 41 |
| -7 | 0.513 | 0.090 | 882 | 106 |
| -6 | 0.372 | 0.090 | 2004 | 250 |
| -5 | 0.284 | 0.079 | 3678 | 498 |
| -4 | 0.212 | 0.074 | 5446 | 807 |
| -3 | 0.115 | 0.071 | 7323 | 1125 |
| -2 | 0.078 | 0.069 | 8592 | 1419 |
| -1 | 0.053 | 0.068 | 9408 | 1598 |
| 0 | 0.070 | 0.072 | 8556 | 1494 |
| 1 | 0.151 | 0.078 | 6620 | 1138 |
| 2 | 0.268 | 0.086 | 4558 | 744 |
| 3 | 0.411 | 0.098 | 2553 | 409 |
| 4 | 0.589 | 0.115 | 1164 | 174 |
| 5 | 0.682 | 0.137 | 495 | 63 |
| 6 | 0.846 | 0.277 | 200 | 25 |
| 7 | 1.163 | 0.613 | 50 | 6 |
| 8 | 1.789 | 1.376 | 10 | 1 |

I think I am totally on to something.

The Bad Science Reporting Effect - Lingua Franca - The Chronicle of Higher Education said,

March 16, 2012 @ 2:40 am

[…] one but roughly agreeing with it. He re-ran the experiment on this improved data. The result: total absence of the alleged right-hand bias (0.000004 of the variance; chances of a similar result by mere chance are about 85 percent). There […]

Does QWERTY Affect Happiness? | Computational Story Lab said,

March 29, 2013 @ 4:47 am

[…] taken place between Mark Liberman of the Language Log blog and the authors of the study. See post1, post2, the response from J&C, and the response back. After being informed by (our) Peter Dodds of […]