Bonferroni rules


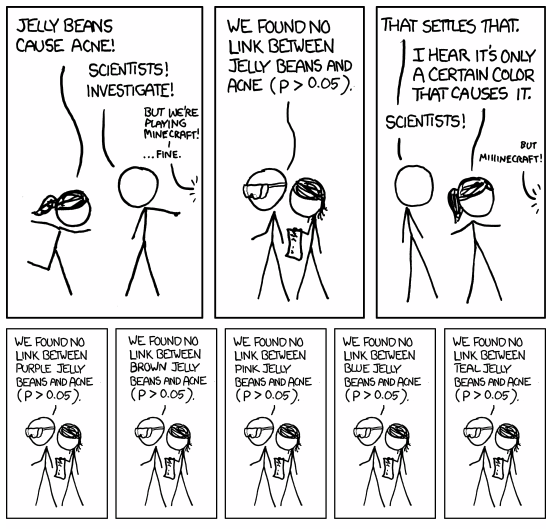

The most recent xkcd illustrates the problem of multiple comparisons:

(As usual, click on the image for a larger version.)

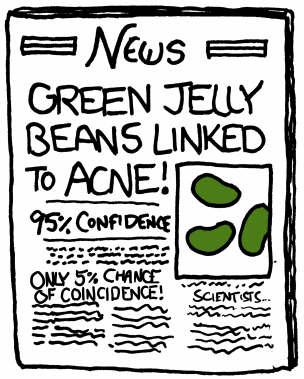

After 14 more "not significant" results,

The mouseover title:

"'So, uh, we did the green study again and got no link. It was probably a–' 'RESEARCH CONFLICTED ON GREEN JELLY BEAN/ACNE LINK; MORE STUDY RECOMMENDED!'"

As Wikipedia explains,

The Dunn-Bonferroni correction is derived by observing Boole's inequality. If you perform n tests, each of them significant with probability β, (where β is unknown) then the probability that at least one of them comes out significant is (by Boole's inequality) ≤ n⋅β. Now we want this probability to equal α, the significance level for the entire series of tests. By solving for β, we get β = α/n.
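The derivation quoted above can be written out in one line. This is only a sketch: the event notation $A_i$ (test $i$ comes out significant although the null hypothesis is true) is introduced here for illustration and is not in the Wikipedia passage.

$$
P\Big(\bigcup_{i=1}^{n} A_i\Big) \;\le\; \sum_{i=1}^{n} P(A_i) \;=\; n\beta,
\qquad
n\beta = \alpha \;\Longrightarrow\; \beta = \frac{\alpha}{n}.
$$

Taking $n = 20$ tests and $\alpha = 0.05$ as an example, each individual test would need $p < 0.05/20 = 0.0025$. If we additionally assume the 20 tests are independent, the uncorrected chance of at least one spurious "significant" result is $1 - 0.95^{20} \approx 0.64$.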

Relevant background reading: M R Munafò et al., "Bias in genetic association studies and impact factor", Molecular Psychiatry 14: 119–120, 2009, discussed here.

Among R.A. Fisher's several works of public-relations genius, the greatest was the appropriation of the word significant to mean "having a low probability of occurrence if the null hypothesis is true".

Update — If you'd like to do a version of the jelly-bean experiments yourself, without cutting into your Minecraft time, you could try something like this (in R), which generates a couple of sets of random numbers (from an underlying distribution that never changes) and then tests the null hypothesis that the means of the two sets are equal.

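    NSAMPLES <- 15              # observations in each of the two sets
    NTESTS <- 100               # number of t-tests to run
    pvalues <- numeric(NTESTS)  # one p-value per test
    for (n in 1:NTESTS) {
      # both sets come from the same uniform distribution, so the null hypothesis is true
      y1 <- runif(NSAMPLES); y2 <- runif(NSAMPLES)
      X <- t.test(y1, y2, var.equal=TRUE)
      pvalues[n] <- X$p.value
    }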

Then sum(pvalues<0.05) turns out to be (the first few times I ran it) 7,7,6,7,3,4,5, …

In other words, about 5% of the time, the null hypothesis is estimated to have a probability below 5%. (We should resist the temptation to violate Charlie Sheen's trademark on "Duh".)
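To see what a Bonferroni correction does to counts like these, here is a minimal sketch that repeats the same kind of simulation and then applies the correction in two equivalent ways. The seed and the variable names `alpha`, `n_raw`, `n_bonf` and `n_bonf_adj` are arbitrary choices made for this illustration. `p.adjust` is the base-R function for adjusting p-values.

```r
set.seed(1)  # arbitrary seed, used only so the run is repeatable
NSAMPLES <- 15
NTESTS <- 100
pvalues <- numeric(NTESTS)
for (n in 1:NTESTS) {
  # both sets come from the same distribution, so every "hit" is a false positive
  y1 <- runif(NSAMPLES); y2 <- runif(NSAMPLES)
  pvalues[n] <- t.test(y1, y2, var.equal = TRUE)$p.value
}
alpha <- 0.05
# uncorrected count of "significant" results
n_raw <- sum(pvalues < alpha)
# Bonferroni: compare each p-value with alpha / NTESTS ...
n_bonf <- sum(pvalues < alpha / NTESTS)
# ... or multiply the p-values by NTESTS (capped at 1) and compare with alpha
n_bonf_adj <- sum(p.adjust(pvalues, method = "bonferroni") < alpha)
cat("uncorrected:", n_raw, " Bonferroni:", n_bonf, "\n")
```

With the corrected threshold, a false positive anywhere in the whole series of 100 tests should turn up in at most about 5% of runs, rather than a handful turning up in nearly every run.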

Moacir said,

April 6, 2011 @ 7:27 am

Small point: I was completely confused by the cartoon from the edited version given above, which does not include the panel where green jelly beans are tested and yield a p < .05.

Ellen K. said,

April 6, 2011 @ 7:28 am

It's 14 (not 15) more "no link", and one "found a link" (for green).

Rodger C said,

April 6, 2011 @ 7:37 am

Still smaller point: I went straight from XKCD to this site, and at first I thought I was suffering a computer glitch.

Chris said,

April 6, 2011 @ 7:38 am

Can't help but be reminded of the recent Supreme Court brief decrying the misunderstanding of significance testing (pdf).

[(myl) One of the authors of that brief has written a lovely pamphlet "The Secret Sins of Economics", discussed here. One of the sins is "significance" testing without a loss function.]

Lauren said,

April 6, 2011 @ 8:24 am

@Roger: I went from XKCD directly here KNOWING I would find a post about it!

:-)

alex boulton said,

April 6, 2011 @ 10:12 am

p>0.05?

p<0.05

[(myl) I suspect that this reversal is a case of misnegation, caused by the fact that the journalist phrases the question in a positive way ("Jellybeans cause acne!"), but the scientists give it back in a negative form ("we found no link between jellybeans and acne").

Either way, this illustrates an interesting point about statistical inference. What the journalist wants — and what Randall's scientists say they're giving her — is an evaluation of the hypothesis that jellybeans cause acne. But what their statistical test (whatever it happens to be) actually gives them has nothing to do with causation. It's an evaluation of the null hypothesis that the acne measures of the jelly-bean subjects and the non-jelly-bean subjects could have been the result of sampling twice from the same population.

As the cartoon illustrates, if you keep making repeated random samples from the same population, about 5% of the time you'll get a result whose probability of being the result of a random sample from the same population is less than 5%. However, a correct statement of what the statistical test actually tests is too cognitively complex for most people to be comfortable with it, and I suspect that this includes many biomedical and other researchers.

Of course, even if we believe that the rejection of the null hypothesis is probably right, this leaves open many alternatives, such as (in this case) the possibility that acne causes jelly-bean consumption, or that some third factor influences both.]

John Cowan said,

April 6, 2011 @ 10:21 am

In the Secret Sins post, you wrote: "And in fact I think that there are some remarkably similar difficulties in contemporary academic linguistics, a point that might be worth taking up in some future post."

Did that ever happen? (Hint, hint.)

Mr Fnortner said,

April 6, 2011 @ 10:34 am

On a distantly related question, if you're looking for something that may not exist, does the probability of finding it increase as your search exhausts places to look?

Rob P. said,

April 6, 2011 @ 11:03 am

@Chris – The S.Ct. did uphold the 9th circuit, but did not appear to rely much on the analysis in that brief. Instead, the court noted that, "The FDA similarly does not limit the evidence it considers for purposes of assessing causation and taking regulatory action to statistically significant data. In assessing the safety risk posed by a product, the FDA considers factors such as “strength of the association,” “temporal relationship of product use and the event,” “consistency of findings across available data sources,” “evidence of a dose-response for the effect,” “biologic plausibility,” “seriousness of the event relative to the disease being treated,” “potential to mitigate the risk in the population,” “feasibility of further study using observational or controlled clinical study designs,” and “degree of benefit the product provides, including availability of other therapies.”"

Because regulatory agencies and medical professionals might rely on other than statistically significant studies, it is reasonable for an investor to do so as well. That is, they didn't concentrate much on what statistical significance really means, but instead determined that statistical significance is not a good bright line rule as to what might be material to an investor's decision to buy a security.

Matt Heath said,

April 6, 2011 @ 11:12 am

@Mr Fnortner: The probability of finding it at all or the probability of finding it in the next place you look?

The former will decrease since when you strike off an option then all remaining options (including the thing not existing) will have their probabilities revised upwards according to Bayes' theorem.

The latter would rise if all locations were equally likely to begin with. If the prior probabilities fell away too quickly (and you were checking the most likely places first) it would fall.
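A worked version of the equal-prior case, as a sketch under assumptions not stated in the comment: the thing exists with prior probability $q$, is equally likely to be in any of $k$ places if it exists, and $m$ places have been searched without success (event $E_m$).

$$
P(\text{exists} \mid E_m) = \frac{q\,(1 - m/k)}{1 - q\,m/k},
\qquad
P(\text{in the next place} \mid E_m) = \frac{q/k}{1 - q\,m/k}.
$$

The first expression falls from $q$ at $m = 0$ to $0$ at $m = k$, while the second rises with $m$, which matches the two answers given above.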

Steve Kass said,

April 6, 2011 @ 1:15 pm

I agree with the part after the dash.

Unfortunately, the jargon of statistics is dreadful: significant is only one example of statistical terminology with a disciplinary meaning that’s counter-intuitive to the vernacular. This creates a greater obstacle than essential mathematical sophistication, I think, to the clear expression and understanding of statistical concepts.

Earlier this week, the Wall Street Journal’s Numbers Guy, Carl Bialik, wrote about statistical significance and the Court’s recent decision. Bialik, like most journalists, failed to express the statistics correctly. My guess is not that the concepts were cognitively too complex for Bialik to be comfortable with them, but that he failed to attribute much importance to being precise about them.

KevinM said,

April 6, 2011 @ 1:19 pm

"statistical significance is not a good bright line rule as to what might be material to an investor's decision to buy a security."

Yes; it's possible to quantify in dollars the reduction in value of a house that people think is haunted. The stock market is a Keynesian beauty contest, governed not by what the judges think but by what they think other judges think (about what other judges think about what other judges think ….) The interesting question is whether the courts should be strictly market-neutral or whether there are good institutional reasons why they should resist letting people sue and recover damages on this basis.

Carl said,

April 6, 2011 @ 3:11 pm

The moral of the story is that the higher a p-value a field allows, the higher the percentage of material produced by the field will be wrong—even under the best case scenario (no fraud, no experimental error, etc.).

D.O. said,

April 6, 2011 @ 4:59 pm

I think that, among the other undoubtedly correct points about the misunderstanding and misuse of statistics already mentioned, one thing has not been pointed out. Looking first at the type I error probability is a reasonable approach when evaluating a Good Thing (green jelly beans cure acne!); if this test is passed, then one should look at other things. But with Bad Things (green jelly beans cause acne!) it is more reasonable to start by estimating the type II error.

Rubrick said,

April 6, 2011 @ 5:54 pm

Not directly addressed in the xkcd is the fact that there's nothing special about the multiple comparisons being "of the same sort". If you take any 20 studies which yield a conclusion with p < .05 (even if they're completely unrelated), one of them will likely be due to chance. This is of course why it's so crucial that studies be repeated; but I think that in a lot of fields it often doesn't happen. (Who wants to spend their precious grant money repeating a study that's already been done, and for which someone else has already reaped the glory?)

This issue and much else are addressed in this essay, which I think has been mentioned previously on LL.

Jonathan D said,

April 6, 2011 @ 8:07 pm

The "Duh" is even more obvious when you realise that the p-value does not give an estimate of the probability of the null hypothesis, but simply the probability, assuming the null hypothesis, of getting the results you did or something even further from what was expected.

Mark F. said,

April 6, 2011 @ 11:48 pm

A NY Times article about a possible discovery at Fermilab had this passage:

The difference in the threshold for being interesting is striking, but I guess it probably just follows from the large number of trials that are being figured into a Bonferroni calculation.

army1987 said,

April 7, 2011 @ 5:46 am

IMO, frequentist probability theory is conceptually backwards when you're trying to figure out a mechanism from its behaviour (rather than vice versa, as when calculating the probability of dice toss results when you already know the dice are unbiased). That's what Bayesian probability theory is for. I think the only reason people (not counting those who don't understand the difference) use it is that when N is big enough and the distributions are all in the ballpark of being Gaussian it gives similar results with simpler maths.

Signifikant | Texttheater said,

April 7, 2011 @ 7:46 am

[…] to see an act of successful divine communication. I was reminded of this yesterday when I read on Language Log that the creation of the term statistically significant (from Latin signum: sign!) was the […]

Brett said,

April 7, 2011 @ 8:14 am

@Mark F.: In particle physics, the standard for discovery of a new spectral feature (e.g. a new particle type) is always quoted as requiring 5 sigma. Confirmation of a discovery is sometimes said to require 10 sigma. The existence of a huge number of simultaneous trials is exactly why.

Phil said,

April 7, 2011 @ 8:46 am

@Mark F: In particle physics we frequently get interested by effects at the "2 sigma" level, but the standard to be able to claim a discovery is almost universally agreed to be 5 sigma (i.e., p<3e-7). This is partly because of multiple comparisons (although this tends to be corrected for) but mostly because our results often depend strongly on corrections taken from models of processes which might not be well known, and the idea is that requiring 5 sigma significance puts you beyond the level where problems with the models are likely to cause a fake signal.

@army1987: I agree, although the situation is a bit different in particle physics, where nearly everyone favours frequentist methods (see, e.g., Section 6 of http://www.physics.ox.ac.uk/phystat05/proceedings/files/oxford_cousins_final.pdf ), even for small N.
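For readers who want to turn the "sigma" thresholds mentioned above into p-values, here is a small sketch. It assumes a one-sided tail of a standard normal distribution, which is the convention that gives the p<3e-7 figure quoted for 5 sigma. The helper name `sigma_to_p` is made up for this example.

```r
# one-sided tail probability of a standard normal beyond k sigma
sigma_to_p <- function(k) pnorm(k, lower.tail = FALSE)

p2 <- sigma_to_p(2)  # the "interesting" level, roughly 0.023
p5 <- sigma_to_p(5)  # the "discovery" level, roughly 2.9e-07
print(c(two_sigma = p2, five_sigma = p5))
```

A two-sided convention would double both numbers.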

Rebecca said,

April 7, 2011 @ 1:24 pm

On a more minor point of your post–

Naomi Baron has studied cultural differences in cell phone use and in views of the (in)appropriateness of speaking and texting in public. From her website (http://www.american.edu/cas/faculty/nbaron.cfm):

Rebecca said,

April 7, 2011 @ 1:26 pm

Whoa, sorry. Wrong post.

Theo Vosse said,

April 10, 2011 @ 3:03 am

On a more linguistic point, and related to the comment on statistical jargon: shouldn't the statement in the 2nd panel (and following) be: we did not find a link …? To truly establish that there is no link would require a power analysis; just p > 0.05 is not enough.

mgh said,

April 10, 2011 @ 8:35 am

Among R.A. Fisher's several works of public-relations genius, the greatest was the appropriation of the word significant to mean "having a low probability of occurrence if the null hypothesis is true".

In fact, I've dealt with copy-editors at journals who insist on using "significant" only when discussing statistics. This is one of the few copy-editorisms I go along with, since it's easy to substitute "substantial" for the other use, and since statistics are used confusingly enough without adding uncertainty as to whether they're being used at all!

Randal said,

April 14, 2011 @ 3:24 am

The discussion of "significant" reminds me of a passage in Edward Tufte's rant "The Cognitive Style of PowerPoint" in which he dissects a NASA slide given by engineers to managers while the Columbia was still in orbit following the foam strike:

The vaguely quantitative words "significant" and "significantly" are used 5 times on this slide, with de facto meanings ranging from "detectable in largely irrelevant calibration case study" to "an amount of damage so that everyone dies" to "a difference of 640-fold." None of these 5 usages appears to refer to the technical meaning of "statistical significance."

~~Randal