Consonant effects on F0 of following vowels


I spent the past couple of days at a workshop on lexical tone, organized by Kristine Yu at UMass. A topic that came up several times was the question of whether "segmental" influences on pitch — for instance, the fact that voiceless consonants are typically associated with a higher pitch in the first part of a following vowel — might be diminished or even eliminated in languages with lexical tone. Several participants observed that the evidence for this is not very strong: the classical paper on the subject studied a small number of utterances from one speaker in Thai, for example.

So for this morning's Breakfast Experiment™, I wrote a little script that calculates and displays (one way of looking at) these effects in the TIMIT dataset, which includes 10 English sentences spoken by each of 630 speakers. (Specifically, there are two sentences spoken by all 630 speakers; 450 sentences spoken by 7 speakers each; and 1890 sentences spoken by a single speaker.)

I had to go to a meeting before I had a chance to write up the results, but the meeting ended early enough for me to find 15 minutes before lunch, so:

My script pitch-tracked all the sentences, and located all the places where one of the consonants "b", "d", "g", "k", "m", "n", "p", "t" was followed by one of the vowels "aa", "ae", "ah", "ay", "eh", "er", "ey", "ih", "ix", "iy" (in ARPABET). I pulled out the first 50 msec. of estimated F0 values from the designated vowels — 10 estimates at a 5-msec. frame advance. I expressed the F0 estimates in each 10-element vector as ratios to the mean value of that vector.
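The normalization step just described can be sketched in a few lines. This is in Python rather than the shell and R of the scripts actually used. The function names are made up for illustration, and averaging the normalized vectors per consonant is an assumption about how the plot was summarized.

```python
from collections import defaultdict

def normalize(f0_frames):
    """Express each F0 estimate as a ratio to the mean of its own vector."""
    mean = sum(f0_frames) / len(f0_frames)
    return [v / mean for v in f0_frames]

def mean_contours(tokens, n_frames=10):
    """Average the normalized contours separately for each consonant.

    tokens: iterable of (consonant, f0_frames) pairs, where f0_frames holds
    the n_frames estimates (5-msec frame advance) from the start of the vowel.
    Returns {consonant: [mean ratio at frame 0, ..., frame n_frames-1]}.
    """
    sums = defaultdict(lambda: [0.0] * n_frames)
    counts = defaultdict(int)
    for consonant, frames in tokens:
        for i, ratio in enumerate(normalize(frames)):
            sums[consonant][i] += ratio
        counts[consonant] += 1
    return {c: [s / counts[c] for s in sums[c]] for c in sums}
```

Dividing by the vector's own mean removes differences in overall pitch between speakers, so the contours can be pooled across all 630 of them.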

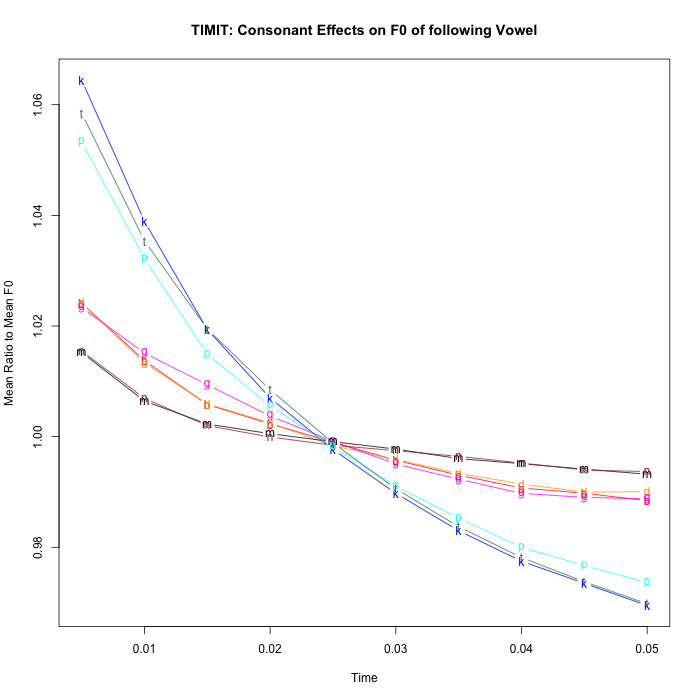

A plot of the results:

This clearly shows the expected effects, with /p/ /t/ /k/ showing an average fall of about 10%, whereas /b/ /d/ /g/ show about a 3% fall, and /m/ /n/ show even less.

It's nice to see that such a crude technique produces such clean results. This is presumably due to the size of the dataset (small by today's speech-technology standards, but enormous by the standards of most phonetics research), and perhaps the dataset's balanced character (though I suspect that conversational or broadcast-news datasets will show similar effects, if they're large enough). The counts involved in this case (after automatically removing examples with period-doubling or other pitch-tracking errors):

| CONSONANT | COUNT |
| --- | --- |
| b | 1786 |
| d | 2314 |
| g | 649 |
| p | 1361 |
| t | 2615 |
| k | 2347 |
| m | 2837 |
| n | 2055 |
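The post doesn't say how examples with period-doubling or other pitch-tracking errors were removed. One plausible screen, offered purely as an assumption and not as the criterion actually used, is to drop any 10-frame vector that contains an unvoiced frame or an implausibly large jump between adjacent frames. The function name and the `max_jump` threshold below are hypothetical.

```python
def looks_clean(f0_frames, max_jump=1.5):
    """Return True if the vector has no unvoiced frames and no big jumps.

    Assumptions (not from the post): unvoiced frames are reported as F0 <= 0,
    and a frame-to-frame ratio beyond max_jump (or below 1/max_jump) is
    treated as a tracking error such as period doubling or halving.
    """
    if any(v <= 0 for v in f0_frames):
        return False
    for prev, cur in zip(f0_frames, f0_frames[1:]):
        ratio = cur / prev
        if ratio > max_jump or ratio < 1 / max_jump:
            return False
    return True
```

A halving from 200 Hz to 100 Hz between adjacent 5-msec frames gives a ratio of 0.5 and is rejected, while a gradual 10% fall spread over 50 msec passes easily.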

No doubt there are effects of vowel, of stress, of syllabification, of word and phrase position, of speaker, etc. — which can be explored via regression in a dataset of this type, though another breakfast or two would be required.

Now if we only had TIMIT-like datasets for French, German, Chinese, Thai, Yoruba, Chinantec, Pashto, etc.!

Someday, I hope, we will…

Update — for the record, here are the scripts involved — it would be nice if there were an easier and more standard way of doing it. Again, someday…

This script does the pitch tracking,

    #!/bin/sh
    #
    for f in `cat filelist`
    do
      echo $f
      get_f0 -P /home/myl/bin/params60_650 new/$f.wav new/$f.f0
      fea_print /home/myl/bin/layout new/$f.f0 >new/$f.af0
    done

where filelist is a list of pathnames to the 6300 TIMIT file prefixes, e.g.

    TEST/DR1/FAKS0/SA1
    TEST/DR1/FAKS0/SA2
    TEST/DR1/FAKS0/SI1573
    TEST/DR1/FAKS0/SI2203
    TEST/DR1/FAKS0/SI943

and get_f0 and fea_print are part of the esps package. The params60_650 file is

    float min_f0 = 60.0;
    float max_f0 = 650.0;
    float wind_dur = 0.01;
    float frame_step = 0.005;

and the layout file is

layout=f0 F0[0] %.2f prob_voice[0] %.1f rms[0] %.2f ac_peak[0] %.3f\n
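With that layout, each frame should come out of fea_print as one line holding four numbers: F0, probability of voicing, RMS, and autocorrelation peak. Here is a rough Python sketch for reading such a file back in. It assumes the fields are whitespace-separated in exactly that order, and the function name is made up for illustration.

```python
def parse_af0(lines):
    """Parse fea_print output into a list of per-frame dicts.

    Assumes one frame per line with four whitespace-separated numbers in the
    order given by the layout: F0, prob_voice, rms, ac_peak. Lines that do
    not have exactly four fields are skipped.
    """
    frames = []
    for line in lines:
        fields = line.split()
        if len(fields) != 4:
            continue
        f0, prob_voice, rms, ac_peak = (float(x) for x in fields)
        frames.append(
            {"f0": f0, "prob_voice": prob_voice, "rms": rms, "ac_peak": ac_peak}
        )
    return frames
```

Since frame_step is 0.005, frame i in the returned list corresponds roughly to time i × 5 msec in the audio file.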

Then this shell script finds the cited CV pairs, and then this R script makes the plot.
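For readers without those scripts to hand, the CV search can be approximated in Python. This sketch is not the shell script itself. It assumes the standard TIMIT .phn format (start sample, end sample, label, at 16 kHz) and treats F0 frame i as starting at i × 5 msec, which is a simplification. The function names are hypothetical.

```python
CONSONANTS = {"b", "d", "g", "k", "m", "n", "p", "t"}
VOWELS = {"aa", "ae", "ah", "ay", "eh", "er", "ey", "ih", "ix", "iy"}
SAMPLES_PER_FRAME = 80  # 16000 samples/sec * 0.005 sec frame step

def parse_phn(lines):
    """Read TIMIT .phn lines of the form 'start_sample end_sample label'."""
    segments = []
    for line in lines:
        parts = line.split()
        if len(parts) == 3:
            segments.append((int(parts[0]), int(parts[1]), parts[2]))
    return segments

def cv_pairs(segments):
    """Find each listed consonant immediately followed by a listed vowel.

    Returns (consonant, vowel, frame_index) triples, where frame_index is the
    F0 frame in which the vowel begins.
    """
    pairs = []
    for (_, _, c), (v_start, _, v) in zip(segments, segments[1:]):
        if c in CONSONANTS and v in VOWELS:
            pairs.append((c, v, v_start // SAMPLES_PER_FRAME))
    return pairs
```

The 10 F0 estimates for a token would then be frames frame_index through frame_index + 9 of the corresponding pitch track.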

Stephen R. Anderson said,

June 5, 2014 @ 11:17 am

I hope you'll get this into the literature in a way that would be more straightforward to refer to than LanguageLog… I don't know what other references there are to the differential effects in tone vs. non-tone languages, but some data on Yoruba vs. English are found in an old paper by Jean-Marie Hombert (Hombert, Jean-Marie. 1976. Consonant types, vowel height and tone in Yoruba. UCLA Working Papers in Phonetics 33. 40–54). Again, just a few utterances, and within the limitations of such work at the time.

Christopher Cieri said,

June 5, 2014 @ 12:17 pm

While we are trying to promote multilingual TIMITs, we note that West Point has contributed some data sets to LDC that have TIMIT-like properties. Languages are: American English, Arabic, Brazilian Portuguese, Croatian, Korean, Russian, Spanish. One thing I like about the West Point data sets is the extraction of (presumably more natural) phonetically rich/balanced sentences from text rather than constructing them.

[(myl) Good idea. There are also some broadcast news collections to compare, especially including Mandarin.]

chh said,

June 5, 2014 @ 4:15 pm

This is really neat. If you did compare the English with some other languages, how would you deal with the difference in VOT times for the voicing categories and the sometimes different number of contrasting classes, since the VOT time itself has an effect on the pitch perturbation?

You wouldn't just compare the graph above with a graph of Thai /pʰtʰkʰ/ vs /bdg/, right?

Since the VOT difference between voiced and voiceless aspirated categories is way bigger than the one in English, you would expect the effect on F0 of the following vowels to be bigger independent of any effect of the presence of lexical tone.

[(myl) Good questions! I don't have determinate answers — one option would be to take account of the relationship between VOT and some measure of the F0 effect.]

Andries Coetzee said,

June 5, 2014 @ 11:00 pm

Two colleagues (Pam Beddor from Michigan, and Daan Wissing from North-West University in South Africa) and I just finished data analysis on a project that looked at this phenomenon in Afrikaans. Afrikaans used to have a prevoiced vs. voiceless unaspirated contrast — i.e. /b d/ vs. /p t/ realized as [b d] vs. [p t]. But the voicing contrast is being lost. Younger speakers (below 25 years of age) voice only around 20% of their historically voiced /b d/. The rest they realize as voiceless unaspirated [p t] with no VOT difference between [p t] coming from historically /b d/ and [p t] coming from historically /p t/. (Older speakers, over 50 years of age, still voice around 60% of their historically /b d/ tokens.) The contrast between historically /b d/ and /p t/ is being preserved, however, in F0 on the following vowel. F0 on vowels following historically /b d/ is about 40Hz lower than F0 on vowels following historically /p t/ — a neat example of tonogenesis as it is happening.

I am wondering what the raw Hz difference is for the English in TIMIT? Is it smaller than the 40Hz that we found in Afrikaans? Of the same size, roughly? Is it limited to vowel onset, or does it persist throughout the vowel? In Afrikaans, the difference persists throughout the vowel, although it does become smaller towards the end of the vowel.

This 40Hz lower F0 is observed after historical /b d/ irrespective of whether /b d/ is realized as actual voiced plosives [b d] or as their voiceless counterparts [p t]. The F0 on the following vowel is hence determined by the historical (or phonological, if you want to use that term) voicing of the consonant, and NOT by the phonetic voicing of the consonant. So, though the difference may have had its origin in the phonetic voicing contrast, it is no longer dependent on the phonetic voicing of the consonants.

The lower F0 is also observed after ALL voiced consonants, i.e. we find the same F0 value after historical /b d/ as we find after /m n/, for instance. This leads us to think that the lower F0 after historical /b d/ did have its origin in the naturally lower F0 after voiced consonants. It's just that the phonetic voicing is no longer needed for the lower F0 in the /b d/ contexts.

So, if we were to consider Afrikaans as a tonal language, then maybe Afrikaans would be a language with F0 effects even though it is a tonal language? That is, we see the effects of voicing on F0 after nasals in Afrikaans, although Afrikaans could now be considered as a language that uses F0 (i.e. tonal) properties to differentiate minimal pairs.

(Example of relevant minimal pair: What used to be [dak] 'roof' and [tak] 'branch', is now [tàk] and [ták].)

[(myl) Neat!

But why would you assume that the size of the effect is constant in Hz rather than being proportional to the basic pitch being modulated? Pitch in music is multiplicative rather than additive; in speech there seems to be an additive as well as a multiplicative component, but in any case it would be odd to characterize (say) the difference between tone 1 (high) and tone 3 (low) in Mandarin Chinese in terms of a difference in F0 alone, rather than a multiplicative scaling or a multiplicative+additive scaling.

And in this case, the effects seem to be almost purely multiplicative — see here.]

Bob Ladd said,

June 6, 2014 @ 3:46 am

@chh,

James Kirby and I are also working on this (James was one of the people Mark talked to at UMass) and should have some results to report on fully voiced vs. voiceless unaspirated stops (negative VOT vs. short-lag VOT) soon. Basically you're right that it's important to compare like with like, but we've found that it's not a simple matter to define likeness for these purposes.

@Andries Coetzee

Why do you assume that you are dealing with an effect of voicing? Mark's graph (and also a 2009 study by Helen Hanson) suggest that the effect is primarily a matter of F0 raising due to voicelessness. If you think about what's happening in Afrikaans in those terms, the paradox you seem to detect goes away – the phonetic effect of voicelessness in tak gets phonologised when the VOT difference between tak and dak is lost.

Hugo Quené said,

June 6, 2014 @ 5:25 am

Nice and clear results, thanks!

On a side note, one could make the size of the plot symbols proportional to (the square of) the count, so as to visually include the information of the counts table in the graph.

James Kirby said,

June 6, 2014 @ 6:14 am

I think it's worth reiterating that prosodic prominence hasn't been controlled for in the current Breakfast Experiment™, which several studies (Kohler 1982, Ladd & Silverman 1984, Hanson 2009) have shown can have a rather large influence on the magnitude of the intrinsic F0 effect (in some cases obliterating it entirely). But there's no doubt that even as a rough and ready measure, this is an incredibly cost-effective technique compared to what is typically undertaken in phonetic studies.

[Could you say more about the references you cite? Kohler 1982 (which I presume is "F0 in the Production of Lenis and Fortis Plosives") shows that F0 perturbation effects exist in German in various different pitch contours, but I don't think that he looks at prominence effects; Ladd & Silverman 1984 (which I presume is "Vowel Intrinsic Pitch in Connected Speech") looked only at vowel effects, not at consonant effects, and found that "the size of the differences between high and low vowel fundamental frequency is somewhat smaller [in paragraph reading] than in carrier sentences". I don't see anything there about prosodic prominence. And I can't find a relevant publication by John Hanson in 2009 at all…]

@Stephen R. Anderson: Two very nice recent studies of intrinsic F0 effects in tone languages include Francis et al 2006 (Francis A. L., Ciocca V., Wong V. K. M., Chan J. K. L. 2006. Is fundamental frequency a cue to aspiration in initial stops? JASA 120(5), 2884–2895) and Chen 2011 (Chen Y. 2011. How does phonology guide phonetics in segment–f0 interaction? JPhon 39: 612-625). As Bob notes above, we're also in the midst of conducting similar studies for a number of languages, both tonal and non-tonal.

@chh: It's not entirely clear if VOT duration itself correlates particularly well with the magnitude of intrinsic F0 effects, or if we should expect it to. Existing studies (many reviewed in Chen 2011) are split on their finding as to whether (voiceless) aspirated stops have greater or lesser F0 perturbation compared to (voiceless) unaspirated stops. This is perhaps not surprising given that the source of intrinsic F0 differences ultimately lies in the configuration of the larynx, particularly the tension of the vocal folds, and the timing of the laryngeal gesture(s) relative to the release of the supraglottal constriction.

Moreover, while these F0 perturbations are clearly physiologically driven at some level, their cross-linguistic patterning suggests that speakers have some degree of control over them: for example, while English 'voiced' (phonetically voiceless unaspirated) /b d g/ don't perturb onset F0 much more than do nasals (see also Hanson 2009, but cf. Mark's findings here), we've found the same pattern in Italian, where (phonologically) voiced stops are phonetically *pre*voiced. All of which is to say that VOT itself is at best just a proxy (and perhaps not a particularly good one) for whatever is going on under the hood.

@Andries Coetzee: there's another discussion here (raised both at UMass and SCIHS) about whether these kinds of situations should be considered 'tone' or 'tonogenesis' at all. I would submit that use of F0 alone does not a tone language make.

Jerry Friedman said,

June 6, 2014 @ 3:40 pm

Are the pronunciations at forvo of any use for this kind of thing?