Consonant effects on F0 in Chinese


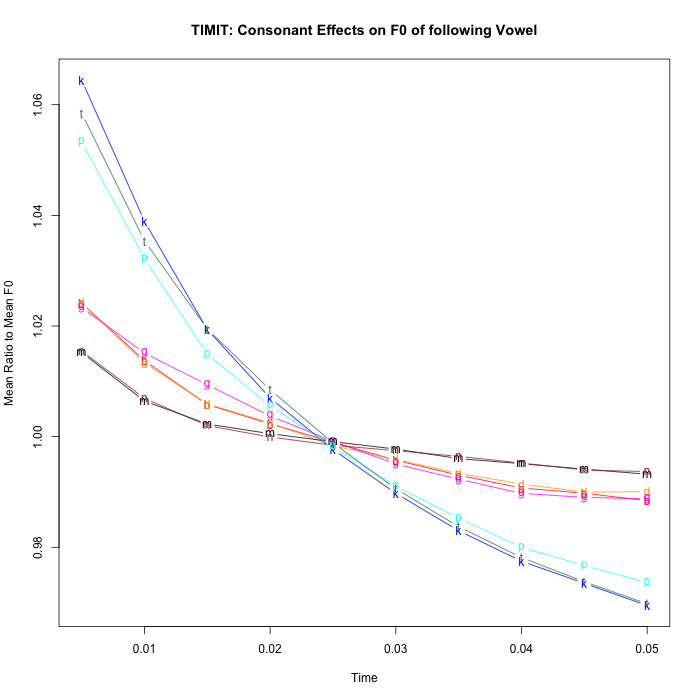

Following up on two earlier Breakfast Experiments™ ("Consonant effects on F0 of following vowels", 6/5/2014; "Consonant effects on F0 are multiplicative", 6/6/2014), here are some semi-comparable measurements of consonant effects on fundamental frequency (F0) in Mandarin Chinese broadcast news speech.

[As I warned potential readers of those earlier posts, this is considerably more wonkish than most LLOG offerings.]

Why do people care about the effects of consonant features on F0? The main reason is that tonogenesis — the historical development of lexical tones — often arises from re-interpretation of "micromelodies" of this kind, typically driven by laryngeal features of consonants such as voiceless vs. voiced (e.g. p,t,k,s vs. b,d,g,z). So it's natural to wonder whether languages where this has already happened, like Mandarin Chinese, retain or suppress such effects.

According to one line of argument, the consonant-induced micromelodies are a natural and more-or-less inevitable consequence of the fact that voice pitch and consonant voicing are implemented by the same laryngeal apparatus, so that F0 differences will always accompany voiced/voiceless distinctions. If some of these tonal differences have been re-interpreted as the primary or only features distinguishing words, with the original consonantal distinctions being lost, this won't have any effect on the F0 consequences of retained or new consonant voicing distinctions.

Another line of argument points out that the historical process that transforms segmental micromelodies into lexical tone must involve an exaggeration of the segmental effects at the same time as the loss of the original consonantal cause. And if the effects can be exaggerated (or at least implemented independent of the original consonantal distinction), maybe they're not so automatic after all, and can be attenuated as well, so as not to confuse the cues for tonal distinctions.

The data for today's experiment came from a collection of 1997 Mandarin Broadcast News Speech (LDC98S73) published by the Linguistic Data Consortium in 1998. The selection process is described as follows, in Jiahong Yuan, Neville Ryant, and Mark Liberman, "Automatic Phonetic Segmentation in Mandarin Chinese: Boundary Models, Glottal Features and Tone", ICASSP 2014:

We extracted the “utterances” (the between-pause units that are time-stamped in the transcripts) from the corpus and listened to all utterances to exclude those with background noise and music. Utterances from speakers whose names were not tagged in the corpus or from speakers with accented speech were also excluded. The final dataset consisted of 7,849 utterances from 20 speakers.

This same collection was used in two recent papers on automatic tone classification: Neville Ryant, Jiahong Yuan, and Mark Liberman, "Mandarin Tone Classification Without Pitch Tracking", ICASSP 2014; and Neville Ryant, Malcolm Slaney, Mark Liberman, Elizabeth Shriberg, and Jiahong Yuan, "Highly Accurate Mandarin Tone Classification In the Absence of Pitch Information", Speech Prosody 2014. The dataset (as used in the three cited papers) is in the queue to be published by the LDC later this year.

In this experiment, I used the training-set part of this collection, comprising 7,549 utterances and 6.05 hours of speech. The translation from Hanzi (Chinese-character) transcripts to pinyin with tone was done automatically for this material, as was the alignment of the tone-pinyin transcription with the audio. Checking against a test corpus of 300 hand-transcribed and hand-aligned utterances, we found 93.1% agreement of alignment within 20 msec., and 1.2% wrong assignment of tones.

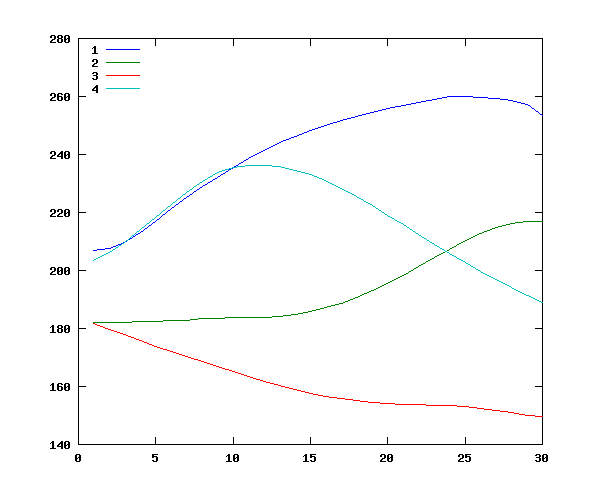

As a reminder, here are average F0 contours for the four main Mandarin Chinese tones, taken from 7,992 syllables produced in sentence context from 8 speakers — the data comes from Jiahong Yuan's PhD dissertation ("Intonation in Mandarin Chinese: Acoustics, perception, and computational modeling", Cornell University 2004).

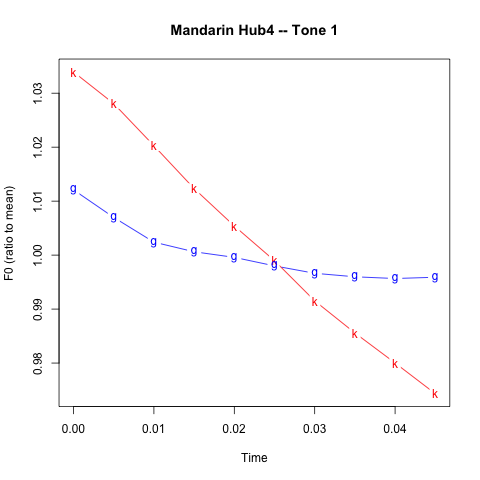

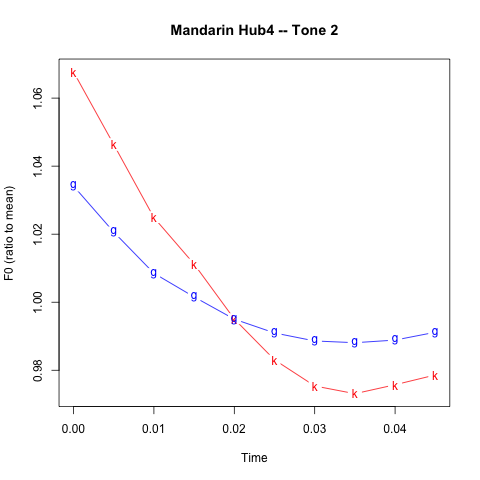

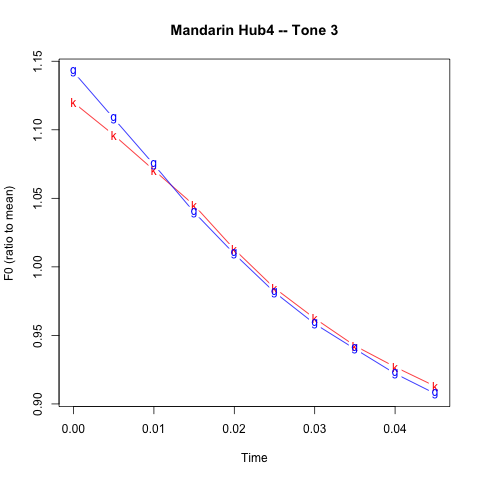

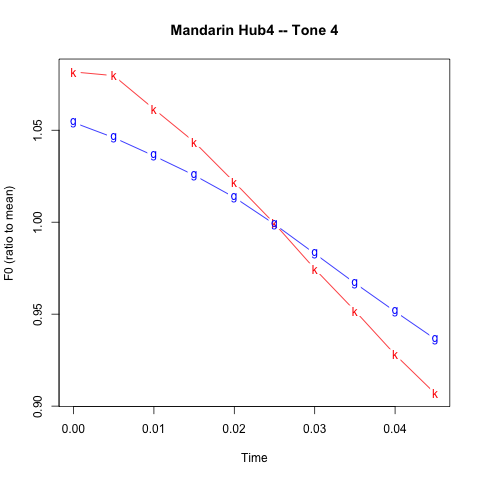

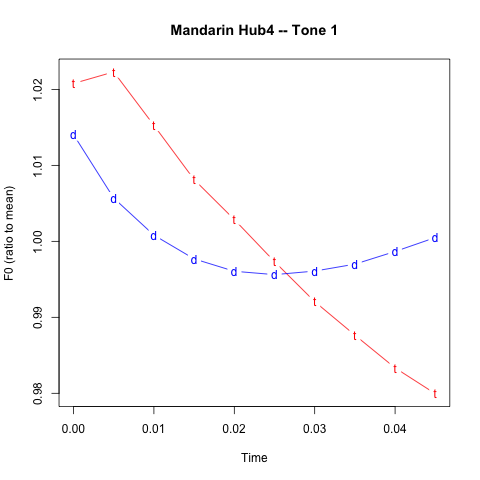

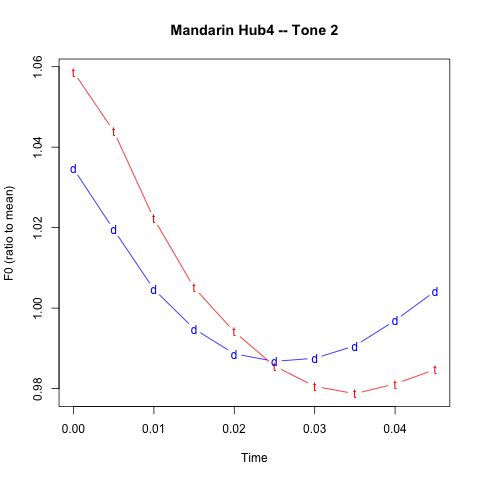

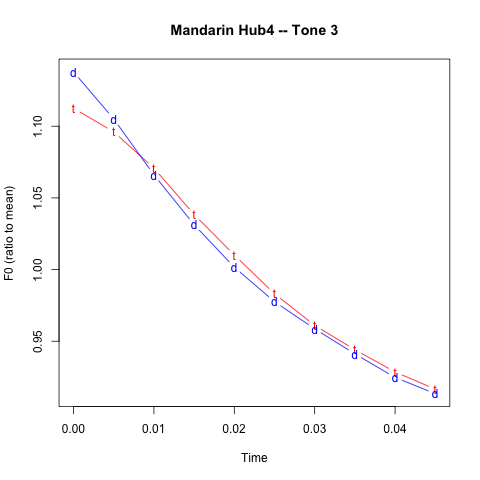

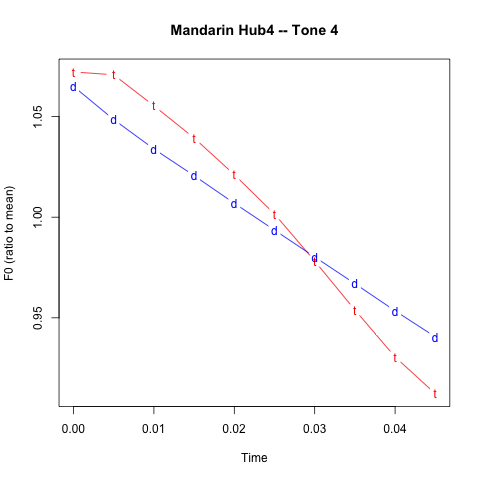

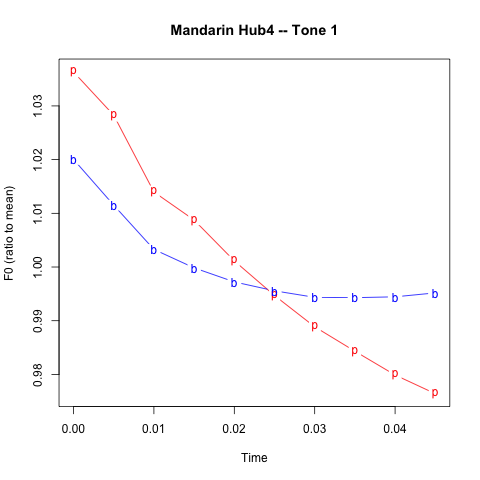

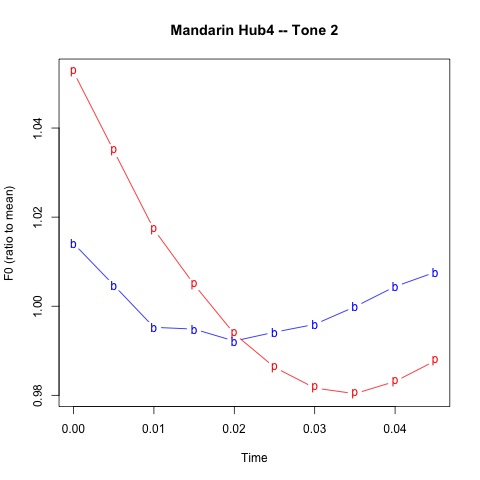

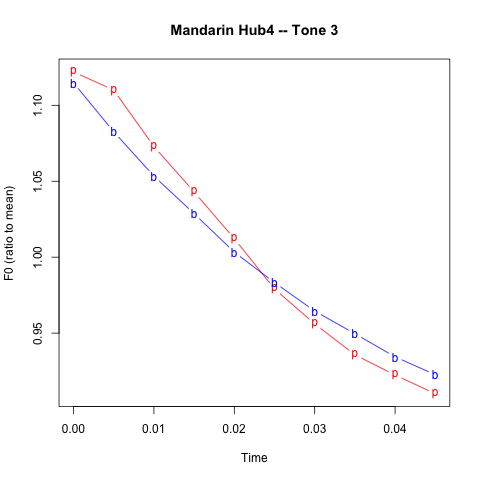

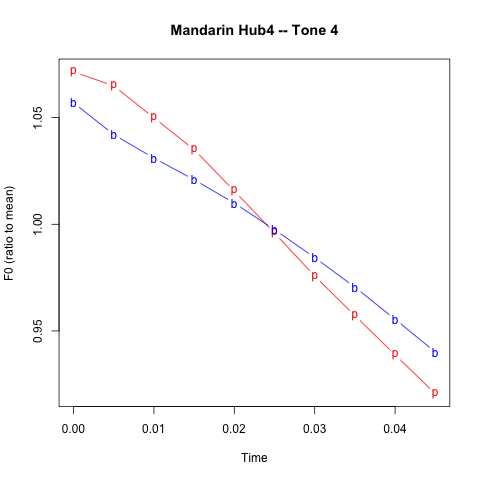

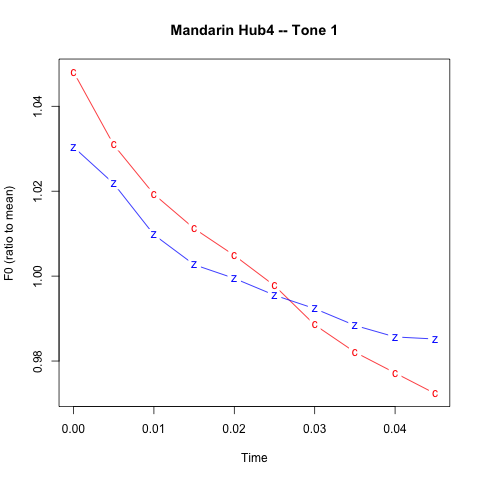

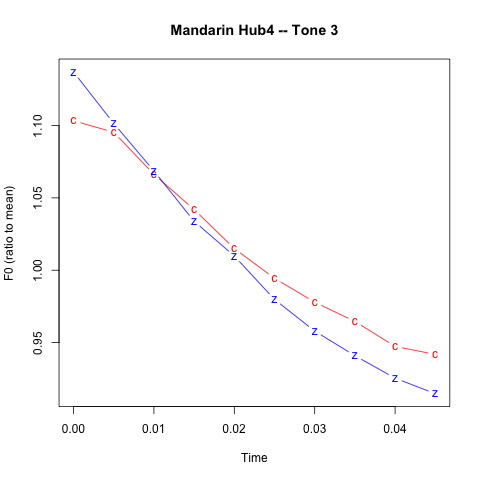

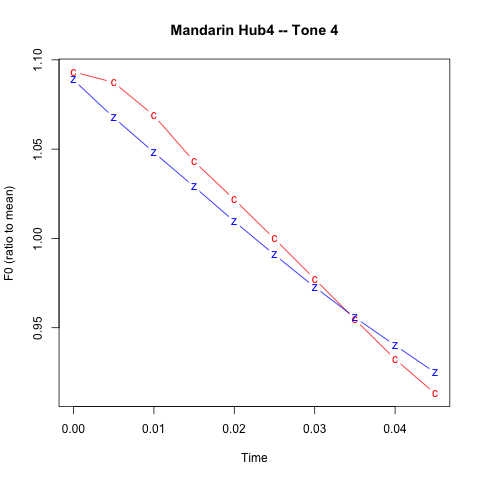

For exploring consonant effects on F0 in Mandarin, I've broken things out by tone as well as by consonant — tones 1, 2, 3, 4 in syllables whose initial consonants are /k/ vs. /g/, /t/ vs. /d/, /p/ vs. /b/, /s/ vs. /z/. As I did in the case of the English data, I've taken the first 50 msec. of F0 estimates from each vowel — a sequence of 10 estimates — and scaled those ten sequential estimates by their average value. Thus a value of 1.05 for the first F0 estimate in a particular vowel means that the value at that point was 5% greater than the average of the values over the first 50 msec. of that vowel. I then averaged these vectors of scaled estimates, element-wise, for all tone-1 syllables beginning with /k/, all tone-2 syllables beginning with /k/, and so forth.

Thus from the start of one particular vowel (in the syllable ke2) we get the sequence of F0 estimates (rounding to one decimal place)

156.2 150.5 148.5 149.6 135 136.7 138.6 139.6 140.3 141.7

These were calculated with a spacing of 5 msec., so the 10 estimates span 50 msec. altogether.

The mean value is 143.7, and so the scaled values are

1.087 1.047 1.033 1.041 0.94 0.952 0.965 0.971 0.977 0.986
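For readers who want to check the arithmetic, here is a minimal Python sketch of the scaling step applied to the ten values quoted above. The function name `scale_by_mean` is my own label for illustration, not the name used in the original script. Because the inputs here are already rounded to one decimal place, the third decimal of some scaled values may differ slightly from the figures in the post.

```python
# F0 estimates (Hz) quoted above for one ke2 vowel onset, spaced 5 msec. apart.
f0 = [156.2, 150.5, 148.5, 149.6, 135.0, 136.7, 138.6, 139.6, 140.3, 141.7]


def scale_by_mean(values):
    """Divide each F0 estimate by the mean of the whole sequence.

    A result of 1.05 at some position means the estimate there was
    5% above the average over the window.
    """
    mean = sum(values) / len(values)
    return [v / mean for v in values]


mean_f0 = sum(f0) / len(f0)
scaled = scale_by_mean(f0)

print(round(mean_f0, 1))
print([round(s, 3) for s in scaled])
```

By construction the scaled values average to exactly 1, which is what makes contours from speakers with different pitch ranges comparable.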

My script repeats that calculation for all 69 instances of tone-2 syllables whose initial is /k/, and averages element-wise, resulting in the sequence

1.068 1.046 1.025 1.011 0.995 0.983 0.975 0.973 0.976 0.979

which is what is graphed using the plotting symbol "k" in the graph in the second column of the first row of the array below:

[A 4×4 array of plots appeared here: one row per consonant pair, one column per tone.]
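The averaging procedure that produces each curve in the array (scale each token's ten F0 estimates by their own mean, then average element-wise across tokens) can be sketched in Python as follows. This is only an illustration under assumptions: the function names are mine, and the second token is invented purely so there is something to average. The first token is the ke2 example quoted earlier.

```python
def scale_by_mean(values):
    """Divide each F0 estimate by the mean of its own sequence."""
    mean = sum(values) / len(values)
    return [v / mean for v in values]


def average_elementwise(vectors):
    """Average equal-length vectors position by position."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def mean_scaled_contour(tokens):
    """Scale every token by its own mean, then average across tokens."""
    return average_elementwise([scale_by_mean(t) for t in tokens])


tokens = [
    # Token 1: the ke2 onset quoted in the post.
    [156.2, 150.5, 148.5, 149.6, 135.0, 136.7, 138.6, 139.6, 140.3, 141.7],
    # Token 2: HYPOTHETICAL values, invented for illustration only.
    [210.0, 206.0, 203.0, 201.0, 199.0, 198.0, 197.0, 197.0, 198.0, 199.0],
]

contour = mean_scaled_contour(tokens)
print([round(c, 3) for c in contour])
```

Scaling before averaging means that each token contributes its shape rather than its absolute pitch, so a high-pitched speaker does not dominate the average.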

In the case of tone 1, the difference between voiced and voiceless initials seems similar, both qualitatively and quantitatively, to the difference in English, as shown in the previous posts:

The pattern in the case of tone 2 is also similar in terms of the consonant voicing effect, modulo the difference caused by a low initial F0 target. The difference seems to be pretty much gone in tone 3, and rather attenuated in tone 4. Why? I don't know — it's worth further investigation, I think.

And that investigation will have to give up on the simple graphical averaging technique I've used so far, and find a way to use regression to explore the relationship between the many relevant factors and the F0 contours of individual syllable onsets.

In particular, the nature of language entails that real-life usage is highly non-orthogonal in various ways, which mere dataset-size doesn't change. Thus (for example) the 69 tone-2 syllables starting with /k/ in the Chinese Broadcast News dataset I used were distributed as

55 k e2

6 k uang2

4 k ao2

1 k ui2

1 k u2

1 k an2

1 k un2

where I would guess that "ke2" was mostly 可 ke3 "can; may; able to; certain(ly); to suit; (particle used for emphasis)" after 3rd-tone sandhi. The 2,317 instances of tone-2 syllables starting with /g/ were distributed as

2155 g uo2

108 g e2

25 g uan2

11 g ang2

6 g an2

6 g u2

6 g uang2

where again I would guess that "guo2" was mostly 国 guo2 "country; state; nation".
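To put a number on the skew, here is a small Python sketch that computes the share of each set taken up by its most frequent syllable, using the counts listed above. The dictionary and function names are my own, chosen for illustration. On these counts, ke2 accounts for roughly 80% of the /k/ tokens and guo2 for roughly 93% of the /g/ tokens.

```python
# Tone-2 syllable counts copied from the lists above.
k_tone2 = {"ke2": 55, "kuang2": 6, "kao2": 4, "kui2": 1,
           "ku2": 1, "kan2": 1, "kun2": 1}
g_tone2 = {"guo2": 2155, "ge2": 108, "guan2": 25, "gang2": 11,
           "gan2": 6, "gu2": 6, "guang2": 6}


def top_share(counts):
    """Return the most frequent syllable and its fraction of all tokens."""
    total = sum(counts.values())
    syllable, n = max(counts.items(), key=lambda item: item[1])
    return syllable, n / total


for label, counts in (("/k/", k_tone2), ("/g/", g_tone2)):
    syllable, share = top_share(counts)
    total = sum(counts.values())
    print(f"{label}: {total} tokens, most frequent {syllable} = {share:.1%}")
```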

Particular words (e.g. "can, may, be able to" vs. "country, state, nation") will have particular distributions of phrasal position, emphasis, etc., which will affect pitch range, vocal effort, etc. A larger dataset will just bring these highly-skewed distributions into better alignment with the overall average for (some particular variety of) the language in question.

Also, the consonantal feature of "voicing" has more than two values, both phonologically (where both voiced and voiceless stops can be aspirated or not, for example) and phonetically (where e.g. English "voiceless" stops have widely varying degrees of relative timing between laryngeal and oral articulations). And it's also worth noting that different genres and styles of speech — here TIMIT sentences read by volunteers in an acoustics laboratory vs. news stories presented on the air by professional announcers — are likely to be different in various ways.

To make sure that the effects we see are the effects we're looking for, and not the result of some correlated variable(s), we need to consider and evaluate many alternative hypotheses.

So on balance I think it's amazing that I got any interpretable results at all.

John Coleman said,

June 12, 2014 @ 6:12 pm

Yay!

David B Solnit said,

June 13, 2014 @ 1:10 am

Going by Pinyin is a tad misleading. The usual description of Mandarin is that initial obstruents contrast in aspiration, not voicing. <b d g> are just the Pinyin way of writing voiceless unaspirated stops, contrasting with aspirated <p t k>; also Pinyin <z> is not the counterpart of <s>; it's an unaspirated dental affricate corresponding to the aspirated one written <c>. That's why the old Wade-Giles transcription used <p> vs <p'>, <t> vs <t'>, etc. Y.R. Chao in his 1968 grammar says that the unaspirated obstruents are voiced in intervocalic position, and I'm sure there have been instrumental studies bearing on this; perhaps others can comment.

So if they're not phonologically fully voiced obstruents, might it be that the pitch effects you find are due to aspiration raising the onset rather than voicing lowering it?

[(myl) In the first place, you're right that it's important to consider the (phonological and phonetic) type of laryngeal features involved, as I noted in the body of the post. But in the second place, the usual conception is exactly that it's consonantal voicelessness that causes raising of F0, more than consonantal voicing causing lowering — the impedance caused by an obstruent does tend to lower F0, but the main effect of consonantal voicing features is to raise F0 temporarily following the restoration of voicing when the vocal folds have been abducted and then adducted for a voiceless consonant, whether aspirated or not.

And in the third place, though Mandarin and English certainly differ in the allophonic distribution of consonant voicing, the difference is not as clear-cut as your description suggests. For example, here's a spectrogram and waveform for the first pinyin 'b' in the dataset:

It's in the "bao1" of

其中 包括 众议院 少 数 派 领袖 格 普 哈 特

which was rendered in pinyin (by Jiahong Yuan's program) as

qi2 zhong1 bao1 kuo4 zhong4 i4 yuan4 shao3 shu4 pai4 ling3 xiu4 ge2 pu3 ha1 te4

The audio is this:

It's clear that the voicing continues pretty much throughout the stop gap in this case, failing only briefly just before the release, probably because of loss of trans-glottal pressure drop rather than because of any laryngeal maneuver. This is what we generally see in English "voiced stops" as well, in cases where they're syllable-initial, precede a vowel, and follow a vowel or nasal or liquid. (Though in both languages, the extent to which voicing continues during the closure is variable, depending on place of articulation, closure duration, vocal effort, and other factors.)

Consonant allophony in Chinese and English is certainly different, but the standard L2 textbook descriptions (generally based on isolated citation forms) are not to be relied on.

(I agree that I should have contrasted 'z' with 'c', not 's', so I've corrected the last row of plots accordingly.)]

David B Solnit said,

June 13, 2014 @ 1:45 pm

Thank you! The graphic nicely shows Chao's "intervocalic" voicing (but really between a nasal and a vowel). Thanks also for the correction on voicelessness raising vs voicing lowering. I was thinking that aspiration might raise pitch even more, or differently, than nonaspirated voicelessness, having in mind some cases where voiceless aspirates and voiceless sonorants condition a tonal reflex different from the one conditioned by voiceless unaspirates.

[(myl) I expect you're right, that voiceless unaspirated and voiceless aspirated consonants have different F0 effects in languages where the distinction exists. And voiced aspirates are probably different yet. There are probably some studies out there…

For a truly intervocalic /b/, here's the next pinyin 'b' in the dataset — 'ba1' in

中国 已经 成为 美国 公司 的 第 八 大 出口 市场

zhong1guo2 i3jing1 cheng2ui2 mei3guo2 gong1 sii1 de0 / di4 ba1 da4 chu1kou3 shiii4chang3

In this case, the 'b' is voiced throughout. But notice that after the silence, the initial /d/ of 'di4' is clearly a voiceless unaspirated stop…]