Non-projective flavor

From a current Starbucks ad, a nice example of a non-projective English sentence:

For those of you who are not up to date on the terminology of computational linguistics, here's an explanation of what "non-projective" means, from Ryan McDonald and Giorgio Satta, "On the Complexity of Non-Projective Data-Driven Dependency Parsing", Proceedings of the 10th Conference on Parsing Technologies, Prague 2007:

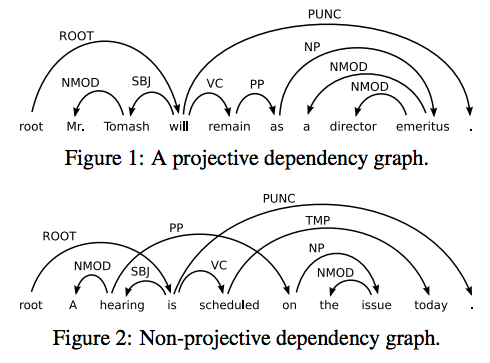

Dependency representations of natural language are a simple yet flexible mechanism for encoding words and their syntactic dependencies through directed graphs. […] Figure 1 gives an example dependency graph for the sentence Mr. Tomash will remain as a director emeritus, which has been extracted from the Penn Treebank. Each edge in this graph represents a single syntactic dependency directed from a word to its modifier. In this representation all edges are labeled with the specific syntactic function of the dependency, e.g., SBJ for subject and NMOD for modifier of a noun. To simplify computation and some important definitions, an artificial token is inserted into the sentence as the left most word and will always represent the root of the dependency graph. […]

The dependency graph in Figure 1 is an example of a nested or projective graph. Under the assumption that the root of the graph is the left most word of the sentence, a projective graph is one where the edges can be drawn in the plane above the sentence with no two edges crossing. Conversely, a non-projective dependency graph does not satisfy this property. Figure 2 gives an example of a nonprojective graph for a sentence that has also been extracted from the Penn Treebank. Non-projectivity arises due to long distance dependencies or in languages with flexible word order. For many languages, a significant portion of sentences require a non-projective dependency analysis. Thus, the ability to learn and infer nonprojective dependency graphs is an important problem in multilingual language processing.

Why is "You can't put flavor into a bean that isn't already there" non-projective? Well, the prepositional phrase "into a bean" is part of the complement of the verb "put", while the relative clause "that isn't already there" modifies the noun "flavor". So the arc connecting "put" to "into" will cross the arc connecting "flavor" to the relative clause.
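The crossing-arc test can be sketched in a few lines of Python. The head assignments below are an illustrative assumption (one plausible dependency analysis, with token 0 as the artificial root and "isn't" taken as the head of the relative clause); they are not taken from a treebank. The function name `crossing_arcs` is likewise made up for this sketch.

```python
from itertools import combinations

# Token 0 is the artificial root. HEADS maps each word index to the index
# of its head; this particular analysis is an assumption for illustration.
WORDS = ["ROOT", "You", "can't", "put", "flavor", "into", "a", "bean",
         "that", "isn't", "already", "there"]
HEADS = {1: 2, 2: 0, 3: 2, 4: 3, 5: 3, 6: 7, 7: 5, 8: 9, 9: 4, 10: 9, 11: 9}

# A reordered variant: "You can't put flavor that isn't already there into a bean"
REORDERED_HEADS = {1: 2, 2: 0, 3: 2, 4: 3, 5: 6, 6: 4, 7: 6, 8: 6,
                   9: 3, 10: 11, 11: 9}


def crossing_arcs(heads):
    """Return every pair of arcs that cross when drawn above the sentence."""
    # Store each arc as (left endpoint, right endpoint), sorted left to right.
    arcs = sorted(tuple(sorted((head, dep))) for dep, head in heads.items())
    # Two arcs cross when exactly one endpoint of the second lies strictly
    # inside the first: a_left < b_left < a_right < b_right.
    return [(a, b) for a, b in combinations(arcs, 2)
            if a[0] < b[0] < a[1] < b[1]]


for (a, b) in crossing_arcs(HEADS):
    print(f"{WORDS[a[0]]}-{WORDS[a[1]]} crosses {WORDS[b[0]]}-{WORDS[b[1]]}")
```

Under this analysis the only crossing is between the arc from "put" to "into" and the arc from "flavor" to the relative clause, and the reordered variant has no crossings at all.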

The unconstrained problem of parsing non-projective sentences is NP-hard, if scoring depends on grandparent or sibling features — which is necessary for good performance. In other words, unconstrained non-projective dependency parsing is impossible in practical terms, for the same reasons that context-sensitive languages are in general algorithmically intractable. So there's been a lot of recent work on how to define what we might call "mildly non-projective" dependency parsers, which can handle things like the Starbucks example, but retain plausible performance. A notable recent example is Emily Pitler et al., "Finding Optimal 1-Endpoint-Crossing Trees", TACL 2013.

Andre B said,

October 15, 2013 @ 9:20 am

Ah – this new terminology is helpful. It sounded so odd to me because I was taking the projective reading (the bean is not already there).

Jerry Friedman said,

October 15, 2013 @ 9:28 am

I'm glad to learn a term today that I didn't know. But how was the term chosen when it seems not to have anything to do with the phenomenon?

[(myl) I believe that the terminology comes from P. Ihm and Y. Lecerf, Eléments pour une grammaire générale des langues projectives, 1963, as cited in David Hays, "Dependency theory: A formalism and some observations", Language 1964:

When such a diagram is accurately constructed, there are no intersections of connection lines with one another or with projection lines […] This attribute, called PROJECTIVITY, is analogous to nondiscontinuity of constituents in immediate-constituent theory.

The Ihm and Lecerf paper seems to be unobtainable, but I'm pretty sure that the term was borrowed from group theory, and specifically from projective geometry, about which Wikipedia says that "the projective group acting on homogeneous coordinates (x0:x1: … :xn) is the underlying group of the geometry".

A clue to the mathematical relationship can be found in Hays's note on the Ihm and Lecerf paper, in this annotated bibliography:

Originally issued as an internal report in April 1960, this paper defines the word-order rule known as "projectivity:" If word A does not depend directly or indirectly on words B, C, then A does not occur between B and C. German separable prefixes are examined, and the consequences of projectivity for parsing algorithms are stated.

This seems analogous to the invariance of incidence structures in projective geometry.
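The word-order rule in Hays's note can also be checked directly. The sketch below rests on one reading of that rule, which is an assumption: for every dependency between a head and a dependent, each word lying between them must depend, directly or indirectly, on the head. The head assignments for the Starbucks sentence are illustrative, and the function names are hypothetical.

```python
# Indices: 0 ROOT, 1 You, 2 can't, 3 put, 4 flavor, 5 into, 6 a, 7 bean,
# 8 that, 9 isn't, 10 already, 11 there. The analysis is an assumption.
HEADS = {1: 2, 2: 0, 3: 2, 4: 3, 5: 3, 6: 7, 7: 5, 8: 9, 9: 4, 10: 9, 11: 9}


def dominated_by(node, ancestor, heads):
    """True if `node` depends directly or indirectly on `ancestor`."""
    while node != 0:          # walk up the tree until the artificial root
        node = heads[node]
        if node == ancestor:
            return True
    return False


def projectivity_violations(heads):
    """List (head, dependent, intruder) triples that break the rule."""
    violations = []
    for dep, head in heads.items():
        left, right = sorted((head, dep))
        for between in range(left + 1, right):
            if not dominated_by(between, head, heads):
                violations.append((head, dep, between))
    return violations


print(projectivity_violations(HEADS))
```

On this reading, the words "into a bean" are the intruders: they sit between "flavor" and the head of its relative clause without depending on "flavor".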

And for an idea of the use of "projective" in areas of group theory near to formal language theory, consider this passage from J. Ahsan & K. Saifullah, "Completely quasi-projective monoids", Semigroup Forum 1989:

]

(Is "not to have" non-projective? I was trying for one in each sentence.)

Jerry Friedman said,

October 15, 2013 @ 9:32 am

Never mind—I don't think "seems not to have" is non-projective.

OrenWithAnE said,

October 15, 2013 @ 10:52 am

It's also interesting that it's hard to come up with a natural sounding version that is projective.

You can't put into a bean flavor that isn't already there?

You can't put flavor that isn't already there into a bean?

Flavor that isn't already there can't be put into a bean?

Into a bean you can't put flavor that isn't already there? [ Yoda works for Starbucks … ]

Ø said,

October 15, 2013 @ 11:30 am

There is projective geometry, and yes that leads to some groups in the mathematical sense. And in another branch of mathematics there are projective objects in a category (for example in the category of modules for a ring, or in the category of "S-systems" in the quote above), and groups can come up there, too. But it's pretty clear to me that these represent two unrelated adoptions of the word "projective" as a mathematical term, and that neither of them has much to do with the use of the same word by some linguists.

Ø said,

October 15, 2013 @ 11:31 am

Hays writes of "projection lines".

D.O. said,

October 15, 2013 @ 11:33 am

Two questions from a member of the audience. First, why is NP-hardness important? After all, it is an asymptotic characteristic, and it is not as if natural language sentences tend to run for an infinite number of words. Second, why in the first example does "director" modify "emeritus" and not the other way around? I understand that pragmatically the most important information is that Mr. Tomash has already retired, and the fact that the ghost-like status he retains is that of a "director" is secondary, but syntactically it seems that the root is "director" who just sort of faded away.

[(myl) I believe that you're right (and the Penn Treebank is wrong) about "director emeritus" — the head is "director", and "emeritus" is a post-modifier.

As for the complexity-theory issues, the problem is not just a theoretical one: even with fast computers, having to evaluate all 20^19=5e+24 non-projective dependency graphs for a 20-word sentence would be unaffordably slow. The restrictions (like "1-endpoint-crossing") allow the problem to be factored in a way that allows local partial solutions to be somewhat efficiently combined into a global solution.]
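To make the arithmetic concrete, here is a small sketch. It assumes the figure 20^19 is the count n^(n-1) of rooted labeled trees on n nodes (Cayley's formula times the n choices of root); that reading is an interpretation, not something stated in the reply. The brute-force counter confirms the formula for small n, where enumeration is still feasible, and the function names are made up for the sketch.

```python
from itertools import product


def reaches_root(node, heads):
    """Follow head links from `node`; False if a cycle is hit first."""
    seen = set()
    while heads[node] != -1:
        if node in seen:
            return False
        seen.add(node)
        node = heads[node]
    return True


def count_rooted_trees(n):
    """Count head assignments over n nodes that form a tree with one root."""
    count = 0
    # Each node picks a head in 0..n-1, or -1 to mark itself as the root.
    for heads in product(range(-1, n), repeat=n):
        if sum(h == -1 for h in heads) != 1:
            continue
        if all(reaches_root(i, heads) for i in range(n)):
            count += 1
    return count


for n in range(1, 6):
    print(n, count_rooted_trees(n), n ** (n - 1))

print(f"{20 ** 19:.2e}")
```

The enumeration visits (n+1)^n candidate assignments, so it already becomes impractical well before n = 20, which is the point of the complexity argument.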

Andrew Bay said,

October 15, 2013 @ 11:55 am

I'm still trying to figure out how to put flavor into the missing or tardy bean.

TR said,

October 15, 2013 @ 12:16 pm

So is "non-projective" synonymous with "containing discontinuous syntactic constituents"?

[(myl) Pretty much — modulo the details of how each phrase is defined, and how various constructions are analyzed.]

reader_not_academe said,

October 15, 2013 @ 3:27 pm

I've had to tie this in with a feature of German that I've been pondering for a while. The two sentences below are equivalent in meaning ("I haven't read the novel you told me about"):

1) Ich habe den Roman, von dem du mir erzählt hast, nicht gelesen.

2) Ich habe den Roman nicht gelesen, von dem du mir erzählt hast.

In 2), the modifier of "Roman" is shifted out of the main sentence's VP, right across a phrase boundary; in 1), it's one of those embedded clauses non-native speakers and interpreters dread. If I'm reading this right, that creates a discontinuous constituent. Now what I find intriguing is that my inner CPU's thermometer is telling me that 2) is the one that's easier to produce as well as to parse for the human brain. That's strangely at odds with the greater computational complexity of parsing it formally.

Bob Ladd said,

October 15, 2013 @ 3:45 pm

@OrenWithAnE: You can't put flavor into a bean if it isn't there?

Rubrick said,

October 15, 2013 @ 4:12 pm

This isn't related to the linguistic point, but I'm guessing you can't put flavor into a bean if it is already there, either. So the meaning boils down to "You can't put flavor into a bean," which isn't so catchy.

[(myl) I'm sorry to say that there are people who put all sorts of flavors into coffee beans all the time: hazelnut, amaretto, irish creme, and worse. I agree that this shouldn't be allowed, but I have to admit that it is.]

Oliver said,

October 15, 2013 @ 4:24 pm

As for the German example, I must say that it is different. The relative clause must always be a part of the noun phrase right before the verb. The English example is strictly speaking ambiguous. The German example isn't.

The split of the original example cannot be made in German. If you want to render it in German, you'll need to use a full dependent clause (", if it isn't already there"), not a relative clause.

Although German has a similar phenomenon, when there are multiple possible nouns in a noun phrase. Like:

Der Hund meiner Tante, den ich erschießen will, sitzt im Garten.

The dog of my aunt, whom I want to shoot dead, is sitting in the garden.

Der Hund meines Onkels, den ich erschießen will, sitzt im Garten.

The dog of my uncle, whom I want to shoot dead, is sitting in the garden.

In the first example grammatical gender makes clear that I want the canine, not the relative dead. But the second example is ambiguous.

reader_not_academe said,

October 15, 2013 @ 4:52 pm

my point with the german example was different; i was only driving at the discontinuous phrase. i think the article's original example is not relevant because of the ambiguity either.

in some contexts this split is possible in german; in some others, it's not. compare these:

A1) Der Hund, den ich streicheln will, sitzt im Auto.

A2) *Der Hund sitzt im Auto, den ich streicheln will.

B1) Ich sehe den Hund, den ich streicheln will, nicht.

B2) Ich sehe den Hund nicht, den ich streicheln will.

A2 is not grammatical, B2 is. the why is interesting, but it's not what i'm after here. what i needed was just something like B2, which has a discontinuous constituent, i.e., it's non-projective.

my point is: it's a very complex task to parse non-projective sentences like B2, if you measure complexity by turing machines. but: my very personal, very subjective impression is that the non-projective sentence is easier to parse, as measured by my brain. this is by no means hard data, and it may be proved false by experiment.

what intrigues me is whether or not the tasks that are hard for computers (turing machines etc.) are the same tasks that are hard for human brains. my very subjective feeling is that they are not. which would be quite intriguing, if it were really true.

[(myl) But as Aravind Joshi has been pointing out for nearly 50 years, the kinds of apparent non-locality/non-projectivity/non-context-freeness in natural language syntax are stereotyped and limited, allowing some simple additions to grammars and parsing algorithms to cope without too much algorithmic pain.]

reader_not_academe said,

October 15, 2013 @ 4:57 pm

(but please, don't shoot the dogs.)

dainichi said,

October 15, 2013 @ 6:57 pm

@reader_not_academe

But (maybe depending on the analysis) your examples 1) and B1) also have discontinuous constituents, namely "habe nicht gelesen" and "sehe nicht". 2) and B2) also have them, but the dependency arcs are shorter. Maybe your brain prefers 2 short-distance discontinuous constituents to 1 long-distance discontinuous constituent.

Nathan Myers said,

October 15, 2013 @ 7:18 pm

I wonder if there is any connection between the awkwardness of expressing the statement without a non-projective construction, and the foolishness of the intended statement. Does non-projectivity mask nonsense? Is masking nonsense non-projectivity's major use?

We should note that aside from the issue of infusing hazelnut &c flavors, it is absolutely routine to remove most of the coffee flavor from one collection of beans, process it, and then put it into an entirely-other collection of beans that previously had their own flavor leached out. This is an essential part of producing "decaf" coffee beans.

Jerry Friedman said,

October 15, 2013 @ 11:53 pm

Would my first attempt at a non-projective question have worked? "Why was a name chosen that has nothing obvious to do with the phenomenon?"

MYL: Thanks for the detailed answer. Projective geometry and monoids seem to specifically not have order, which is essential to grammatical projectivity, so at this point Ø's take on it makes sense to me.

[(myl) On the contrary. As Wikipedia tells us,

In abstract algebra, the free monoid on a set A is the monoid whose elements are all the finite sequences (or strings) of zero or more elements from A. It is usually denoted A∗. The identity element is the unique sequence of zero elements, often called the empty string and denoted by ε or λ, and the monoid operation is string concatenation. The free semigroup on A is the subsemigroup of A∗ containing all elements except the empty string. It is usually denoted A+.

]

How does this work with interjections and vocatives?

"But ah, my foes, and oh, my friends—

It gives a lovely light!"

If that's non-projective, it may be trivially so (though maybe not trivially to computers).

Yoav Goldberg said,

October 16, 2013 @ 2:30 am

Just a small point on the assertion that "unconstrained non-projective dependency parsing is impossible in practical terms" — this assumes that the parsing process is "searching over the space of all possible trees in order to find the one with the absolutely highest score according to some model". I don't think this is a good definition of parsing. Humans most probably don't do that, and there are also quite successful parsing algorithms that don't do that.

NP-hard only matters if you look for some globally optimal structure, but there are many ways around that: what if we are OK with getting a tree which is close to the optimal? Maybe we want to locally score individual actions in the parsing process?

[(myl) Good idea. But figuring out how to "locally score individual actions in the parsing process" in such a way as to be able to combine those local scores to determine a global optimum or at least a good approximation — that's pretty much a definition of "parsing algorithm". That's what the cited papers, and many thousands of others, are all about.]

Bloix said,

October 16, 2013 @ 3:13 am

Maybe it's just me but I find the Starbucks sentence ungrammatical (I 'feel' it as ungrammatical, anyway.) A correct sentence would be "You can't put flavor into a bean if it isn't already there."

But I've got no problem with the lyric from the old America song:

"Oz never did give nothing to the Tin Man / That he didn't, didn't already have."

Yet Another John said,

October 16, 2013 @ 7:27 am

I'll second Ø's intuition (in response to MYL's response to the second comment) that this is only distantly related to the technical modern math senses of "projective" that he cites. I'm familiar with all these senses, but simply the everyday layman sense of "projective" seemed entirely natural to me: these are graphs that "project well" onto the plane in some sense.

Projective geometry is not a branch of group theory, though it is related to group theory, inasmuch as all geometry is (via symmetry groups and other concepts). And the second (category-theoretic) math sense of projective is more directly related to the everyday sense of "project" than to projective geometry, I think (via obscure intuitions about projective limits, which projective objects are supposed to generalize).

[(myl) This is probably right, but much of the early French work on the mathematics of grammars was done by group theorists, as epitomized by M.-P. Schützenberger, who famously helped develop the Chomsky-Schützenberger theorems among other contributions:

Among the many algebraic structures that appear in Schützenberger's works a dominant one is the structure of semigroups. Semigroups appear in his works essentially in two forms: 1) as free monoids, for example in coding and language theories and often in combinatorial designs; 2) as finite semigroups, for example in transition semigroups of automata or in the theory of pseudovarieties for the study of varieties of languages. … Schützenberger's works contributed strongly to giving semigroup theory its letters of nobility by taking it out of its self-contemplation and putting it at work in areas of mainstream mathematics.

People working on formal language theory in the 1960s, especially in France, are sure to have been influenced (and probably taught) by Schützenberger. So I'd reserve judgment on whether the sense of "projective" used in works like this is related (historically or even formally) to the sense of "projective" introduced in the 1960s with respect to formal language theory.]

Jerry Friedman said,

October 16, 2013 @ 11:29 am

Bloix: How would you feel about "You can't put no flavor into a bean that ain't already there"?

Kristina said,

October 16, 2013 @ 11:30 am

It's odd, because I find the sentence grammatical but non-sensical.

For me it parses as if "isn't already there" refers to the bean, and not the flavor (in the bean).

In which case, yes: you cannot put flavor into a non-existing bean. But your point is therefore relevant how….?

Which makes me wonder if perhaps the "into" might not be interfering somewhat. Something must be interfering with the trace, and I'm not sure it's the negation, because the examples in the quotation from the paper work fine.

I wonder if the subset of us for whom this sentence fails have different intuitions on its meaning/grammaticality, or whether this is a significant point. What is the general impression on this sentence? I'm not certain that it is just me. (Particularly as Bloix's suggestion above sounds fine to me; just how is it ungrammatical for us, the subset? I feel like we need a survey up in this joint.)

Kristina said,

October 16, 2013 @ 11:34 am

On second thought, is the problem for me the "that" there?

Mark said,

October 16, 2013 @ 5:24 pm

The sentence is ambiguous between two readings.

1. You can't put flavor into a bean that isn't already in the bean.

2. You can't put flavor into [a bean that is not there/doesn't exist].

Maybe this is clear from the comments, I'm not sure.

The advertiser's intended meaning is (1), I assume, without having followed the ad campaign. I conjecture that the existence of an alternative parse helps to make the tag-line more memorable, and there may even be research showing this. Many advertising tag-lines are obviously intentionally ambiguous (e.g., "fly the American way"), with both interpretations being part of the advertiser's message. It would be interesting to know what happens when, as in the Starbucks case, one interpretation is anomalous, or at least puzzling.

John Birch said,

October 17, 2013 @ 7:44 am

The meaning of the sentence is obvious – but clearly not what was intended. It says that you cannot put flavour into a bean if you do not have the bean.

The problem is that "FLAVO(U)R" and "into a bean" are the wrong way round. Presumably they meant to say that "You cannot put into a bean FLAVOUR that is not already there", which makes sense, though clumsy. Better still might have been "You cannot add flavour to a bean".

To which I would add that a) surely you can add flavours to anything – dip your bean into chocolate and it will taste of chocolate, and b) I hate coffee so ultimately I do not care anyway.

Colin Fine said,

October 17, 2013 @ 7:51 am

@myl, re your reply to Jerry Friedman: I don't see what that has to do with Jerry's point. Nothing about that definition requires ordering, and in any case that is the definition of the free monoid on A, not of monoids in general.

Oliver said,

October 17, 2013 @ 8:49 am

I am afraid that German allows some very hard splitting operations:

DIE KIRSCHEN nehme ich heute, weil mich Freunde besuchen, ALLE

DIE KIRSCHEN nehme ich heute ALLE, weil mich Freunde besuchen.

DIE KIRSCHEN nehme ich ALLE heute, weil mich Freunde besuchen.

DIE KIRSCHEN nehme ALLE heute ich, weil mich Freunde besuchen.

ALL DIE KIRSCHEN nehme heute ich, weil mich Freunde besuchen.

I take all the cherries today, because friends will visit.

chris said,

October 18, 2013 @ 9:17 am

It's odd, because I find the sentence grammatical but non-sensical.

For me it parses as if "isn't already there" refers to the bean, and not the flavor (in the bean).

I interpreted it that way too, but I wonder if that's intentional, if the act of reparsing the slogan somehow makes it more memorable. At least it's got us talking about it…