Annals of overgeneralization


Suppose you heard about a study "showing" that Ivy League students are more socially sensitive than students at public universities or students at private colleges not among the Ancient Eight. You'd be skeptical, I hope.

So you take a look at the study, and discover that the authors — themselves Ivy League grads — did five experiments.

In the first experiment, they chose three Harvard students who exemplify, in their opinion, the best characteristics of that fine institution, and three students from the University of Michigan, again selected to represent the authors' idea of what such students should be like. They then subjected these six students to a battery of tests of empathy and social intelligence, and found that the three Harvard students scored a bit better than the three Michigan students.

The other four experiments were similar. In the second experiment, the authors selected three Princeton students from among a few dozen student-government leaders, and compared them to three selected representatives of the University of Oregon football team, and three (in their opinion characteristic) young people who did not attend college at all. Experiment 3 tested six new students, three from Yale and three from the University of Arizona, again selected to represent the authors' opinion of what such students should be like. Experiment 4 re-used four of the students from Experiment 3, but substituted two new choices from the same pools. And Experiment 5 re-used five of the six students from Experiment 4, substituting for one participant who seemed on reflection not to be quite of the Right Kind.

At this point, you should be saying to yourself, Wait a minute, this is a total crock! Where was it published, in one of those fake take-the-money-and-run open-access journals?

No, the study on which I've based this description was published a few days ago in Science, the flagship journal of the American Association for the Advancement of Science. But I've disguised my description of the conclusions and procedures, not so much to protect the guilty as to get you to engage your critical faculties. The paper compares "literary fiction" to "popular fiction" and non-fiction, not Ivy League students to students at public universities and less prestigious private colleges; and it compares short-term priming effects on readers, not the abilities of group members; but it does base its conclusions on experiments that compared three hand-selected exemplars of each general category. This is a design feature that would never be accepted in a competently-taught undergraduate course.

We're talking about David Comer Kidd and Emanuele Castano, "Reading Literary Fiction Improves Theory of Mind", Science 10/3/2013:

Understanding others’ mental states is a crucial skill that enables the complex social relationships that characterize human societies. Yet little research has investigated what fosters this skill, which is known as Theory of Mind (ToM), in adults. We present five experiments showing that reading literary fiction led to better performance on tests of affective ToM (experiments 1 to 5) and cognitive ToM (experiments 4 and 5) compared with reading nonfiction (experiments 1), popular fiction (experiments 2 to 5), or nothing at all (experiments 2 and 5). Specifically, these results show that reading literary fiction temporarily enhances ToM. More broadly, they suggest that ToM may be influenced by engagement with works of art.

Needless to say, this study has gotten considerable media uptake. But what's the basis of the authors' conclusion that "literary fiction, which we consider to be both writerly and polyphonic, uniquely engages the psychological processes needed to gain access to characters’ subjective experiences"?

Here's their account of the selection of materials (from the Supplementary Materials).

Experiment 1

Six texts (3 fiction, 3 nonfiction) were selected by the authors. Critical to the selection were the criteria that the works of fiction depicted at least two characters and the nonfiction primarily focused on a nonhuman subject. These criteria were used to focus on the effects of reading about individuals presented in literature compared to those of simply reading a well-written text. Two of the texts in the literary fiction condition, “The Runner” by Don DeLillo (38) and “Blind Date” by Lydia Davis (39), were written by contemporary award-winning authors. The third, “Chameleon”, was written by Anton Chekhov (40), an early master of the modern short story. The nonfiction texts were “How the Potato Changed the World” by Charles C. Mann (41), “Bamboo Steps Up” by Cathie Gandel (42), and “The Story of the Most Common Bird in the World” by Rob Dunn (43). Participants in each condition were randomly assigned to read one of the three appropriate texts.

Experiment 2

Excerpts of the first several pages (8-11) of recently published novels were used as stimuli, with the stipulation that excerpts did not end in the middle of a scene or paragraph. In the literary fiction condition, participants read an excerpt from one of three recent finalists for the National Book Award for fiction [The Round House by Louise Erdrich (45), The Tiger’s Wife by Téa Obreht (46), and Salvage the Bones by Jesmyn Ward (47)]. In the popular fiction condition, participants read an excerpt from one of three recent bestsellers on Amazon [Gone Girl by Gillian Flynn (48), The Sins of the Mother by Danielle Steel (49), and Cross Roads by W. Paul Young (50)]. Participants in the control condition read no text.

Experiment 3

Six new texts, 3 in each condition, were used. The stories in the popular fiction condition were selected from an edited anthology of popular fiction (29). They were also chosen to represent a range of genres, including science fiction [Space Jockey by Robert Heinlein], mystery [Too Many Have Lived by Dashiell Hammett] and romance [Lalla by Rosamunde Pilcher]. Stories in the literary fiction condition were selected from a collection of the 2012 winners of the PEN/O. Henry Award for short literary fiction (30). They included Corrie by Alice Munro, Leak by Sam Ruddick, and Nothing Living Lives Alone by Wendell Berry.

Experiment 4

Four of the texts used in Experiment 4 were the same as those used in Experiment 3. Two new texts, Jane by Mary Roberts Rinehart (29, popular fiction) and Uncle Rock by Dagoberto Gilb (30, literary fiction), replaced Lalla (29) and Leak (30) from Experiment 3.

Experiment 5

Five of the texts used in Experiment 5 were the same as those used in Experiment 4, but The Vandercook by Alice Mattinson (30) replaced Nothing Alive Lives Alone by Wendell Berry (30) in the literary fiction condition because it was shorter and so closer in length to the other texts.

It would be inappropriate to conclude anything about Harvard students vs. Michigan students based on tests of three representatives of each set, hand-picked by researchers who admittedly wanted to find a way to support their pre-existing belief that Harvard students are superior. And it's just as inappropriate to conclude anything about literary fiction vs. popular fiction, or literary fiction vs. nonfiction, based on a comparison of a very small number of short excerpts, selected by the researchers to be somehow typical or characteristic of the genre — especially given that the researchers chose the samples in an attempt to get exactly the results that they got.
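To make the sampling problem concrete, here is a minimal simulation sketch. It is not the authors' analysis, and every number in it is an assumption chosen for illustration: two categories of texts whose true average effect is identical, individual texts that differ from one another (`sigma_text`), noisy readers (`sigma_reader`), three texts per category, and 30 readers per text. Each simulated experiment then compares all readers in one category with all readers in the other, treating readers as the only source of variation, and counts how often the difference looks "significant" under a rough normal approximation.

```python
import random
import statistics


def naive_false_positive_rate(sigma_text, sigma_reader=1.0, texts_per_group=3,
                              readers_per_text=30, n_experiments=2000, seed=0):
    """Share of simulated experiments that report a category difference
    when the two categories have exactly the same true mean.

    All parameter values are illustrative assumptions, not estimates
    taken from the paper under discussion.
    """
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_experiments):
        groups = []
        for _group in range(2):
            scores = []
            for _text in range(texts_per_group):
                # Each text has its own idiosyncratic effect on readers.
                text_effect = rng.gauss(0.0, sigma_text)
                scores.extend(text_effect + rng.gauss(0.0, sigma_reader)
                              for _ in range(readers_per_text))
            groups.append(scores)
        a, b = groups
        diff = statistics.fmean(a) - statistics.fmean(b)
        # Naive standard error: readers are treated as the only random factor.
        se = (statistics.variance(a) / len(a)
              + statistics.variance(b) / len(b)) ** 0.5
        if abs(diff) / se > 1.96:  # approximate two-sided 5% threshold
            hits += 1
    return hits / n_experiments


if __name__ == "__main__":
    print("texts vary:    ", naive_false_positive_rate(sigma_text=0.5))
    print("texts all same:", naive_false_positive_rate(sigma_text=0.0))
```

Under these assumptions, the rate of spurious "findings" stays near the nominal 5% only when the texts within a category are interchangeable; once texts differ from one another, a comparison built on three texts per category mostly measures which texts happened to be chosen. Note that the sketch picks texts at random, which is kinder than the hand-selection described above.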

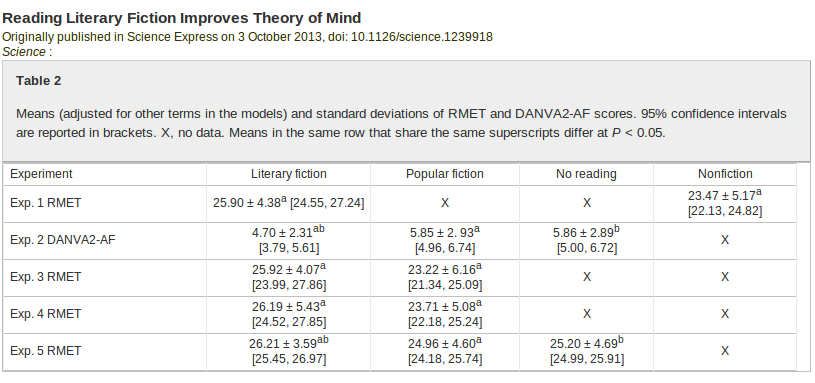

By the way, despite the authors' care in selecting the rabbits to place into their hat, the resulting effects are rather small:

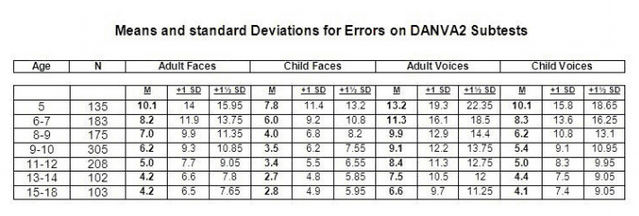

And the subjects' performance, at least in some cases, is overall rather poor; compare these DANVA norms:

Aside from the breathtaking overgeneralization (to all literary fiction vs. all popular fiction or all non-fiction, based on biased selection of a handful of probably atypical exemplars), there are other problems of interpretation.

Perhaps reading a short passage of self-consciously literary fiction reminded the Mechanical Turk test-takers of the experience of being in school, forced to read things they didn't understand and didn't much care for, and this put them in a mindset to attend more dutifully to a subsequent test whose goals also seemed arbitrary and mysterious.

Or maybe the Turkers who read non-fiction, popular fiction, or nothing were distracted by what they'd just read, or by intrusive thoughts from their daily life, whereas those who read passages from literary fiction were lulled by boredom into a state of mental blankness in which their responses to tests like RMET and DANVA were a bit more stimulus-driven.

These theories are not very likely, in my opinion, but I feel that they're just about as well supported by the reported experiments as the authors' conclusions are.

The real question here is why Science chose to publish a study with such obvious methodological flaws. And the answer, alas, is that Science is very good at guessing which papers are going to get lots of press; and that, along with concern for their advertising revenues from purveyors of biomedical research equipment and supplies, seems empirically to be the main motivation behind their editorial decisions.

D-AW said,

October 8, 2013 @ 7:17 am

This sounded fishy when I heard it reported on NPR several days ago. But my second thought was, given the superficial similarity of the conclusion to the positions of (e.g.) Martha Nussbaum or Wayne Booth, why is this so obviously a crock and that not? I guess some would say it is — Richard Posner among them — but others would want to debate their ideas, whereas for this study it's sufficient to show the fatal flaw in the method. It's a question of epistemological claim, or goal, isn't it; i.e. of methodology? The recent debate on "Computational linguistics and literary scholarship" [http://languagelog.ldc.upenn.edu/nll/?p=6968] is still knocking around in my head, I suppose.

[(myl) The conclusion about the benefits of reading literary fiction might well be correct, I have no strong opinion on that point. What's "a crock", in my opinion, is the methodology of the experiments that are supposed to lead to this conclusion.

And the point at issue is not a small or subtle or sophisticated one: if you want to claim that a large and diverse set X in general has more effect on Q than a large and diverse set Y does, you can't call it "science" when you test the hypothesis using three exemplars of set X and three exemplars of set Y, which you've hand-selected so as to demonstrate the effect.]

Daniel Ezra Johnson said,

October 8, 2013 @ 7:37 am

"which papers are going to get lots of press … and … concern for their advertising revenues from purveyors of biomedical research equipment and supplies"

Re: your final link: This applies to Rao et al.'s Science paper about the Indus script? A different segment of the press, at least, and little to do with biomedicine.

[(myl) In the linked post, Richard Sproat writes

I think the conclusion is inescapable that Science is interested primarily if not exclusively in how their publications will play out in the press.

The rest of his post is support for that view.

I don't know of any systematic studies of the relationship between the editorial policies of Science and the business of biomedical research equipment and supplies. But the point is a pretty obvious one.

If you read a typical issue of Science, you'll see that there are many examples of a kind of article that is very unlikely to get any popular-press uptake (e.g. "Structure of the CCR5 Chemokine Receptor–HIV Entry Inhibitor Maraviroc Complex"); and you will also see that nearly all of these articles are in the areas of research of interest to the people who buy things like the "Droplet Digital PCR" hardware whose makers bought the back-cover advertisement in the 9/20 issue, or the "new BD FACSAria™ Fusion cell sorting hardware" whose makers bought a full-page ad opposite the table of contents in the same issue, or the "Roche LightCycler 96" qPCR machine whose makers bought a full-page ad opposite the first page of content, or etc. etc. etc.

So I stand by the observation that Science mostly publishes two kinds of articles: things that are likely to get a lot of press, even (and alas especially) if they're scientifically very weak; and things that are likely to be of interest to the researchers that the companies who buy their (very expensive) advertisements (for very expensive equipment) want to reach.

This is not a big surprise, since it means only that their management is responding to the incentives inherent in its situation; but we might as well recognize the situation for what it is.]

D-AW said,

October 8, 2013 @ 7:56 am

@myl – yes, I completely agree (can't call it anything but bullshit, probably, in the technical sense). It violates the methodological principles of the field, and comes to conclusions that are therefore groundless. But then there's this other field investigating the same (or a similar) question according to its own methodology, which is no more empirical (though the claims would seem to ask for or allow empirical evidence – I think this is partially Posner's point), but whose premises and conclusions are seriously debated over, instead of rejected out of hand. Obviously some questions are more suited to a humanities or a scientific mode of enquiry; these cases of cross over seem to set the stakes out in an interesting way (to me).

Brett said,

October 8, 2013 @ 8:44 am

When I heard about this claim, I was struck by two glaring problems with the methodology and conclusions. The first was the totally inappropriate selection procedure for the passages used in the experiment (as discussed in this post). The second was that the researchers and journalists all seemed to treat the experiment as evidence that reading literary fiction was important to building up a lifelong understanding of human interactions. In fact, the researchers showed a short-term difference in how the subjects responded to theory of mind tests, correlated with what they were assigned to read. There are lots of effects in psychology where a subject's performance on a task can be affected by what they do immediately before. However, in many cases, the effect has also been shown not to be persistent; after a relatively brief period, the differences between experiment and control groups go away. This regression is not universal, but it is fairly common, and it would have made the media claims about the long-term impacts of reading different kinds of material unjustified, even if the study involved here had not had its own serious methodological problems.

KeithB said,

October 8, 2013 @ 10:25 am

Sounds like the recent paper where some folks put *two* chicken nuggets to the test:

http://scienceblogs.com/insolence/2013/10/07/someone-other-than-mike-adams-puts-chicken-mcnuggets-under-the-microscope-hilarity-ensues/

eleventy-one!!! They found fat and blood vessels!

J. W. Brewer said,

October 8, 2013 @ 10:36 am

There's a separate question of how accurate and invulnerable to manipulation the "affective ToM" test is. If you'd phrased the claim as "Ivy League students are better than others at faking some desirable personal quality in contexts where there is apparent careerist value to doing so," perhaps it would be a more intuitively plausible claim (although still, of course, subject in principle to experimental confirmation or disconfirmation). Indeed, because the modern Ivy League admissions process by all reports increasingly selects for people who don't merely have high grades and SAT's but who can also self-present a (possibly rather fictive) persona through their application file as a whole that meets a grownup's idea of what a certain impressive sort of 17 year old ought to be like (perhaps including a "socially sensitive" component it was fortunately unnecessary for me to learn to fake when I was applying to college all those years ago), you'd have a plausible mechanism to explain the intuition.

KeithB said,

October 8, 2013 @ 10:57 am

So, is Ted Cruz an outlier? 8^)

MattF said,

October 8, 2013 @ 11:12 am

Maybe there's two entirely different Science magazines edited by two entirely different groups, and the two magazines are just mushed together before publication. This article, on the junk published in 'open-access' journals, is from the 'good' Science:

http://www.sciencemag.org/content/342/6154/60.full

[(myl) Um, yes. Sort of.]

EricF said,

October 8, 2013 @ 12:19 pm

Back in olden times, when I was an undergrad math major, I had to take Stats 101 for degree requirements and had somehow skipped it until my senior year. I ended up in a class with two other math students and dozens of people not particularly thrilled to be there. I recall the professor had a method called "Proof By Three Examples," which relieved the student of the burden of actually understanding proof methods, but was in his words "good enough for you people." Could it be that paper's authors were my fellow classmates and took the method to heart? (Oh, but I was at Michigan, not Harvard…)

Bill Benzon said,

October 8, 2013 @ 1:27 pm

This reminds me of a bit of evolutionary psychological work on fiction I read some years ago. The authors of the study were interested in verifying some hypothesis about why women read romance novels and, in particular, about why a genre of fan fiction known as 'slash' is popular.

Fan fiction, of course, is fiction fans write using characters from a TV show, movie, novel, or whatever. "Slash" designates the mark of punctuation: /. So, we have Kirk/Spock or Holmes/Watson as kinds of slash. In this fiction normally heterosexual characters are put into homoerotic stories.

So, to test their hypothesis about the appeal of slash the authors selected a commercial novel from the 1950s about a homosexual affair between trapeze artists in a circus. Whatever that is, it's not slash fanfic.

Who did they have read their text? Not readers of slash. No, they chose a group of women who read romance novels together.

Their hypothesis was considered verified.

Jonathan Mayhew said,

October 8, 2013 @ 2:18 pm

I'm struck that press accounts of this type of experiment almost never give information about effect size. It isn't very convincing to say one group of experimental subjects was 2% more likely to do something, but if you say "more likely" that implies a generalization even if the effect is very small.

Also, almost anyone who seriously holds this theory of reading fiction as being good for empathy, understanding of others, ToM, etc. views this as a long-term effect, over years of reading and study, not as a short-term "priming" effect. It's interesting how that gets translated to a lab-testable effect.

[(myl) I want to add that the cited explanation for a (claimed) priming advantage for "literary fiction" over "pop fiction" doesn't make any sense to me. Surely (for example) detective novels lead readers to think about characters' knowledge, belief, and motives at least as much as "literary fiction" does.]

D.O. said,

October 8, 2013 @ 4:02 pm

Reading high quality imaginative literature, what used to be called belles lettres, may be a reward in itself. Or one might not care about it at all. Why it also has to be useful, I have no idea. What if reading the description of the dog on the beach in the third chapter of Ulysses left a lasting impression on me? Should I also have a better understanding of canine psychology as a result?

Arts are more immune to this kind of imposition. Though they have their share too. One, as I recall, was a study of milk production by cows, exposed to music by Mozart vs. jazz. I hope it was a joke.

Brett said,

October 8, 2013 @ 4:59 pm

@D.O.: I don't know about Mozart being even the slightest bit resistant to this kind of thing. The "Mozart effect," in which listening to classical music produces a significant (but relatively small and completely transitory) improvement in subjects' performance on spatiotemporal reasoning tests, is extremely famous. The hype surrounding it has led hordes of American parents to play Mozart for their babies, apparently believing that it will make them smarter later in life.

Adrian Morgan said,

October 8, 2013 @ 6:17 pm

Seriously, what do people who advocate the view that fiction can be divided into "literary" and "non-literary" categories have in mind? No-one has ever explained it to me.

Brett said,

October 8, 2013 @ 6:48 pm

@Adrian Morgan: During the NPR report about this research, they distinguished literary fiction as dealing primarily with understanding the characters; non-literary fiction was supposed to be about plot instead, with the information about the characters more or less explained to the reader. They read examples of a romance novel, which flat-out explained what a particular character was like, and a literary piece, which described a character doing something slightly enigmatic.

Personally, I don't like the terminology, and I don't think the difference between the types of fiction is really all that great. However, there is at least some kind of distinction that (at least some people) have in mind when they discuss this.

Marion Crane said,

October 9, 2013 @ 6:45 am

The Dutch newssite http://www.nu.nl even seems to have interpreted Theory of Mind as 'mindreading' in its headline, which makes it even worse…

[(myl) This is a widespread usage, promoted in this case by Science NOW, "the daily online news service of the journal Science", which used the headline "Want to Read Minds? Read Good Books".]

David J. Littleboy said,

October 9, 2013 @ 7:26 am

This is all rather humorous. Even more so given this scathing review in Slate of Gladwell's latest book: Christopher Chabris, "The Trouble With Malcolm Gladwell", 10/8/2013.

It seems C. Chabris (and Steven Pinker before him in the "Igon Values" article) are holding poor Mr. Gladwell to a higher standard than Science holds their authors.

Jerry Friedman said,

October 9, 2013 @ 9:11 am

Adrian Morgan: I actually think it's a bright spot of this study that the authors seem to think of literary fiction as one of the categories of fiction instead of as the default kind, with only sf, mysteries, romance novels, etc., being genres. (Cf. "language" and "dialect".)

To answer your question along the same lines as Brett's more specific answer, I was once in a writer's group and was writing mostly science fiction. A member of the group told me that she had trouble with my writing and some other members' because nothing was interesting in fiction but the characters and their relationships. I'd say that's a statement about the core of literary fiction, with the proviso that in it social commentary can also be found interesting, and (especially in the higher-brow reaches?) metafiction can be too.

But speaking of higher, everything that rises must converge, and the genres meet at the top. Many of the best writers of literary fiction are interested in things other than characters, and even try their hand at the "paraliterary" genres, while many of the best paraliterary writers are very interested in characters and their relationships and even try their hand at literary and other genres.

All subject to correction by those who know more.

Jerry Friedman said,

October 9, 2013 @ 10:09 am

I should also have mentioned that literary readers and writers can be interested in style in and of itself.

Brett's comment probably answers MYL's question about why the study authors think literary fiction prompts "theory of mind" skill better than mysteries. The authors may believe literary fiction presents information about the characters' minds more realistically and more subtly than mysteries; the subtlety demands more engagement from the reader.

Larry K. Andrews said,

October 9, 2013 @ 10:28 am

When subjects are selected by the investigators the results are predictable.

Neal Goldfarb said,

October 9, 2013 @ 10:43 am

Among the courses taught by one of the coauthors of the paper are (1) Data Analysis and (2) Research Methods.

[(myl) Perhaps you've left out the subtitles: "Data Analysis: Getting The Results You Want", and "Research Methods: How to Prove Scientifically That Your Prejudices Are Valid".]

Suburbanbanshee said,

October 9, 2013 @ 12:24 pm

How about "Mechanical Turk: Keeping Your Eyelids Open at 5 AM While Attempting to Make Money Before All the Turk Jobs Are Taken"?

JR said,

October 10, 2013 @ 12:44 am

I only read literary fiction, but from what I remember when I was young, I’d say non-literary fiction has as its main goal entertainment.

Agatha Christie detective novels only make you question who might be the murderer and why. They are fun novels. But you don’t stop and wonder what is going on and why people are saying and doing the things they do. Unlike in the detective novels of Rubem Fonseca, such as High Art, where there are numerous discussions about hermeneutics—why a discussion of hermeneutics in a detective novel?!

I’ve only read one science fiction novel, by Asimov, and I am guessing there is a similar difference going on compared to the novels of Kobo Abe, such as Inter Ice Age 4.

And as others have mentioned the importance of plot in non-literary fiction, one could go even further and say that much of modern literary fiction just has no use for plot or that it jokes and plays with it, which is not allowed by popular fiction, which has entertainment as its goal. So, early Peter Handke novels have a plot, but that is not at all what his novels are about—the plot is useless and meaningless. Or take the fun plot in the first and third parts of Roberto Bolaño’s Savage Detectives—lots of fun, but why? They seem to not be doing anything!

So, again, big questions of “why?” in literary fiction, whereas in non-literary fiction, things are much more straight-forward.

Brett said,

October 10, 2013 @ 11:01 am

@JR: I'm confident the study's authors would agree with everything you say.

Random DriveBy said,

October 10, 2013 @ 12:06 pm

@JR: so you read lit-fic to be bored? :-P

Jerry Friedman said,

October 10, 2013 @ 1:52 pm

Or to put it another way, big questions of "why" and playing with plot aren't entertaining?

Brett: I disagree. JR sees the detective genre as containing literary fiction (Fonseca) and the same with the sf genre (Abe, who maybe I should read), whereas AFAICT the study authors seem to think such things don't exist.

Also, I suspect that some of the books JR likes that joke and play with plot do the same with character, and aren't the kind of thing that Kidd and Castano expect will improve theory-of-mind skills.

However, JR, if you read some more science fiction, you'll see that plotless stories are allowed on occasion. Even Asimov wrote a few. So are occasional discussions of hermeneutics, as in some of Samuel R. Delany's work. (There's one in Gene Wolfe's Book of the New Sun, too, but as the discussion is based on Dante and doesn't make you wonder what it's doing there, you might not like it as much as you like some others.)

@WriteJohnWrite said,

October 10, 2013 @ 4:26 pm

I agree that certain works of fiction make it easier for us to identify with other people. But more generic works of fiction can do this as well, as can movies and TV shows and paintings and works of sociology and anthropology. Some help us expand our sense of who "we" are. Some don't. But if your goal is to be more sociable, hang out with people, not books (but books too).

thecynicalromantic said,

October 10, 2013 @ 6:33 pm

Oh good, I was hoping someone would take a proper critical look at this study. I've been giving the headlines the side-eye for days wondering what they meant by "literary fiction"; turns out it's even worse than I suspected.

I despise the term "literary fiction" and its pretensions towards being a discrete category from "popular" or "genre" fiction. If I wrote a book and someone asked me what kind of books I write and I said "Good ones" or "Deep ones" everyone would rightly think I was being insufferable. But apparently I can decide to write "literary fiction" by using certain tropes or devices the same way I can decide to write "fantasy" by putting a dragon in my story, and pretend that my stuff is therefore automatically better, look, we've said it's "literary." And if I put a dragon AND a "literary device" in my story then everything gets confused and people start making weird theories and arguments to decide whether literary fantasy is a part of the fantasy genre or a type of literary fiction, because they're all hung up on the two being separate.

Someday I am going to invent my own genre and call it "masterpieces." Every book written in this genre, using whatever set of tropes or devices it turns out to be, is therefore a "masterpiece," no matter how terrible the story.

At this point I tend to react to people saying "I read literary fiction" (or, worse, "I write literary fiction") about the same way I react to people who say things like "I'm really a nice guy" or "I'm not racist, but."

That said, I strongly believe one can learn empathy from reading good books. The books that taught me the most about empathy when I was growing up were about girls becoming knights and sorceresses and I have never once seen them shelved in the "literary fiction" section of any bookstore or library.

JR said,

October 10, 2013 @ 9:53 pm

@Jerry: Yes, I agree that the authors of the article seem to have a concept of literary fiction based on Victorian novels. Early Peter Handke and Bolaño's works certainly have no interest in characters and the nuances of their relations with other characters. (Which is not to say that either writer does not create some memorable characters; they do.)

Also, yes, as I admitted, I've read little SF, so maybe I should read some of what you mentioned. Still, when one says "typical Hollywood movie," most people know what that means, even though some, like the Matrix, may be more exploratory than the most typical of Hollywood movies.

@thecynicalromantic: "But apparently I can decide to write "literary fiction" by using certain tropes or devices." Can you give some examples of these tropes and devices and authors who use them in an "insufferable" way?

JR said,

October 10, 2013 @ 10:03 pm

Btw, my recent comment about SF and Hollywood movies was more of a reply to Adrian's original question. Again, I am not qualified to speak about SF.

David Golumbia said,

October 11, 2013 @ 8:45 am

to return to the topic of an earlier discussion, yet another example of interdisciplinarity that does not respect the disciplines into which it reaches. I can't speak to its bizarre and utter failure to construct a valid experiment on either structural (as MYL and several commentators mention) or statistical terms, given the researchers' home discipline of psychology, which should teach those things (let alone that one of the co-authors apparently teaches research methods); but the bizarre distinction between "literary" and "non-literary" fiction (and the inherent presumption that these form two, relatively homogeneous, categories) is not one that current literary scholarship would support, in any form, let alone the elitist form the authors give it. It's no surprise they turned to prizes rather than scholarship to set up the distinction, as I can only think of places where scholars would argue against rather than for it.

Jerry Friedman said,

October 11, 2013 @ 12:08 pm

JR: Indeed, typical science fiction does have a rather Victorian (or Homeric) idea of plot and character, which doesn't prevent me from enjoying it. If you want recommendations for the atypical parts that you might particularly enjoy, I hesitate because I haven't read any of the writers you named and because this isn't the place. However, feel free to e-mail me at jerry_friedman@yahoo.com. There are also forums where you could get a wider spectrum of better-informed answers, such as rec.arts.sf.written, if you say what you like and ask what sf you might like.

David Golumbia: I was pleased to see your comment that current literary scholarship wouldn't support the elitist distinctions of genre that the paper under discussion was based on.

While agreeing with your criticisms and everybody's of that paper, I should say a word for it. I've long thought, and have said in a comment here, that experimental psychology could study questions about the effect of writing on readers. Are there measurable differences in effect or response between prize-winning fiction and best-sellers that aren't nominated for prizes? Between works canonized in freshman English and those that aren't? What happens to reader responses, or to effects on readers, if we change one word of a great poem?

I don't hear much about such studies, but then I wouldn't. Do they exist? If they're rare, then I'd give Kidd and Castano some credit for just thinking in those terms, and I think it would be interesting if their work leads to work along similar lines without the disastrous flaws of this paper. (As a science-fiction reader, I can see an objection, though: what if somebody comes up with a formula for enjoyable fiction for certain readers?)

Science, My Aunt Fanny - The Horn Book said,

October 11, 2013 @ 3:31 pm

[…] You might guess from that question that I am not the world's heaviest lifter of "literary fiction," and am not even sure I know it when I see it. The New York Times recently reported on a study published in Science which purported to suggest that reading "literary fiction" upped a person's empathy quotient where reading "popular fiction" (and nonfiction) did not. It sounds fishy (these guys think so too). […]

JR said,

October 11, 2013 @ 9:30 pm

In terms of literary studies, there have certainly been discussions of different types of writing, such as Roland Barthes's writerly texts versus readerly texts, or Schiller's naïve versus sentimental poetry. Also, there still are notions of canons, and reading lists for graduate students are created largely based on some criteria that have to do with some concept of literariness.

However, judging between literary and non-literary fiction is not our job. That's the job of people who write book reviews for newspapers or on Amazon or wherever. And what is considered the proper object of study has certainly changed radically in the last few decades, so I agree with David that it is a bit odd to suddenly now talk about an obvious distinction between literary and non-literary fiction.

Still, behind closed doors, I'd say most do still hold a notion that there is fiction that is non-literary. Ask a Brazilian literature student about Paulo Coelho, and you will most likely get at least a rolling of eyes. You might get a similar response from Spanish students about authors like Isabel Allende, who, unlike Coelho, is on graduate student reading lists. I said above that I only read "literary fiction," but in terms of music, it's a totally different story, so I'm not sure if calling it elitist is quite right. Also, I am now studying travel literature, which had never been considered literature until recently. That's not pretentious or elitist, even though I do not want to read Harry Potter–the first book was enough for me to never want to read the rest, sorry.

I’ve got your missing links right here (12 October 2013) – Phenomena: Not Exactly Rocket Science said,

October 12, 2013 @ 11:00 am

[…] Remember that study about literary fiction, which was published in Science. Language Log absolutely savages it. […]

thecynicalromantic said,

October 12, 2013 @ 5:52 pm

JR: Tropes and devices I have heard described as hallmarks of "literary fiction" just within the past week include: The main character doesn't get what they're searching for (the full version of this comment was "Every plot ever: Someone is looking for something. Commercial version: They get it. Literary version: They don't get it"). Unreliable narrators. Unlikeable protagonists. English professors who fall in love with a quirky student and have deep thoughts about cheating on their wife. Annie Neugebauer gives a list of seven completely different things that can make something "literary fiction" here: http://annieneugebauer.com/2012/05/07/what-is-literary-fiction/ . All of the things she helpfully identifies as "myths" in the beginning are also things I have heard people genuinely mean when they say "literary fiction," sometimes including self-identified "literary fiction" authors. And when you ask editors of "literary fiction" magazines what "literary fiction" is, you get an entirely different set of definitions: http://www.writing-world.com/fiction/literary.shtml, often explicitly including value determinations like "great writing" or "originality".

All put together, it all comes off as a way to pretend that writing is inherently original if the protagonist doesn't get what they want, or that any work in epistolary form is deeper and more challenging than work that's not, or that having an unlikeable protagonist automatically confers literary merit. Or, most stupidly, the idea that fantasy and science fiction, being genres, cannot be original. It falls apart even worse when you start trying to compare modern "literary versus commercial" fiction to things like school reading lists and the classic Western canon, where many works of "great literature" have strong plots, or happy endings, or magic. Many of the biggest names in classic Western great literature were popular writers at the time (Shakespeare, Dickens, Melville).

In other words, it's a deliberately confusing term trying to cast certain types of story as more inherently literature-y than others, without doing the hard work of evaluating each individual story's actual depth and mastery of the many possible aspects of literature.

David Golumbia said,

October 12, 2013 @ 10:53 pm

@JR, I'm not clear if you are an English professor or not, and I would not invoke professional status except that the question being raised is precisely whether this work engages with the active profession as it is currently practiced. As a practicing English professor for almost two decades, I will repeat what I said before: the "literary/non-literary" distinction as these authors invoke it does not exist in current or recent scholarly work. It does not map onto Barthes's distinction between "readerly" and "writerly"–if it did, these authors would have used Barthes's favorite Alain Robbe-Grillet as their "literary" texts to generate "empathy"(!). Whether his distinction is valid or not, Schiller's work is over 200 years old, so not in itself evidence of current scholarship. And the canonical/non-canonical distinction, which you portray as far less controversial and far more set in stone than have the many professors I've worked with, itself will not get these authors what they want. If they mean "canonical" by their term "literary," that would mean they should have chosen canonical contemporary texts like Finnegans Wake, A Clockwork Orange, Gravity's Rainbow, or the works of Martin Amis, none of which display the kind of empathic characterization these researchers deliberately pick out. I'd wager many readers would feel far more empathic to their fellow humans having spent a half-hour with Gone Girl than with the Wake or Martin Amis. Or Proust, or Herman Melville, or Thomas Mann, or Christopher Marlowe, or any number of other figures who occur on almost anyone's version of the relevant historical canon.

JR said,

October 12, 2013 @ 11:42 pm

@David: Yes, I am aware when Schiller wrote. And I said I agreed with you about current scholarship.

JR said,

October 12, 2013 @ 11:50 pm

@David: It is also amusing that you were the one accusing others of elitism, but then you brandish your professional credentials against me as part of your argument.

Did you notice, btw, that I only mentioned two English-language writers, Asimov and Agatha Christie? All the rest were foreign authors that I read in the original language. Make of that what you will.

Adrian Morgan said,

October 13, 2013 @ 9:50 am

Thanks for the responses to my question about defining literary fiction. I can't say the discussion has altered any of my impressions (e.g. that literary and non-literary fiction do not exist as categories), but it does perhaps clarify the questions.

A metaphor that works for me is that a work of fiction is like a house, where plot corresponds to the architecture (walls, floor, ceiling, etc), while allegedly "literary" qualities, such as the invitation to reflect meaningfully upon the characters, correspond to the furnishings (chairs, cupboards, curtains). The ideal house — and the ideal fiction — is adequately supplied with both.

One can imagine laying out all works of literature on a multidimensional graph, with "importance of plot" as one axis, "importance of reader introspection" as another axis, and a multitude of other axes that one can define arbitrarily. These axes are more-or-less orthogonal, which is why literary and non-literary fiction cannot exist as categories. They might at best conceivably exist as idealisations, comparable to a crippled version of phonetics in which [u] and [a] are the only cardinal vowels.

I wouldn't waste my energy — as The Cynical Romantic appears to — on anger at people who think fantasy and literality are mutually exclusive. My impression is these people are so rare, even in circles where literature is most passionately discussed, that it is easier simply to dismiss them. (Indeed, one of the endearing qualities of fantasy is its capacity to build empathetic bridges to inhabitants of wholly fictional worlds.)

Jerry Friedman said,

October 13, 2013 @ 8:35 pm

David Golumbia: Kidd and Castano's literary fiction may not map directly onto Barthes's "writerly", but the study does contain the phrase "literary fiction, which we consider to be both writerly and polyphonic".

D-AW said,

October 13, 2013 @ 9:22 pm

@those on the interdisciplinarity discussion:

Note how the brief gesture to Barthes, Bakhtin and Bruner in the opening (w/ acknowledgement of Marzoni in the footnote) resembles the empty gesture towards Aristotle at the top of the Latent Personas in Film paper. This seems to be a reflex among those wanting to write about literature who have no training or education in the discipline. There is more than one methodology being violated here.

JR said,

October 13, 2013 @ 9:32 pm

@Jerry: Thank you. I mentioned only two examples of literary scholarship involved in some type of evaluation of different types of literature. And, yes, I never said it was the same as what this study does. You point out another example, Bakhtin's notion that certain writings exhibit polyphony, while others do not. One could also add the idea of écriture féminine, which only some dozen authors exhibit.

So I will take back my earlier comment somewhat: Schiller, Barthes, Bakhtin, and the French Feminists are all still discussed in current scholarship. Does that mean current scholarship spends its time dividing literature according to Schiller's naïve versus sentimental distinction? No, of course not. But German scholars certainly do continue to read and discuss Schiller's 200-year-old work.

Nick Lamb said,

October 15, 2013 @ 11:45 am

Hmm, perhaps Mark has delivered enough of a kicking already, but who decided that picking various stories from a dusty old copy of "Popular Fiction: An Anthology" was a fair or even useful comparison to a bunch of award-winning short literary fiction from 2012?

Make no mistake, "Popular Fiction: An Anthology" isn't an awards collection, or a "best of" except in the sense that its editor felt the short stories selected illustrated points about genre fiction generally and the specific genres particularly. And these aren't recent works: the Heinlein is from the 1940s, the detective story I think dates to the 1930s, and even the romance story, which is chronologically newer, seems to me to be aimed at women my grandmother's age.

Finally though, a thought about how to fix this (perhaps off-topic on LL but it can't hurt). Maybe all but a handful of specialist journals covering experimental science should insist on accepting or declining experiments at the design stage instead of finished papers. The experimental design would be submitted, modified to appease the journal's reviewers as necessary, approved and then "locked in". Then you can run the experiment, maybe it has exciting results and maybe it doesn't, the usual peer review process follows for the remainder of the paper. It seems to me this would eliminate a lot of the cherry-picking that goes on today both by experimenters and journals. Obviously there would still be outright fraud, but it feels intuitively as if fraud is a smaller (though serious) problem for the discipline.

David Marjanović said,

October 16, 2013 @ 1:51 pm

Indeed, and they've published similarly flawed stuff in other fields before (I vividly remember "Plucking the feathered dinosaur" from 1997). However, I disagree with your answer to that question: as a paleontologist, I find this attempt to answer it much more convincing. It's not about the money, it's about the impact factor, where Science keeps being number two.

Bill Benzon said,

October 17, 2013 @ 11:04 am

I just looked at the letters the NYTimes has published in response to an op-ed on this work. Not a hint of the methodological problems:

http://www.nytimes.com/2013/10/10/opinion/does-literary-fiction-teach-empathy.html?src=recg

Fictional Science | Book View Cafe Blog said,

October 24, 2013 @ 1:02 am

[…] linguist Mark Liberman, who is brilliant at dissecting popular but bad scientific studies, cut it to ribbons on Language Log, validating my gut feeling and demonstrating why the study didn't […]

A false sense of authority (or, the problem with LifeHacker) : The Writing Center said,

October 24, 2013 @ 4:54 am

[…] For example, see Read literary fiction before dates or meetings for social success, and then read Annals of overgeneralization at Language Log. There's also the case of Why liars tend to say "I" less often, […]

Raghu said,

November 6, 2013 @ 4:21 pm

I believed the study concluded reading fiction leads to more empathy.

But the author of the study never concluded that the empathy would lead to sympathy, which is what you concluded (my understanding).

"Suppose you heard about a study "showing" that Ivy League students are more socially sensitive than students at public universities or students at private colleges not among the Ancient Eight. You'd be skeptical, I hope."

Your at-a-glance guide to psychology in 2013 « RoyMogg's Blog said,

December 25, 2013 @ 9:03 am

[…] fiction boosts your empathy skills, but only if it's literary fiction, a study claimed. Not everyone was impressed. The purpose of Obama's BRAIN initiative became clearer thanks to publication of an interim […]

DrA said,

October 6, 2014 @ 11:26 pm

Did the researchers report that the experimental groups did not differ on pre-tests of the ToM measures? I would hope so, given its publication in Science. But if not, one can't conclude that differences between the groups on post-test are due to the experimental treatment. By happenstance, the Literary Fiction group could have scored higher on pre-test, thus confounding the results.