It's worse than you thought


The most recent xkcd:

Mouseover title: "Collaborative editing can quickly become a textual rap battle fought with increasingly convoluted invocations of U+202a to U+202e."

What convolutions are really available? The Wikipedia entry on Bi-directional text says:

[T]he Unicode standard provides foundations for complete BiDi support, with detailed rules as to how mixtures of left-to-right and right-to-left scripts are to be encoded and displayed.

In Unicode encoding, all non-punctuation characters are stored in writing order. This means that the writing direction of characters is stored within the characters. If this is the case, the character is called "strong". Punctuation characters however, can appear in both LTR and RTL scripts. They are called "weak" characters because they do not contain any directional information. So it is up to the software to decide in which direction these "weak" characters will be placed. Sometimes (in mixed-directions text) this leads to display errors, caused by the BiDi-algorithm that runs through the text and identifies LTR and RTL strong characters and assigns a direction to weak characters, according to the algorithm's rules.

In the algorithm, each sequence of concatenated strong characters is called a "run". A weak character that is located between two strong characters with the same orientation will inherit their orientation. A weak character that is located between two strong characters with a different writing direction, will inherit the main context's writing direction (in an LTR document the character will become LTR, in an RTL document, it will become RTL). If a "weak" character is followed by another "weak" character, the algorithm will look at the first neighbouring "strong" character. Sometimes this leads to unintentional display errors. These errors are corrected or prevented with "pseudo-strong" characters. Such Unicode control characters are called marks. The mark U+200E (left-to-right mark) or U+200F (right-to-left mark) is to be inserted into a location to make an enclosed weak character inherit its writing direction.

This suggests that the text-direction "marks" only affect the ordering of "weak" characters. But this page on "Understanding Bidirectional Text" tells a more complex story: In addition to the "implicit markers" U+200E and U+200F, Unicode has two kinds of "explicit" text-direction markers, the "embeds" (U+202A and U+202B) and the "overrides" (U+202D and U+202E). These must be canceled by the "Pop-Directional-Formatting" (PDF) character U+202C.
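As a quick cross-check of those code points, here is a minimal Python sketch that asks the standard `unicodedata` module for the bidirectional class and name of each one. The list `BIDI_CONTROLS` and the helper `describe` are made-up names for illustration; the output depends on the Unicode database bundled with your Python.

```python
import unicodedata

# Code points named in the post: the two implicit marks, the two embeds,
# PDF, and the two overrides.
BIDI_CONTROLS = [0x200E, 0x200F, 0x202A, 0x202B, 0x202C, 0x202D, 0x202E]


def describe(cp):
    """Return (label, bidi class, character name) for a code point."""
    ch = chr(cp)
    return (f"U+{cp:04X}", unicodedata.bidirectional(ch), unicodedata.name(ch))


for cp in BIDI_CONTROLS:
    print(*describe(cp))
```

The marks report the ordinary strong classes `L` and `R`, while the embeds, overrides and PDF each report a class of their own (`LRE`, `RLE`, `LRO`, `RLO`, `PDF`).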

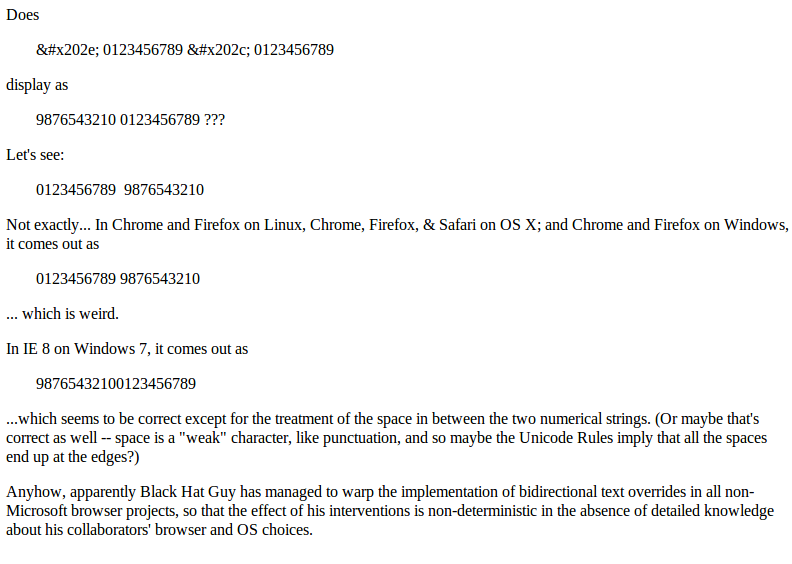

In the comic, Black Hat Guy uses U+202E, the "Right-to-Left Override" (RLO), which causes following characters to be considered "strong" in the right-to-left direction until the override is ended by a PDF. Does this kind of thing work?

Check out RTLtest.html to see.

Screenshot from Chrome on Linux here:

As the test page notes, Black Hat Guy has apparently managed to subvert the implementation of this corner of Unicode interpretation in all non-Microsoft browser projects, so that the effect of his interventions will be non-deterministic in the absence of detailed knowledge about his collaborators' browser and OS choices.

(We need to do the test in a separate html file because WordPress has a set of obnoxious and hard-to-evade filters, which munge the text of posts in what someone once thought would be intelligent and helpful ways. Among many other annoyances, this results in the loss of most things like the RLO and LRO marks. This same misfeature has also required me to edit the second paragraph of the Wikipedia quotation displayed above, because WordPress blithely ignores explicit instructions to the contrary and removes or defaces any attempt to display the relevant code points.)

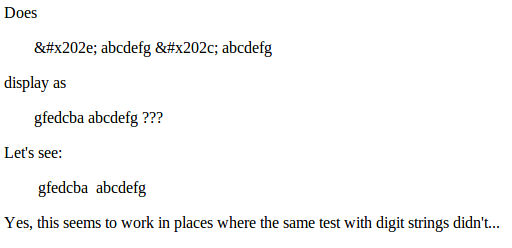

Update — OK, following up on suggestions in the comments, here's a version of the test using Latin letters. Chrome in Linux screenshot:

So indeed this works in some browser/OS combinations where the other file didn't. I still don't understand why the number-string version of the test produces such curious and variable results, though it obviously has something to do with the fact that in (most?) RTL languages, digit strings are rendered LTR. That doesn't explain why the right-hand digit string is rendered RTL, though; and maybe in the non-IE browsers the second digit string winds up on the left (a problem that I didn't think about). Still, it's not clear to me why that should happen with digits and not with letters. Convolutions within convolutions. Whatever: it just shows that Black Hat Guy has been hard at work in the councils of the Unicode Consortium as well.

Feel free to tell us about your explorations of these issues in various browsers and text editors and word processing programs. Or (as the cartoon suggests) in IM contexts?

Philip Spaelti said,

November 21, 2012 @ 8:01 am

I guess there is a brief convolution when he gets to "the" in the 2nd line…

[(myl) A typical problem in attributional abduction: Did Randall faithfully copy a fictional typographical error, or insert a real-life typo of his own?]

r said,

November 21, 2012 @ 8:20 am

You shouldn't have used numbers for your test. They are treated differently, introducing an extra variable. Try 'abcdefghij' or something.

Philip Spaelti said,

November 21, 2012 @ 8:23 am

More seriously, I am a little worried about your test because you are using numbers. I believe that in RTL languages numbers are supposed to behave differently from script (they are supposed to display the same as for us).

I just tried to modify your test to use "abcedf" and things behave a little differently. But I can never get my head around this stuff.

r said,

November 21, 2012 @ 8:29 am

Also, you shouldn't have used the same string twice. The fact that the '0123456789' on the left is actually the *second* one (the one after the U+202C) is thus obscured!

BIDI is really terrifying stuff, and I don't claim to understand it for a second.

Philip Spaelti said,

November 21, 2012 @ 8:35 am

I completely agree with r. Note that if you put some text between the numbers they suddenly flip to the order you were looking for.

Mark Young said,

November 21, 2012 @ 8:40 am

I did the digits to letters conversion, and in Chrome it came out as it should have (with the embedded space and all). In IE it came out as it should have /except/ for the space (which was apparently deleted — only one space was left on the line as shown on-screen).

Lane said,

November 21, 2012 @ 10:17 am

I learned the words "munge" and "misfeature" from this post. Thanks!

Ellen K. said,

November 21, 2012 @ 10:26 am

I wonder if using a nonbreaking space rather than just the space character makes a difference.

Henry Clay said,

November 21, 2012 @ 11:26 am

If you look closely, he's also put those control characters in the title tag, so depending on your browser, your title bar might also be reversed.

For example, in Firefox on linux, I'm seeing "xkcd: xoferiF allizoM – RTL" in the title bar when I visit xkcd.com

Wm Tanksley said,

November 21, 2012 @ 11:51 am

This is related to a hack used to entice people to open attachments. You name a file something like "interestingfile gpj.exe", but replace the space with the BiDi right-to-left override character (U+202E). Windows Explorer obediently displays the filename as interestingfileexe.jpg, but of course it sees the extension as EXE.
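A rough Python sketch of how one might spot such a name, assuming the space has been replaced by U+202E: it shows that the extension a program actually acts on is still `.exe`, and lists any explicit bidi formatting characters hiding in the string. The helper `bidi_controls` and the set `EXPLICIT_BIDI` are hypothetical names, not part of any library.

```python
import os.path
import unicodedata

# Bidi classes of the explicit formatting characters (embeds, overrides, PDF).
EXPLICIT_BIDI = {"LRE", "RLE", "LRO", "RLO", "PDF"}


def bidi_controls(name):
    """Return (index, code point label) for each explicit bidi control in name."""
    return [
        (i, f"U+{ord(ch):04X}")
        for i, ch in enumerate(name)
        if unicodedata.bidirectional(ch) in EXPLICIT_BIDI
    ]


# The example name from the comment, with the space replaced by RLO.
name = "interestingfile\u202egpj.exe"

print(os.path.splitext(name)[1])  # the real extension, in storage order
print(bidi_controls(name))        # where the hidden control character sits
print(ascii(name))                # escaped form that makes the control visible
```

Printing the escaped form with `ascii()` is a cheap way to see what is really stored, whatever the display layer chooses to show.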

Dan Lufkin said,

November 21, 2012 @ 12:34 pm

T Eliot top bard notes putrid tang emanating is sad Id assign it a name gnat dirt upset on drab pot toilet

TonyK said,

November 21, 2012 @ 1:02 pm

You shouldn't use two identical strings for your test! How can you tell "string1 2gnirts" from "string2 1gnirts" if string1 and string2 are identical?

You could have used, for example 12345 and 67890.

[(myl) Yes, several others have made that point. You could add productively to the conversation by showing us what the results look like.]

Pavel Curtis said,

November 21, 2012 @ 1:31 pm

If you'd like a more detailed explanation of the bidi algorithm, which will explain why digits behave differently from Latin letters, check out the Unicode Standard Annex #9: The Unicode Bidirectional Algorithm, here: http://www.unicode.org/reports/tr9/tr9-27.html

[(myl) Does it explain why IE behaves differently from the other browsers? Or tell us which behavior is "correct"?]

David Morris said,

November 21, 2012 @ 4:55 pm

Should "ETH" in the middle of the bottom block be "EHT" or is there some sort of in-joke there? I could imagine someone writing "het" as the reverse of the error/internet usage/in-joke "teh".

Daniel Barkalow said,

November 21, 2012 @ 4:58 pm

You can discover a lot about what's going on by selecting parts of the text. The browser will select regions which are contiguous in writing order, not regions which are contiguous in space.

I believe what's going on is like this: when you write, you have an area on the line which hasn't been used yet. RTL characters consume space at the right end of this area, and LTR characters consume space at the left end of this area. This means that, if you have a chunk of RTL characters in the middle of a bunch of LTR characters, all of the RTL characters will appear to the right of the LTR characters, regardless of how they interleave. However, a strong LTR character eliminates the remaining unused space in the center of the line and creates a new unused space to the right of anything already written.

In your first test, we write the digits that appear on the right first, starting from the right, and then the digits that appear on the left, starting from the left; finally, we shove the whole line together and put it at the left side of the page. In your second test, we write the letters that appear on the left first, starting from the right, then we shove all that over to the left and write the remaining letters left to right to the right of what had been written already.

I have no idea why anyone would want the behavior of sorting LTR characters entirely to the left of RTL characters, but it's plausible as a naive rule when there isn't a clear guide as to the correct answer for where to place each sequence of same-direction characters.

F said,

November 21, 2012 @ 5:00 pm

You say (on your test page) that space is a weak character. It isn't – it is neutral, which is quite different in terms of the bidi algorithm (though I haven't quite worked out whether that makes any practical difference here). Nonbreak space (Ellen K.) *is* a weak character.
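The distinction can be checked against Python's copy of the Unicode character database. This is only a sketch: the grouping into strong, weak and neutral follows the class table in UAX #9 as I understand it, and `bidi_category` is a made-up helper name.

```python
import unicodedata

# Grouping of bidi classes into the three broad categories (assumed from
# the table of bidirectional character types in UAX #9).
STRONG = {"L", "R", "AL"}
WEAK = {"EN", "ES", "ET", "AN", "CS", "NSM", "BN"}
NEUTRAL = {"B", "S", "WS", "ON"}


def bidi_category(ch):
    """Return (category, bidi class) for a single character."""
    cls = unicodedata.bidirectional(ch)
    if cls in STRONG:
        return ("strong", cls)
    if cls in WEAK:
        return ("weak", cls)
    if cls in NEUTRAL:
        return ("neutral", cls)
    return ("explicit/other", cls)


for label, ch in [("space", " "), ("no-break space", "\u00a0"),
                  ("digit", "5"), ("Latin letter", "a")]:
    print(label, *bidi_category(ch))
```

The ordinary space reports class `WS` (neutral), the no-break space reports `CS` (weak), and European digits report `EN` (also weak).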

Ø said,

November 21, 2012 @ 5:18 pm

He has changed ETH to EHT now.

said,

November 21, 2012 @ 6:58 pm

Here's a walkthrough of the algorithm applied to this text:

RLO 01234 PDF 56789

Which appears in your browser as:

0123456789

Steps that aren't matched are omitted.

Refer to http://www.unicode.org/reports/tr9/ for the explanation of each step.

(I expect WordPress to destroy my formatting. The text, type, and level lines should be vertically aligned throughout.)

Start:

text = RLO 0 1 2 3 4 PDF 5 6 7 8 9

type = RLO EN EN EN EN EN PDF EN EN EN EN EN

level=

P3: base = 0

X1: (current, override) = (0, N)

X4: push (0, N); (current, override) = (1, R)

X9:

text = 0 1 2 3 4 PDF 5 6 7 8 9

type = EN EN EN EN EN PDF EN EN EN EN EN

level=

X6 (5 times):

text = 0 1 2 3 4 PDF 5 6 7 8 9

type = R R R R R PDF EN EN EN EN EN

level= 1 1 1 1 1

X7: pop -> (current, override) = (0, N)

X9:

text = 0 1 2 3 4 5 6 7 8 9

type = R R R R R EN EN EN EN EN

level= 1 1 1 1 1

X6 (5 times):

text = 0 1 2 3 4 5 6 7 8 9

type = R R R R R EN EN EN EN EN

level= 1 1 1 1 1 0 0 0 0 0

I1:

text = 0 1 2 3 4 5 6 7 8 9

type = R R R R R EN EN EN EN EN

level= 1 1 1 1 1 2 2 2 2 2

L2 (level=2):

text = 0 1 2 3 4 9 8 7 6 5

type = R R R R R EN EN EN EN EN

level= 1 1 1 1 1 2 2 2 2 2

L2 (level=1):

text = 5 6 7 8 9 4 3 2 1 0

type = EN EN EN EN EN R R R R R

level= 2 2 2 2 2 1 1 1 1 1

The key step is I1, which increases the level of the ENs (European Numbers) so that they are reversed twice in step L2.

Inserting a letter after the PDF activates step W7, which turns the second set of ENs into Ls, like so:

RLO 01234 PDF a56789

01234a56789
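The walkthrough above can be replayed with a small Python sketch. This is not a full implementation of UAX #9: it assumes a base level of 0 and handles only the pieces that appear in the walkthrough (RLO, PDF, European numbers and Latin letters, via simplified versions of rules X4, X6, X7, X9, W7, I1/I2 and L2). The function name `visual_order` is made up for illustration.

```python
import unicodedata


def visual_order(text):
    """Return text in display order (simplified subset of UAX #9, base level 0)."""
    stack, level, override = [], 0, None
    chars, types, levels = [], [], []
    for ch in text:
        cls = unicodedata.bidirectional(ch)
        if cls == "RLO":                      # X4: push, move to next odd level
            stack.append((level, override))
            level += 1 if level % 2 == 0 else 2
            override = "R"
        elif cls == "PDF":                    # X7: pop the previous state
            if stack:
                level, override = stack.pop()
        else:                                 # X6: assign level, apply override
            chars.append(ch)                  # X9: controls are never stored
            types.append(override or cls)
            levels.append(level)

    # W7: an EN whose nearest preceding strong type in its level run
    # (or the run's start-of-run type) is L becomes L.
    for i, t in enumerate(types):
        if t != "EN":
            continue
        j, strong = i - 1, None
        while j >= 0 and levels[j] == levels[i]:
            if types[j] in ("L", "R"):
                strong = types[j]
                break
            j -= 1
        if strong is None:                    # fall back to start-of-run type
            before = levels[j] if j >= 0 else 0
            strong = "R" if max(before, levels[i]) % 2 else "L"
        if strong == "L":
            types[i] = "L"

    # I1 / I2: resolve implicit levels.
    for i, t in enumerate(types):
        if levels[i] % 2 == 0:
            if t == "R":
                levels[i] += 1
            elif t in ("EN", "AN"):
                levels[i] += 2
        elif t in ("L", "EN", "AN"):
            levels[i] += 1

    # L2: from the highest level down to the lowest odd level, reverse each
    # contiguous stretch of characters at that level or higher.
    order = list(range(len(chars)))
    odd = [lv for lv in levels if lv % 2]
    if odd:
        for lvl in range(max(levels), min(odd) - 1, -1):
            i = 0
            while i < len(order):
                j = i
                while j < len(order) and levels[order[j]] >= lvl:
                    j += 1
                if j > i:
                    order[i:j] = order[i:j][::-1]
                    i = j
                else:
                    i += 1
    return "".join(chars[k] for k in order)


RLO, PDF = "\u202e", "\u202c"
print(visual_order(RLO + "01234" + PDF + "56789"))
print(visual_order(RLO + "01234" + PDF + "a56789"))
```

Under those assumptions the digits-only input comes out as 5678943210, matching the final L2 step above, while the version with a letter after the PDF comes out as 43210a56789.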

[(myl) Amazing! Thank you…]

said,

November 21, 2012 @ 7:04 pm

PS.

I don't know enough about RTL languages to hazard a guess at why the algorithm is designed to do this. But, it's apparent that IE's behavior is incorrect.

Marc said,

November 21, 2012 @ 7:31 pm

Randall has fixed the convolution…

Anthony said,

November 21, 2012 @ 11:03 pm

But does Unicode support boustrophedon?

Warp3 said,

November 22, 2012 @ 7:37 am

—

If you look closely, he's also put those control characters in the title tag, so depending on your browser, your title bar might also be reversed.

For example, in Firefox on linux, I'm seeing "xkcd: xoferiF allizoM – RTL" in the title bar when I visit xkcd.com

—

I hadn't noticed that until you posted this. Mine reads "xkcd: arepO – RTL" using Opera (in a Linux VM).

ErikF said,

November 25, 2012 @ 9:53 am

The BiDi algorithm works fairly well when it's used for simple to moderately-complex cases, but when you start dealing with things that are not words (or when people intentionally abuse the system) all bets are off! Raymond Chen described how this affects non-word items: http://blogs.msdn.com/b/oldnewthing/archive/2012/10/26/10362864.aspx