Danger: Demo!

John Seabrook, "The Next Word: Where will predictive text take us?", The New Yorker 10/14/2019:

At the end of every section in this article, you can read the text that an artificial intelligence predicted would come next.

I glanced down at my left thumb, still resting on the Tab key. What have I done? Had my computer become my co-writer? That’s one small step forward for artificial intelligence, but was it also one step backward for my own?

The skin prickled on the back of my neck, an involuntary reaction to what roboticists call the “uncanny valley”—the space between flesh and blood and a too-human machine.

The "artificial intelligence" in question is GPT-2, created early this year by OpenAI:

Our model, called GPT-2 (a successor to GPT), was trained simply to predict the next word in 40GB of Internet text. Due to our concerns about malicious applications of the technology, we are not releasing the trained model. As an experiment in responsible disclosure, we are instead releasing a much smaller model for researchers to experiment with, as well as a technical paper.

GPT-2 is a large transformer-based language model with 1.5 billion parameters, trained on a dataset of 8 million web pages. GPT-2 is trained with a simple objective: predict the next word, given all of the previous words within some text. The diversity of the dataset causes this simple goal to contain naturally occurring demonstrations of many tasks across diverse domains. GPT-2 is a direct scale-up of GPT, with more than 10X the parameters and trained on more than 10X the amount of data.

Given the organization name "Open AI", it's ironic that the cited result is actually closed — although the recipe is clear enough that anyone with the right skills and a moderate amount of computational resources should be able to replicate the system.

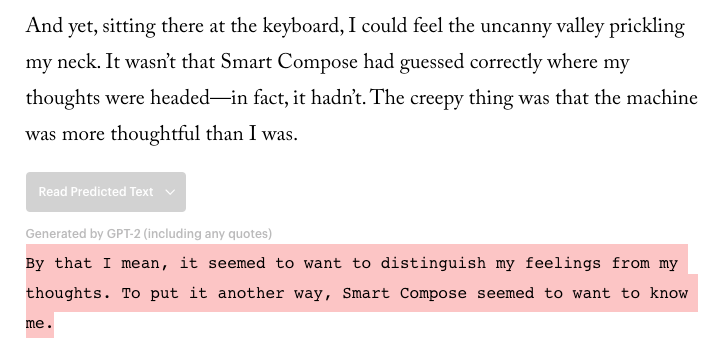

Seabrook's article gives an impressive demonstration of the technology — at nine points in (the online version of) his article (if I've counted right), there's a button labelled "Read Predicted Text". Here's the first one:

If you click on the button, you see what (a version of?) GPT-2 creates as a continuation of what Seabrook wrote up to that point — in this case it's

The other examples are equally spooky.

Later in the article, Seabrook discusses and exemplifies "fine tuning", i.e. adaptation of the GPT-2 system:

GPT-2 was trained to write from a forty-gigabyte data set of articles that people had posted links to on Reddit and which other Reddit users had upvoted. […]

Yes, but could GPT-2 write a New Yorker article? That was my solipsistic response on hearing of the artificial author’s doomsday potential. What if OpenAI fine-tuned GPT-2 on The New Yorker’s digital archive (please, don’t call it a “data set”)—millions of polished and fact-checked words, many written by masters of the literary art. Could the machine learn to write well enough for The New Yorker? Could it write this article for me? The fate of civilization may not hang on the answer to that question, but mine might.

I raised the idea with OpenAI. Greg Brockman, the C.T.O., offered to fine-tune the full-strength version of GPT-2 with the magazine’s archive. He promised to use the archive only for the purposes of this experiment. The corpus employed for the fine-tuning included all nonfiction work published since 2007 (but no fiction, poetry, or cartoons), along with some digitized classics going back to the nineteen-sixties.

I presume, though Seabrook doesn't tell us, that the "Read Predicted Text" examples in his article were generated by this "fine-tuned" version of GPT-2.

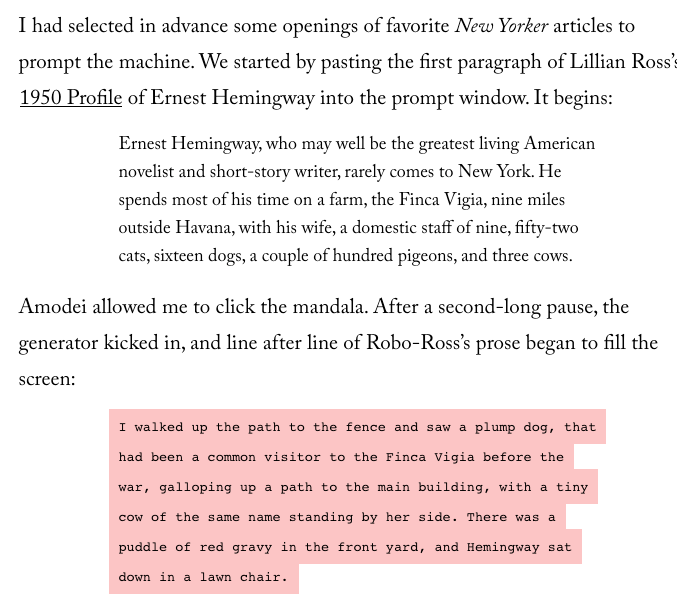

He gives another impressive example, of GPT-2 continuing a 1950 article that was not part of its training set:

As Seabrook observes, there are some issues:

On first reading this passage, my brain ignored what A.I. researchers call “world-modelling failures”—the tiny cow and the puddle of red gravy. Because I had never encountered a prose-writing machine even remotely this fluent before, my brain made an assumption—any human capable of writing this well would know that cows aren’t tiny and red gravy doesn’t puddle in people’s yards.

And he notes that such errors are in fact pervasive:

Each time I clicked the refresh button, the prose that the machine generated became more random; after three or four tries, the writing had drifted far from the original prompt. I found that by adjusting the slider to limit the amount of text GPT-2 generated, and then generating again so that it used the language it had just produced, the writing stayed on topic a bit longer, but it, too, soon devolved into gibberish, in a way that reminded me of HAL, the superintelligent computer in “2001: A Space Odyssey,” when the astronauts begin to disconnect its mainframe-size artificial brain.

This undermines the claim of GPT-2's creators that they've withheld the code because they're afraid it would be used to create malicious textual deep fakes — I suspect that such things would routinely exhibit significant "world modelling failures".

And Seabrook's experience illustrates a crucial lesson that AI researchers learned 40 or 50 years ago: "evaluation by demonstration" is a recipe for what John Pierce called glamor and (self-) deceit ("Whither Speech Recognition", JASA 1969). Why? Because we humans are prone to over-generalizing and anthropomorphizing the behavior of machines; and because someone who wants to show how good a system is will choose successful examples and discard failures. I'd be surprised if Seabrook didn't do a bit of this in creating and selecting his "Read Predicted Text" examples.
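To make the selection effect concrete, here is a toy simulation. It is only a sketch under made-up assumptions: the 30% success rate, the five tries per example, and the function name `demo_vs_true_rate` are all hypothetical, not measurements of GPT-2 or of anything Seabrook did. It compares how often a system really produces a "good" output with how often a demonstrator can show one after picking the best of several tries.

```python
import random

def demo_vs_true_rate(p_good, tries_per_example, n_examples, seed=0):
    """Compare a system's true success rate with the rate a demo shows.

    p_good is the (assumed) chance that any single output is acceptable.
    For each example the demonstrator generates several outputs and
    displays a good one whenever at least one exists.
    """
    rng = random.Random(seed)
    total_good = 0   # good outputs among everything generated
    shown_good = 0   # examples where the displayed output is good
    for _ in range(n_examples):
        outputs = [rng.random() < p_good for _ in range(tries_per_example)]
        total_good += sum(outputs)
        shown_good += any(outputs)
    true_rate = total_good / (n_examples * tries_per_example)
    shown_rate = shown_good / n_examples
    return true_rate, shown_rate

true_rate, shown_rate = demo_vs_true_rate(p_good=0.3, tries_per_example=5,
                                          n_examples=1000)
print(f"true success rate:  {true_rate:.2f}")
print(f"rate seen in demo:  {shown_rate:.2f}")
```

Under these assumptions the displayed rate should come out near 1 - 0.7^5, roughly 0.83, even though fewer than a third of the individual outputs are good. The audience sees only the second number.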

In general, anecdotal experiences are not a reliable basis for evaluating scientific or technological progress; and badly-designed experiments are if anything worse. For a bit more on this, from my perspective as of 2015, see these slides for a talk I gave at the Centre Cournot.

This is not to deny that GPT-2 and its ilk are impressive, interesting, and useful developments. And if you want to start to understand how and why this all works, see Andrej Karpathy, "The Unreasonable Effectiveness of Recurrent Neural Networks" (May 21, 2015), which illustrates the predictive-text capabilities of a much smaller and simpler "deep learning" system, with code that you can run yourself on a modern laptop.
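For an even smaller illustration of what "predict the next word" means, here is a minimal sketch of a bigram model. It just counts which word follows which in a training text. This is not Karpathy's code and is nothing like GPT-2's transformer; the tiny corpus and the names `train_bigram`, `predict_next`, and `generate` are invented for illustration. It does show the same loop Seabrook describes, in which each generated word is fed back in as context for the next one.

```python
import random
from collections import Counter, defaultdict

def train_bigram(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.split()
    follow = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        follow[prev][nxt] += 1
    return follow

def predict_next(follow, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    if word not in follow:
        return None
    return follow[word].most_common(1)[0][0]

def generate(follow, start, length, seed=0):
    """Sample a continuation, feeding each generated word back in."""
    rng = random.Random(seed)
    out = [start]
    for _ in range(length):
        options = follow.get(out[-1])
        if not options:
            break  # the last word never had a follower in training
        words, counts = zip(*options.items())
        out.append(rng.choices(words, weights=counts, k=1)[0])
    return " ".join(out)

# Toy training text (made up for this example).
corpus = "the cow ate the grass and the cow slept in the yard"
model = train_bigram(corpus)

print(predict_next(model, "the"))  # "cow": it follows "the" most often here
print(generate(model, "the", 8))
```

A model like this knows only which words have been adjacent, so its output is locally plausible and globally aimless. Much larger models stay coherent far longer, but the training objective is the same kind of thing.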

But I don't think that John Seabrook needs to worry about being replaced by GPT-2 — or even GPT-3 or GPT-4. For one of many reasons, read about the Winograd Schema Challenge, a type of text-based problem that tests exactly the abilities needed to avoid "world modelling failures" — and which the best AI systems so far don't do well on.

Benjamin E Orsatti said,

October 8, 2019 @ 7:37 am

This is interesting. Linguists will soon be in the position that geneticists find themselves in now — Realizing that there are things that science _can_ do, but wondering whether those things are things that science _should_ do. Nuclear weaponry, cloned human beings, the "outsourcing" of the pen.

Will society allow AI to run, unrestrained, towards its logical conclusion? Will my grandchildren be reading their array of potential responses to my senescent ramblings from their Google Glasses text prompts? Or from their retinal projection screens?

We complain that technology isolates people, that it inhibits communication, people spending time with each other. We complain that the new generations don't speak face-to-face — that they e-mail, text, or post on social media instead. And now, even that fragile communication link is being "automated"?

In other news, Google has apparently created a quantum computer…

Luzh said,

October 8, 2019 @ 7:45 am

There's a circularity here: GPT-2 is trained on articles submitted to reddit. Many of these are opinion pieces from blogs or mainstream news sites, and many of them are about AI, and I bet many of those are about the spooky aspects of smart compose and thoughts and feelings. The proliferation of these very kinds of articles has brought the source of their concerns closer to reality.

I agree that it lacks world knowledge, though in a different context the puddle of red gravy could be weighty surrealist symbolism. And as this article posits, we often grant human writers the most charitable readings — for example finding acute insight and wisdom in New Yorker articles that really just mindlessly regurgitate old misgivings about computational creativity that look less accurate with each passing decade.

KeithB said,

October 8, 2019 @ 8:31 am

One wonders how many "tiny cows" there are in the corpus.

Philip Taylor said,

October 8, 2019 @ 8:56 am

Fairly sure that someone posted this "tiny cow" link only recently, but it seems singularly apposite in the present context …

Yuval said,

October 8, 2019 @ 12:01 pm

This failure mode in GPT-2 has been discussed quite eloquently here.

One replication effort is described here, another here, and the whole keeping-the-model-secret thing was ridiculed on Twitter across many a thread, to meme levels.

Thaomas said,

October 8, 2019 @ 2:40 pm

I stopped reading the New Yorker article after that first button. First I felt like the writer themself was trying — a little too hard — to write like a New Yorker article. Second I was not impressed by the continuation produced by the AI. They had just made a perfectly clear statement; why would they follow up with a flat, wordy restatement?

Jerry Friedman said,

October 8, 2019 @ 3:39 pm

what roboticists call the "uncanny valley"—the space between flesh and blood and a too-human machine.

I thought the uncanny valley was the space where too-human machines reside, between flesh and blood on one hill and safely mechanical machines on the other.

ktschwarz said,

October 8, 2019 @ 4:10 pm

You can generate your own imaginary news stories using the GROVER demo, as discussed recently by Janelle Shane on her AI Weirdness blog. Here's one failure mode: if I ask GROVER for an article at nytimes.com by Nicholas Wade headlined "Martians Discover Universal Structure of Human Language", it often produces an article that doesn't mention Martians!

Insert your own joke about whether the output is distinguishable from actual NYTimes articles — but that is actually the purpose of GROVER. I gave it a real article by Nicholas Wade, and indeed it rated it as "written by a human".

ktschwarz said,

October 8, 2019 @ 6:15 pm

What really jumped out at me in the Hemingway demo was the nonrestrictive relative clause introduced by "that":

Yes, it's the elusive ivory-billed relative clause, famed for its extreme rarity in print! I don't know what's more surprising: that GPT-2 produced it, or that Seabrook (and his editors) didn't notice it as a major violation of New Yorker style — on the contrary, he praises the machine for "expertly capturing The New Yorker’s cadences and narrative rhythms" and "[mastering] rules of grammar and usage".

JPL said,

October 8, 2019 @ 8:10 pm

Responding to ktschwarz above:

There seems to be so much wrong with the New Yorker continuation text, beginning with the fact that, far from being a good prediction, this would be a very highly unlikely text following the given text for any human writer. E.g., The ivory-billed relative clause does not fit with the preceding nominal as antecedent: the nominal is singular, referring to a particular dog, but the relative clause provided requires a generic nominal as antecedent, in this case probably one expressed with the definite article (e.g., "the grey coyote"), because of the adjectival "common". (Maybe that particular plump dog was a frequent visitor to the Finca Vigia before the war, but a common one?) Why are they aiming for prediction, instead of just producing something interesting? (Given the essential property of free will in purposeful adaptive action and the fact of human contrariety, it would not seem to be a realistic goal.)

ktschwarz said,

October 10, 2019 @ 1:00 pm

Yes, it's too bad Seabrook didn't look that closely. He was too dazzled by the glamor.

The question Seabrook didn't ask: Did the fine-tuning make any difference? That is, would the output have been any less New-Yorkerish after just the original Reddit training? I bet his OpenAI contacts were careful not to raise that question, because the answer could well have been "not much", considering that the New Yorker corpus was so much smaller, and the same genre (general journalism and essays).