The quality of quantity


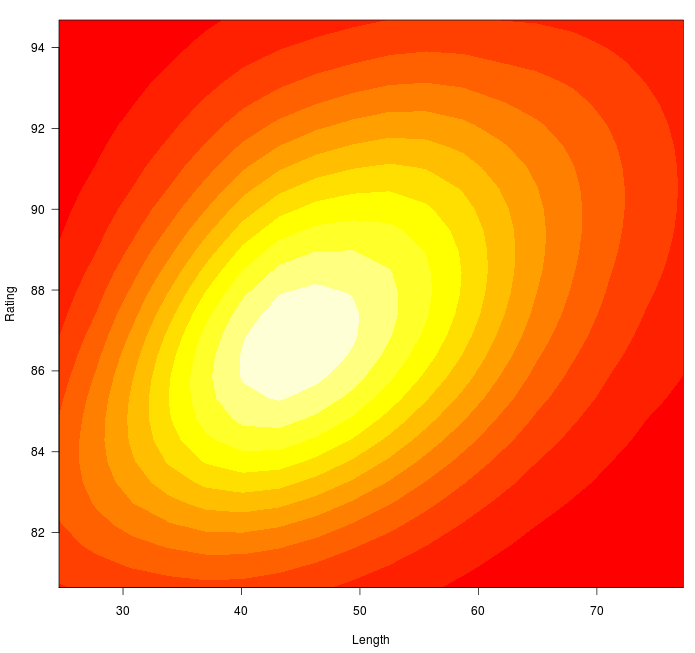

The longer it is, the higher the rating:

We're talking about the length of wine reviews, measured in words, and the numerical rating given to the associated wine. (Well, actually, the length of the reviews is measured in terms of the output of a tokenizer that sets off punctuation as well as alphanumeric strings…).

The data comes from 84,158 reviews at Wine Enthusiast magazine, and the picture above shows a density plot of the relationship between review length and the numerical rating of the associated wine. The reviews range in length from four reviews with just eight lexical tokens each:

smells vegetal , and tastes unnaturally sweet .

simple and sweet . tastes like lemonade .

flat , fruity and lacking in complexity .

unripe , with feline aromas and flavors .

… to ones like this with more than 130:

if there 's any such thing as the perfect spanish red , pesus is it . a blend of 80% tempranillo with other grapes including cabernet sauvignon , this wine sees 200% new oak , resulting in a thick , dark , tannic beauty that bubbles over with toast , cola , mint , chocolate and spice aromas . the mouth is sheer heaven ; a mile deep in terms of berry flavor and more , but faultless and smooth . shows outstanding structure and power , and should age well for 15 – 20 years . hails from two 100 – year – old vineyards and some baby vines with but 25 years of age . crazy expensive but only 150 cases were made ; drink 2013 – 2030 .

The average review length is 49 lexical tokens.
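As a rough illustration of what "lexical tokens" means here, the following is a minimal sketch of a tokenizer that sets off punctuation as well as alphanumeric strings. The actual tokenizer used for these counts is not specified in this post; the regular expression and the function name `tokenize` are assumptions, chosen only so that the quoted examples (a separate "'s", an unsplit "80%") come out the same way.

```python
import re

# Assumed token pattern (not the post's actual tokenizer):
#   \d+%      keeps percentages such as "80%" together
#   '\w+      splits off clitics such as "'s"
#   \w+       runs of letters, digits, and underscores
#   [^\w\s]   any other single non-space character (punctuation)
TOKEN_RE = re.compile(r"\d+%|'\w+|\w+|[^\w\s]")

def tokenize(text):
    """Lowercase the text and return its list of tokens."""
    return TOKEN_RE.findall(text.lower())

short_review = "Smells vegetal, and tastes unnaturally sweet."
tokens = tokenize(short_review)
print(" ".join(tokens))
print(len(tokens), "tokens")
```

Under this pattern the first short review above comes to eight tokens, six words plus the comma and the final period.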

The numerical ratings range from 80 to 100, with a mean of 87.4.

You can probably guess that the long review above was associated with a better rating than the earlier four short ones. And you'd be right: the long one scored 100, while the short ones scored 81, 80, 82, and 80. Much of your judgment would be based on the content ("feline aromas and flavors" is never going to beat "the mouth is sheer heaven", regardless of the rest of the context), but some of your reaction probably also comes from the simple length of the review.

In some situations, at least, a short evaluation does not seem as positive as a longer one. This is certainly the conventional wisdom about letters recommending someone for a job or for entrance to an educational program. A brief though positive recommendation ("X is excellent") seems less likely to impress readers than an equally positive letter that goes on at length about exactly how the writer knows that X is excellent.

In the case of the Wine Enthusiast reviews, we can be more precise about the relationship. The length of the review alone explains almost a quarter of the variance in ratings (Adjusted R-squared = 0.2389). The coefficients emerging from linear regression are

rating = 82.1 + 0.11*nwords
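For readers who want to try this kind of fit themselves, here is a minimal sketch of one-predictor least-squares regression in plain Python. It is not the code behind the numbers above; the function names and the four-point toy data set are made up for illustration, and only the coefficients 82.1 and 0.11 come from the post.

```python
from statistics import fmean

def fit_line(xs, ys):
    """Ordinary least squares for y = a + b*x; returns (a, b, r_squared)."""
    mx, my = fmean(xs), fmean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    syy = sum((y - my) ** 2 for y in ys)
    b = sxy / sxx                    # slope
    a = my - b * mx                  # intercept
    r2 = (sxy * sxy) / (sxx * syy)   # squared correlation
    return a, b, r2

def adjusted_r2(r2, n, p=1):
    """Adjusted R-squared for n observations and p predictors."""
    return 1 - (1 - r2) * (n - 1) / (n - p - 1)

def predict_rating(nwords, intercept=82.1, slope=0.11):
    """Evaluate the fitted line reported in the post."""
    return intercept + slope * nwords

# Made-up toy data lying exactly on the reported line (NOT the real reviews).
toy_lengths = [10, 20, 30, 40]
toy_ratings = [83.2, 84.3, 85.4, 86.5]
a, b, r2 = fit_line(toy_lengths, toy_ratings)
print(round(a, 2), round(b, 2), round(r2, 4))

# Sanity check on the reported coefficients at the mean length of 49 tokens.
print(round(predict_rating(49), 2))
```

A least-squares line passes through the point of means, so the prediction at the mean length of 49 tokens (about 87.5) should land close to the mean rating of 87.4, which it does up to rounding of the coefficients.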

This is not as good as we can do with a simple bag-of-words model of the content: predicting ratings from the review-by-word matrix, limited to the 5,516 words that occur at least 20 times overall, explains 73% of the variance. But still, nearly a quarter of the variance from a single, simple feature is not bad at all.
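To make "review-by-word matrix" concrete, here is a small sketch of how such a matrix might be built from tokenized reviews, keeping only words above a frequency threshold. The function name `build_matrix`, the use of raw counts rather than presence/absence, and the three-review toy corpus are all assumptions for illustration; the regression step on top of the matrix is left out.

```python
from collections import Counter

def build_matrix(token_lists, min_count=20):
    """Return (vocab, rows): rows[i][j] counts vocab[j] in review i.

    Only words whose total count over all reviews is at least
    min_count are kept as columns.
    """
    totals = Counter(tok for toks in token_lists for tok in toks)
    vocab = sorted(w for w, c in totals.items() if c >= min_count)
    index = {w: j for j, w in enumerate(vocab)}
    rows = []
    for toks in token_lists:
        row = [0] * len(vocab)
        for tok in toks:
            j = index.get(tok)
            if j is not None:   # skip words below the threshold
                row[j] += 1
        rows.append(row)
    return vocab, rows

# Toy corpus (the third "review" is made up), with a threshold of 2
# instead of 20 so that a few columns survive.
toy_reviews = [
    "simple and sweet .".split(),
    "flat , fruity and lacking in complexity .".split(),
    "sweet and simple".split(),
]
vocab, rows = build_matrix(toy_reviews, min_count=2)
print(vocab)
for row in rows:
    print(row)
```

With the real data, each row would then serve as the predictor vector for that review's rating in a multiple regression.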

Update — Here are a few previous studies on the topic of the length of letters of recommendation, which it seems that psychologists have been studying for nearly 50 years. In the large recent machine-learning literature on "sentiment analysis" as applied to product reviews, I haven't been able to find a clear evaluation of the effect of review length (though length is clearly a useful feature in detecting spam reviews) — perhaps a reader can help us out here.

Albert Mehrabian, "Communication Length as an Index of Communicator Attitude", Psychological Reports 1965:

It was hypothesized that communications about liked objects are longer than communications about disliked objects. Ss wrote letters of recommendation about liked and disliked people under conditions where the topics to be covered in the letter were minimally or partially specified. The hypothesis was confirmed for conditions of both minimal and partial topic specification.

Chris Kleinke, "Perceived Approbation in Short, Medium, and Long Letters of Recommendation", Perceptual and Motor Skills, 1978:

Mehrabian (1965) and Wiens, et al. (1969) found that subjects wrote longer letters of recommendation when the letter was for someone they liked rather than disliked. These results led Wiens, et al. to suggest that communication channels may provide a useful nonreactive measure of attitudes and motivations. The present research replicated the above encoding studies with a series of decoding experiments in which subjects rated short, medium-length, and long letters of recommendation written in English, German, or with deleted text. Short letters were evaluated as being least favorable towards the job applicant and long letters were evaluated as being most favorable toward the job applicant. It was concluded that attitudes attributed to length of letter are consistent with attitudes influencing length of letter. Subjects' limited awareness of the influence of length of letter on the evaluations was related to Nisbett and Wilson's (1977) argument about the weakness of introspection.

Stephen Colarelli et al., "Letters of Recommendation: An Evolutionary Psychological Perspective", Human Relations 2002:

This article develops a theoretical framework for understanding the appeal and tone of letters of recommendation using an evolutionary psychological perspective. Several hypotheses derived from this framework are developed and tested. The authors’ theoretical argument makes two major points. First, over the course of human evolution, people developed a preference for narrative information about people, and the format of letters of recommendation is compatible with that preference. Second, because recommenders are acquaintances of applicants, the tone of letters should reflect the degree to which the relationship with the applicant favors the recommender’s interests. We hypothesized that, over and above an applicant’s objective qualifications, letters of recommendation will reflect cooperative, status and mating interests of recommenders. We used 532 letters of recommendation written for 169 applicants for faculty positions to test our hypotheses. The results indicated that the strength of the cooperative relationship between recommenders and applicants influenced the favorability and length of letters. In addition, male recommenders wrote more favorable letters for female than male applicants, suggesting that male mating interests may influence letter favorability.

Jerry Friedman said,

April 24, 2012 @ 2:12 pm

I suspect part of the reason for this correlation is that complexity is supposed to be a good characteristic in wines. (I on the other hand might prefer the one that tastes like lemonade, and I might like lemonade even better.) I wonder whether reviews of things such as pens, which are supposed to work unobtrusively but can have various annoying or ineffective qualities, would show the opposite correlation.

Another reason, of course, is that once you notice the feline flavors, you stop looking for undertones of cola and mint. They won't help.

Nathan said,

April 24, 2012 @ 2:32 pm

So much for my plan to market Cat Cola.

uebergeek said,

April 24, 2012 @ 2:44 pm

Hmm, the more they like the wine the more verbose they become? Why does this correlation not come as a surprise? I always suspected they don't really spit it out… ;-)

Kenny Easwaran said,

April 24, 2012 @ 2:59 pm

I assume there's a couple obvious factors at work here. One is that if you really like something, you'll feel that it deserves more of your time and effort than something you don't particularly like. Another is that (at least with certain sensory things) it can be very easy to explain why something is bad, if there is a single overwhelming feature that is negative. To explain why something is good, on the other hand, you have to go into all the details of what makes it interestingly different from something else that is pretty good.

I suspect there's a relation to the Anna Karenina observation about happy families – it's often very obvious to say what's bad about a wine, but it's much harder to say what makes something good. But maybe this connection should be inverted when it comes to flavors.

Book reviews will often be interestingly different – sometimes the reason a book is bad is quite subtle, at least if it is a book that is supposed to lead you to a conclusion. Maybe it'll be more similar for reviews of fiction, or reading that is supposed to be more about enjoyment than edification?

uebergeek said,

April 24, 2012 @ 3:02 pm

And it almost seems like some sort of corollary to the disturbing findings emerging on standardized test essay scoring. http://nyti.ms/IqY5wu Though since students have long been taught to emphasize length over succinctness and style over substance, perhaps it actually confirms they've mastered the material…

[(myl) An interesting point of comparison. But the two cases are different, I think. In the SAT essay-grading case, the rating is given to the text itself by an independent examiner; here, the ratings are given by the author of the text him- or herself, to the thing that the text is describing.

In the SAT-grading case, a charitable interpretation would be that more fluent writers, who are able to turn out a longer essay within the severe time constraints imposed, are also (on average) better writers in other ways. There are obviously other, less charitable, interpretations.

In the case of wine tasting notes, I suspect that other things are going on. The reviewers are motivated to think and write at greater length about wines that they enjoy more; complexity is seen as a positive quality in wines, and aromas and tastes that are perceived as complex take longer to describe; higher-quality wines merit discussion of how long to wait before drinking them; and so on.]

David W. Hogg said,

April 24, 2012 @ 3:36 pm

It would be great to see the farthest outliers: What wines have the longest *bad* reviews, and the shortest *good* reviews. I bet highly priced, once-good producers earn the first category, but what is in the second?

Kevin said,

April 24, 2012 @ 5:09 pm

I can't comment on the wine reviews, but for the job recommendations I'd expect that much of the "short is bad" pattern (in the US) comes from potential legal liability. I would expect that many people might take a position that they should (i) say nothing untrue (slander) and (ii) say nothing negative (defamation). If asked to comment on a lousy employee, they might have little left to say.

[(myl) This may be true in some cases. But speaking for myself as a letter writer, I try to give some detail about the individual's work, perhaps summarizing their contribution to various projects, explaining the results of some key papers, along with why the results matter, and so on. This is a time-consuming process, and (though I try to give everyone a fair evaluation) I'm obviously more motivated to put in more time in the cases where I have a higher opinion of the work involved.]

Robert E. Harris said,

April 24, 2012 @ 8:17 pm

While I was a graduate student, many years ago, my research adviser (George Jura) reviewed research grant proposals for the Air Force Office of Scientific Research. He had me read many of his reviews. The longer they were the less enthusiastic they were.

[(myl) This accords with my experience in reading and writing reviews of grant proposals. The proposal itself will have presented all the reasons that it's great; in most cases, the role of the reviewer is either to agree with the proposal's self-characterization, or to point out mistakes, omissions, or problems.]

Keith said,

April 24, 2012 @ 8:46 pm

I would hope and suspect that the ratings are given during a blind tasting, and that the reviews are written after the tasting, once the labels are unveiled.

That makes me think that Kenny Easwaran has the right idea: the writers of the reviews are more likely to write longer reviews of wines that they enjoyed, because they feel:

more predisposed to spend time writing about something they like,

they need to justify giving a good rating to a particular wine.

Keith.

Graeme said,

April 24, 2012 @ 10:15 pm

The reviewer has tasted a cat?

Cat's pee aroma on the other hand is a common feature of reasonable sauvignon blanc. Are the Kiwis still marketing one affordable and decent SB under the name 'Cat's Pee on a Gooseberry Bush'?

richard said,

April 24, 2012 @ 11:10 pm

Finally, linguistics research with a practical application! Now I don't have to read wine reviews–I can just glance at the length of the column and buy the wine corresponding to the longest reviews. The resulting increase in purchasing efficiency will be sure to please the economist in the office next to mine.

U said,

April 25, 2012 @ 2:29 am

Not linguistics, but I find it interesting that they have 100 rating points but only use the top 20 of them. I have definitely drunk 10 wines.

GeorgeW said,

April 25, 2012 @ 5:33 am

It seems to me (based on unrigorous research) that the opposite is the case of crowd-sourced restaurant and hotel reviews in Urbanspoon, Yelp, Tripadvisor and the like. It seems that often highly negative reviews tend to be very wordy with the reviewer compelled to report every detail of their dissatisfaction.

[(myl) There are some collections of such reviews out there, and so this can be checked. I'm convinced, however, that many features of "sentiment analysis" are quite genre and context-specific, and I expect that the interpretation of length might very well switch sign from domain to domain.]

Dan H said,

April 25, 2012 @ 6:35 am

And it almost seems like some sort of corollary to the disturbing findings emerging on standardized test essay scoring. http://nyti.ms/IqY5wu

I actually *suspect* that those "disturbing findings" are a lot less disturbing than they seem.

Given a short period of time to produce a piece of writing on a given topic, the more able, more confident students are likely to wind up producing more writing than the less able, less confident students. I suspect that the truth is that the SATs do not "reward length and ignore facts" but rather that they ignore facts for perfectly sensible reasons (as far as I understand it, it's a writing test, not a history test, so a historical error is completely unrelated to what the test is actually testing) and they reward qualities which strongly *correlate* with the length of the essay.

Indeed the evidence from wine reviews suggests that there is clearly more to it than examiners blindly giving good marks to long essays – it is clearly *not* the case that wine reviewers decide how much they like a wine based on the length of their own review.

Jason Eisner said,

April 25, 2012 @ 6:55 am

Breakfast Experiment(TM): Here's a little animation of review score versus review length for the 1200+ reviews from the EMNLP 2007 conference.

http://cs.jhu.edu/~jason/tmp/review-score-and-length.gif

(blue line is median, gray line is mean)

There is a slight negative correlation, with problematic papers drawing slightly more comments. But perhaps also a hint of triage, where for papers that are beyond hope, reviewers might not waste as much time giving constructive feedback. I haven't tested significance because unlike Mark, I don't usually leave time for Breakfast. :-)

Jason Eisner said,

April 25, 2012 @ 7:27 am

Oh, and for comparison, here's a version like Mark's:

http://cs.jhu.edu/~jason/tmp/review-score-and-length.jpg

You can see more quickly that the effect, if any, is small (as it is with the wine reviews: Mark's plot zooms in on the range 80-95 characters). But it's harder on this plot to see what's going on with the very good and very bad papers, or with the long reviews, because there are too few of them to show up.

Jason Eisner said,

April 25, 2012 @ 7:30 am

"80-95 characters": I meant words, sorry.

[(myl) Actually, the vertical axis (80-95) represents the rating scale; and there are no ratings below 80, and only 629 of 84,158 (0.7%) above 95.

The horizontal axis shows the range between 25 and 78 words inclusive — this includes 78896 out of 84158 (94%) of the reviews. So most of the data is represented in the plot.]

GeorgeW said,

April 25, 2012 @ 7:50 am

@MYL: "I'm convinced, however, that many features of "sentiment analysis" are quite genre and context-specific . . ."

I also wonder if the status of the reviewer is a factor. A professional reviewer would have a different perspective than that of a consumer and this difference might be reflected in the word length of their comments.

Rob P. said,

April 25, 2012 @ 8:33 am

I hope not too off topic, but what does "sees 200% new oak" mean?

the other Mark P said,

April 26, 2012 @ 5:23 am

We hypothesized that, over and above an applicant’s objective qualifications, letters of recommendation will reflect cooperative, status and mating interests of recommenders.

Eeek! Well, I'm not aware of taking "mating interests" into account when I write letters of recommendation!

It was hypothesized that communications about liked objects are longer than communications about disliked objects.

I have several times had to write letters on behalf of people which were negative. They tended to be very short. But it wasn't because I didn't like them, because they had behaved badly or such negative thoughts of them. Instead it was because they were people with very little to recommend them.

Conversely I have written quite long positive letters on behalf of people I didn't much like. But those people did, actually, have some things other people might want.

Sometimes it is possible to overthink these things.

Mak said,

April 26, 2012 @ 10:54 am

>I hope not too off topic, but what does "sees 200% new oak" mean?

It's an unofficial way to say that the wine was put into new oak barrels, and then at some point transferred into another set of new oak barrels.

Urso said,

April 27, 2012 @ 1:12 pm

More interesting to me is that an 80 is apparently an F. Actually, looks like an 82 is an F, and an 80 would be an F—

Of course, there's an entirely logical and noncharitable reason for this. Joe 750ml walking through the grocery store looking for a $10 red doesn't know about this inflated and truncated system. An "87," by itself, looks damn good. And under a normal scale it would be. But that would be a wholly average, 50th percentile wine.

Even worse, Joe may see something marked an 80 for only $4 and think, well, that's not the highest rating on the shelf but it's at least a passing score. Two hours later he gets a mouthful of cat urine.

Paul Kay said,

April 28, 2012 @ 6:36 pm

Urso, if you buy groceries in California, I imagine you've noticed that the smallest eggs you can buy are 'large'. (The medium-sized eggs are 'extra large', and the large eggs are 'jumbo'.) When a recipe calls for, say, four large eggs, I uh …

Dan Hemmens said,

April 29, 2012 @ 11:05 am

I seem to recall that there's an old Language Log post about the "large is the smallest" phenomenon which points out (with respect to coffee at least) that it makes a lot more sense than it seems to. I suspect that the reason the smallest eggs are "large" is not that supermarkets are mislabeling their eggs to gull foolish customers, but that a "large egg" is a specific size of egg which happens to be the smallest size of egg it's worth selling (when, after all, did you ever see a recipe call for "four small eggs").

Rube said,

April 30, 2012 @ 9:39 am

@Dan Hemmens, indeed, as someone who has sorted eggs, I can tell you that there are many official sizes of eggs, with the smallest being, IIRC, "Peewee". Most of the eggs smaller than "Large" will go for processing into baked goods and such like, and "Large" size is mostly what you'll see in stores.

If a recipe calls for "four large eggs", you'll do fine using the eggs marked "Large".

The Quality-Length Correlation - Lingua Franca - The Chronicle of Higher Education said,

May 1, 2012 @ 10:59 pm

[…] on Language Log recently a post by the eminent computational linguist Mark Liberman entitled "The quality of quantity" that reported on a result relating to quality judgments in wine reviews revealing a […]