Separated by a common problem


The first issue of a new journal has just appeared: Linguistic Evidence in Security, Law and Intelligence (LESLI), founded and edited by Dr. Carole Chaski. As a member of the editorial board, I'm pleased with the quality of the first issue, and I feel that Carole deserves a round of applause.

But there's something in the first issue that reminds me of a long-standing puzzle: why is there so little communication between two research communities who seem to be working on essentially the same problem? The trigger is Harry Hollien's policy paper, "Barriers to Progress in Speaker Identification with Comments on the Trayvon Martin Case". And the two communities — separated by a common problem — are the people who work on what Prof. Hollien calls (forensic) "speaker identification", versus the people involved with what I know as "speaker recognition research".

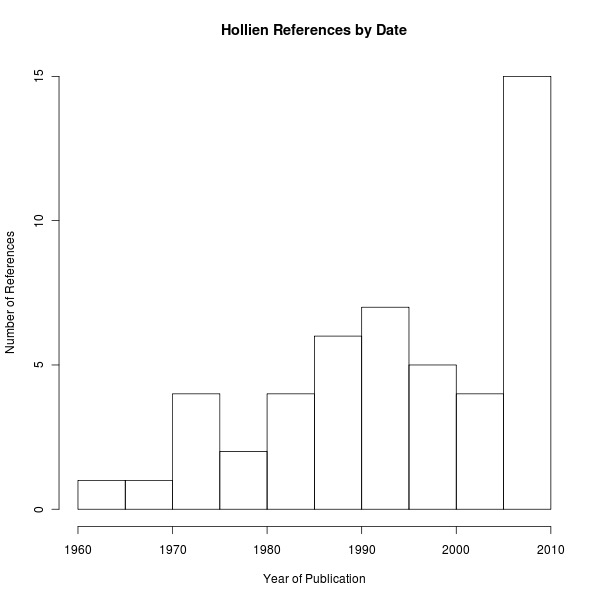

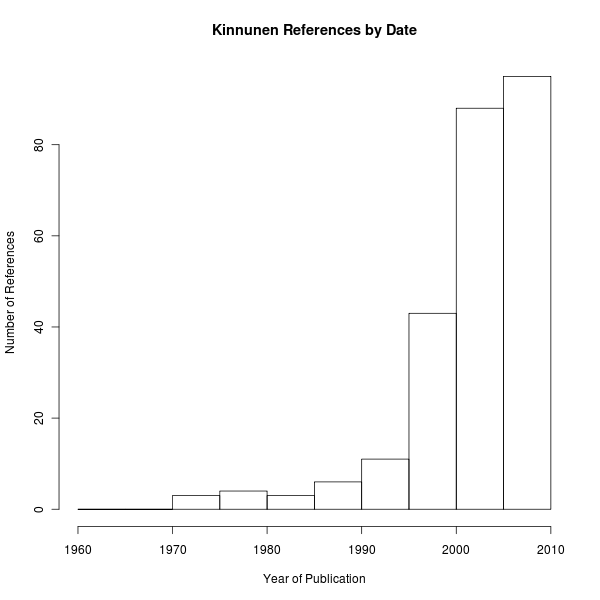

One way to explain my reaction is to compare Prof. Hollien's paper with a fairly recent review of the speaker recognition field as I know it: Tomi Kinnunen and Haizhou Li, "An overview of text-independent speaker recognition: From features to supervectors", Speech Communication, January 2010 (cited 322 times, according to Google Scholar). Kinnunen and Li have 266 references; Hollien has 62. Both papers survey the past 40-50 years of work, with an emphasis on more recent stuff. The references that have associated dates since 1960 compare like this:

[Figure: publication dates of references since 1960, Kinnunen & Li vs. Hollien]

But (a quick scan suggests that) there is only one reference in common: A. Alexander, F. Botti, D. Dessimoz, & A. Drygajlo, "The effect of mismatched recording conditions on human and automatic speaker recognition in forensic applications", Forensic Science International 146S, December 2004.

And there's one other case where the same material is cited via two different publications: Hollien cites D.A. Reynolds, "Speaker Identification and Verification Using Gaussian Mixture Speaker Models", Speech Comm. 1995, while Kinnunen & Li cite D. Reynolds & R. Rose, "Robust text-independent speaker identification using Gaussian mixture speaker models", IEEE Trans. Speech Audio Process. 1995.

Prof. Hollien suggests that the research he ignores aims to solve a fundamentally different problem:

It first must be pointed out that speaker identification (SI) is but one of two related divisions subsumed under speaker recognition. The other is speaker verification (SV). Speaker verification (SV) is 1) where a talker wishes to be correctly identified (examples: to gain access to a bank account, to attain entry to a restricted area) or 2) when an individual’s identity needs to be validated for some reason (examples: a speaker at a security outpost or as to which astronaut is talking in the space capsule). Only the most sophisticated procedures and equipment are employed for speaker verification. Moreover, the SV examiner can establish a library of many and varied referent speech samples to compare with the questioned utterance(s). On the other hand, Speaker identification (SI) constitutes a much greater challenge. Here an often uncooperative and unknown speaker, residing within a population of unknown size and composition, must be identified by analyzing samples of his or her speech and voice. The examiner must do so under conditions which, in many cases, range from poor to minimally adequate. As is obvious, a different approach to successful recognition is needed for SI than is the one for SV. […]

[T]he presence of, and competition by, SV has seriously impeded good progress in the development of effective SI procedures. It has done so for two reasons. First, SV is commercially viable. It can be useful in many situations — in the military, at correctional institutions, for security purposes and, especially, in commerce. As a result, the SV developer’s financial rewards – i.e., those resulting from the purchase and use of his SV system – are substantial indeed. As you might expect, this situation attracts many professionals to organizations that need to verify the identity of their customers, clients, associates, personnel and/or others. In turn, these relationships have served to shift most of the financial and research support from SI to SV.

Secondly, the relatively benign environment associated with SV and the relatively modest number of variables encountered here are attractive to professionals and draw them to this area (rather than to SI). Ironically, since SV is many magnitudes a lesser challenge than is SI, if a good SI system could be developed, the problem of verification would be solved also. Finally, engineers tend to be comfortable with verification. Their response often is to develop algorithms which permit them to build a device that can be directed at the problem (even though it may not directly focus on speech or voice) and then use it. If the system does not operate effectively, it is discarded and replaced by a different one.

Thus, it is phoneticians who are left to develop speaker-specific ways to identify talkers. When they do, they then must subject their systems to a series of experiments (sometimes a long series) in order to see if they work. Of course, this latter approach is costly and results in a delay between the system’s concept and its use. In short, the glamour and financial rewards of SV have seriously overshadowed the slow, grinding pathway to successful SI.

Worse yet, the differences between the SI and SV domains have tended to force the phoneticians and engineers to work apart from each other. Only a few of them presently collaborate and this lack of extensive cooperation serves to impede progress in the development of effective speaker identification systems.

This is one of those cases that makes me wonder, from time to time, whether transdimensional leakage has somehow mingled subcultures from different possible worlds. Here's what Kinnunen & Li have to say about applications of the research they're surveying:

An important application of speaker recognition technology is forensics. Much of information is exchanged between two parties in telephone conversations, including between criminals, and in recent years there has been increasing interest to integrate automatic speaker recognition to supplement auditory and semi-automatic analysis methods […]

Not only forensic analysts but also ordinary persons will benefit from speaker recognition technology. […] An example is automatic password reset over the telephone.

In addition to telephony speech data, there is a continually increasing supply of other spoken documents such as TV broadcasts, teleconference meetings, and video clips from vacations. Extracting metadata like topic of discussion or participant names and genders from these documents would enable automated information searching and indexing. Speaker diarization, also known as “who spoke when”, attempts to extract speaking turns of the different participants from a spoken document, and is an extension of the “classical” speaker recognition techniques applied to recordings with multiple speakers.

In forensics and speaker diarization, the speakers can be considered non-cooperative as they do not specifically wish to be recognized. On the other hand, in telephone-based services and access control, the users are considered cooperative. […] In text-dependent systems, suited for cooperative users, the recognition phrases are fixed, or known beforehand. […] In text-independent systems, there are no constraints on the words which the speakers are allowed to use. Thus, the reference (what are spoken in training) and the test (what are uttered in actual use) utterances may have completely different content, and the recognition system must take this phonetic mismatch into account. Text-independent recognition is the much more challenging of the two tasks.

Although K & L don't mention intelligence applications, it should be obvious that these are also among the important motivations for speaker recognition research — and are also circumstances where "an often uncooperative and unknown speaker, residing within a population of unknown size and composition, must be identified by analyzing samples of his or her speech and voice".

Another indication of cultural barriers to communication (or of transdimensional mixing of alternative histories) is the fact that Prof. Hollien doesn't mention or reference the 17 years of Speaker Recognition Evaluations (SREs) sponsored by the U.S. National Institute of Standards and Technology. His phrase "the slow, grinding pathway to successful SI" strikes me as an excellent characterization of this program. And against the background of this research, Prof. Hollien's complaint about "sharply controlled environments" and "utterances that vary but little from the speech contained in the reference sets" is simply bizarre (or again, perhaps, valid in a parallel universe not our own):

[A]s you might expect, engineers tend not to have much of a background in the behavioral sciences — in the anatomy and physiology of speech, or in psychoacoustics. Yet, these parameters all play important roles when a person attempts to understand, and compensate for, the many variables that impact the speaker identification process. Inadequacies of this type make it difficult for engineers to develop systems which can identify specific speakers unless they are in a sharply controlled environment and their utterances vary but little from the speech contained in the reference sets.

The NIST SREs have featured speech from interviews recorded with literally dozens of systematically varied microphones and microphone placements; sampled variations in room acoustics and background noise level; included telephone conversations using multiple different devices, transmission channels and locations, among them cell-phone recordings made on busy city streets; used recordings in different languages by bilingual speakers; included recordings made by given speakers at various times over a span of 17 years; and so on and so on. And the wide range of envisioned applications is underlined e.g. by George Doddington, "The Role of Score Calibration in Speaker Recognition", Interspeech 2012:

NIST has fostered research in speaker recognition through a continuing series of evaluations. In these evaluations the general speaker recognition problem has been framed as a detection task, with performance characterized in terms of miss and false alarm probabilities. While most research effort toward this objective is focused on improving performance generally without regard to particular tasks or applications, the job of setting detection thresholds and making decisions remains an important consideration and a significant technical challenge.
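To make the "detection task" framing concrete, here is a minimal sketch, assuming that each trial (a test recording paired with a claimed speaker) yields a single score, and that the system accepts the trial when the score meets a threshold. The scores are invented for illustration and come from no NIST evaluation; the function names `error_rates` and `detection_cost` are hypothetical. The cost is a weighted combination of the two error probabilities, similar in form to the detection cost functions used in the NIST evaluations, but the default weights here are placeholders rather than any evaluation's official parameters.

```python
# Minimal sketch: speaker recognition framed as a detection task.
# Each trial produces a score; higher means "more likely the same speaker".
# All scores below are invented for illustration (hypothetical data).


def error_rates(target_scores, nontarget_scores, threshold):
    """Return (P_miss, P_fa) when trials scoring >= threshold are accepted."""
    # A miss: a same-speaker (target) trial scored below the threshold.
    misses = sum(1 for s in target_scores if s < threshold)
    # A false alarm: a different-speaker (non-target) trial accepted.
    false_alarms = sum(1 for s in nontarget_scores if s >= threshold)
    return misses / len(target_scores), false_alarms / len(nontarget_scores)


def detection_cost(p_miss, p_fa, c_miss=1.0, c_fa=1.0, p_target=0.5):
    """Weighted cost of the two error types (DCF-style form).

    The default weights are placeholders, not values from any evaluation.
    """
    return c_miss * p_miss * p_target + c_fa * p_fa * (1.0 - p_target)


if __name__ == "__main__":
    # Hypothetical scores for target and non-target trials.
    target = [2.1, 1.4, 0.9, 0.3, -0.2]
    nontarget = [-1.5, -0.8, -0.4, 0.1, 0.6, -2.0, -1.1, 0.2]

    # Sweeping the threshold trades misses against false alarms.
    for threshold in [-1.0, 0.0, 0.5, 1.0]:
        p_miss, p_fa = error_rates(target, nontarget, threshold)
        cost = detection_cost(p_miss, p_fa)
        print(f"threshold={threshold:+.1f}  P_miss={p_miss:.2f}  "
              f"P_fa={p_fa:.2f}  cost={cost:.3f}")
```

Raising the threshold lowers the false-alarm rate at the price of more misses. Which operating point is "right" depends on the relative costs and the prior probability of a target trial, which differ from one application to another; that is the threshold-setting problem the quoted passage describes.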

For people who follow "speaker recognition research", at least in my universe, the NIST SRE data and metrics are hard to miss. The IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP) is one of the main venues where speech research of all kinds is reported, and a search for the string "SRE" in paper texts shows 79 papers over the past eight years that have used NIST SRE data and/or report results on NIST SRE tasks:

| Year | ICASSP papers |
| --- | --- |
| 2006 | 1 paper |
| 2007 | 7 papers |
| 2008 | 5 papers |
| 2009 | 12 papers |
| 2010 | 3 papers |
| 2011 | 20 papers |
| 2012 | 12 papers |
| 2013 | 19 papers |
| TOTAL | 79 papers |

For those who believe that only old-fashioned journal articles should count, a similar search for "SRE" in the journal Speech Communication yields 21 relevant articles, and a search in Computer Speech and Language yields 12 relevant articles.

Let me hasten to add that the point of this post is not to argue with Prof. Hollien, but to underline the extreme lack of communication between research areas that his "policy paper" exemplifies. I hope that the new journal will help make some of those barriers more permeable.

Carole Chaski said,

December 12, 2013 @ 12:01 pm

Thank you, Mark.

LESLI's policy papers are intended to spark discussion in the field of forensic linguistics, and LESLI provides a forum, as the home page states, for policy discussions. It is an unfortunate feature of the current state of affairs that conflicting parties often refuse to speak to each other, or take over fora where only one side of a conflict can be heard. One of the primary motivations for starting LESLI was to provide a place where real discussions about the development and use of linguistic evidence can take place. I have been involved with several technical working groups in forensic science and know firsthand that consensus and progress do not come easily. It certainly takes courage to participate in this kind of discussion, where conflict is inevitable, and to really listen to and engage with alternative viewpoints, but I strongly believe that it is the only way for the field of forensic linguistics to make real progress.

Theophylact said,

December 12, 2013 @ 4:03 pm

Reminds me of Solzhenitsyn's The First Circle. Forensic linguistics has many uses.

Bill Tozier said,

December 17, 2013 @ 2:14 pm

As to "so little communication between two research communities who seem to be working on essentially the same problem," I'd suggest that Andrew Abbott has cleared that up fairly thoroughly in The System of Professions, at least for my poor layman's sensibilities. Even if you're already familiar with the work (or with The Chaos of Disciplines, which I found a bit too sociology-focused), I recommend revisiting it now and then.