Two Disciplines in Search of Love


This is a guest post by Bill Benzon, in response to earlier posts by Hannah Alpert-Abrams and Dan Garrette ("Computational linguistics and literary scholarship", 9/12/2013) and David Bamman ("On Interdisciplinary Collaboration and 'Latent Personas'", 9/17/2013).

In scientific prognostication we have a condition analogous to a fact of archery—the farther back you draw your longbow, the farther ahead you can shoot.

– Buckminster Fuller

“We need to make every effort to defend, in changed circumstances, the tradition that makes the humanities in the university the place especially charged with the combination of Bildung and Wissenschaft, ethical education and pure knowledge.”

– J. Hillis Miller

I’ve been thinking about literary criticism and computational linguistics since the mid-1970s, when I was a graduate student at SUNY Buffalo. I was in the English Department, which was roiling with the new conceptual currents flowing from Europe, France in particular, by way of Johns Hopkins (where I’d done my undergraduate work). But I got my most important training under David Hays, a computational linguist who’d been the founding chair of SUNY’s Linguistics Department and had been one of the first-generation researchers in machine translation. Hays had his Continental affinities, too, but not for Lévi-Strauss or any of the post-structuralists. He was particularly attracted to the dependency grammar of Lucien Tesnière and the functionalist proclivities of the contemporary Prague School linguists, Sgall and Hajičová in particular. But let me assure you, he knew his Jakobson too, and regarded him as arguably the greatest linguist of the 20th Century.

The purpose of this essay is to open up a discursive space in which the manifold relationships between computational linguistics and literary studies can be investigated with care and, yes, at leisure, because that’s the only way we’ll have time to acquire the conceptual tools needed to cross the very wide canyon between computational linguistics and literary studies as they are currently constituted. Thus I begin by quoting a passage from Alan Liu, a proponent of digital humanities who can’t resist firing some shots across the bow of the Good Ship Computation – a pre-emptive strike perhaps, a bit of rhetorical vaccination? My response: don’t give up the ship!

Then I consider the unhappy relationship academic literary criticism has had with, well, you know, criticism, evaluation, by looking at passages from Northrop Frye and Wayne Booth. From there I offer a distinction between naturalist criticism and ethical criticism (by way of Bruno Latour’s pluralism), which echoes an older distinction between Wissenschaft and Bildung. Having made that distinction I take up the matter of computational linguistics and literary criticism, looking first at “classical” computational linguistics (CL), conceived as symbol processing, and then the newer statistical techniques of natural language processing (NLP). To understand the latter we must learn to think in terms of description. I conclude by considering the roles of CL and NLP in a hypothetical large-scale and long-term investigation of romantic love.

Let’s Set Critique Aside

It’s premature. If we go there now, things will not go well.

What do I mean?

Let us begin with this remark by Alan Liu, who is himself an advocate and practitioner of the digital humanities, a phrase whose reference, we should note, is broader than computational linguistics (CL) or natural language processing (NLP):

In the digital humanities, cultural criticism–in both its interpretive and advocacy modes–has been noticeably absent by comparison with the mainstream humanities or, even more strikingly, with “new media studies” (populated as the latter is by net critics, tactical media critics, hacktivists, and so on). We digital humanists develop tools, data, metadata, and archives critically; and we have also developed critical positions on the nature of such resources (e.g., disputing whether computational methods are best used for truth-finding or, as Lisa Samuels and Jerome McGann put it, “deformation”). But rarely do we extend the issues involved into the register of society, economics, politics, or culture in the vintage manner, for instance, of the Computer Professionals for Social Responsibility (CPSR). How the digital humanities advance, channel, or resist the great postindustrial, neoliberal, corporatist, and globalist flows of information-cum-capital, for instance, is a question rarely heard in the digital humanities associations, conferences, journals, and projects with which I am familiar. Not even the clichéd forms of such issues–e.g., “the digital divide,” “privacy,” “copyright,” and so on–get much play.

Liu is not the only one who has been making such observations. They are common enough. This is to be expected of a discipline interested in the way literary texts “reflect systems of power and oppression”, to invoke a phrase used by Hannah Alpert-Abrams and Dan Garrette in some recent remarks on computational linguistics (CL) and literary scholarship.

With all due respect for Professor Liu, let me be blunt: there is a serious issue addressed in his statement, but it is not addressed in an intellectually serious way. For example, the reference to “how the digital humanities advance, channel, or resist the great postindustrial, neoliberal, corporatist, and globalist flows of information-cum-capital,” is so formulaic that I cannot take it seriously. Such language has the feel of territorial marking – spritz a little neoliberal here, some information-cum-capital there: I hereby claim this territory in the name of Captain Critique, Master of the Postmodern Main – rather than of an opening for actual, you know, thinking. And, yes, I understand that Liu was making a program statement and not actually arguing a problematic, but my skepticism stands. There is too much such language in contemporary humanist discourse.

Later Liu asserts: “To my digital humanities colleagues in this room, let me say that, while we have the tools and the data, we will not even be in the same league as Moretti, Casanova, and others unless we can move seamlessly between text analysis and cultural analysis.” That may be so, but starting out by granting a congealed cluster of reified conceptualization – “postindustrial, neoliberal, corporatist, and globalist flows of information-cum-capital” – a regulative force in the inquiry pretty much dooms that inquiry to triviality.

To my colleagues actively engaged in research with conceptual tools from CL and NLP I would say: treat such remarks as tactical challenges. If you can do your work without having to answer such questions, then don’t bother. If for some reason you have to provide an answer (e.g. a dean wants to know), then do your best to tell your interlocutor what they want to hear without, however, compromising your own intellectual integrity too badly (think of all those physicists who have had to justify their research in terms of its contributions to building atomic weaponry). If such questions do in fact interest you as stated, well then, by all means have at it.

However, as a practical matter, Wissenschaft, the search for pure knowledge, can only be subordinate to Bildung, “a harmonization of the individual’s mind and heart and in a unification of selfhood and identity within the broader society” in Wikipedia’s formulation. What activity can possibly be more important than living one’s life well? Wissenschaft is but a tool that some may use in their own pursuit of a full life in their community.

If THAT’s what Liu was getting at, well then, of course, he’s got a point. But I think we must first come to terms with CL and NLP on their own terms, for that is the only way to understand them. And without understanding them, how can you possibly pass them under ethical scrutiny?

Oh, Those Meddlesome Value Judgments

Let us then set CL and NLP aside for a moment and think about literary criticism as a discipline. Why does this discipline proclaim itself to be a species of criticism when any actual critical judgment has been, at best, only a secondary or tertiary concern?

During my undergraduate years at Johns Hopkins in the late 1960s the issue came up a time or three in this or that class and the answer generally went something like this: “Well, my evaluation is implicit in my choice of texts. The actual articulation of value judgments is irrelevant to criticism.” In the introduction to his well-known Anatomy of Criticism (1957), which sought to define literary criticism as an autonomous field of study (Wissenschaft), Northrop Frye relegates the making of value judgments to the history of taste (p. 21):

Shakespeare, we say, was one of a group of English dramatists working around 1600, and also one of the great poets of the world. The first part of this is a statement of fact, the second a value-judgement so generally accepted as to pass for a statement of fact. But it is not a statement of fact. It remains a value judgement, and not a shred of systematic criticism can ever be attached to it.

The profession was happy to see in Frye’s statement a statement of its aims and desires, though, of course, the journalistic reviewers of books and movies did not hesitate to make aesthetic and ethical judgements about the works they reviewed. That was, after all, their job.

Almost 20 years later Frye repeated that sentiment in his essay, “The Responsibilities of the Critic”, in the Centennial Issue of MLN (Modern Language Notes), published in honor of the centenary of the founding of The Johns Hopkins University (where the journal was founded and where it is still edited). Frye says (p. 810, emphasis mine):

The word critic is connected with the word crisis, and all the critic’s scholarly routines revolve a critical moment and a critical act, which is always the same moment and act however often it recurs. This act, I have so often urged, is not an act of judgement but of recognition. If the critic is the judge, the community he represents is supreme in authority over the poet; all human creation must conform to the anxieties of human institutions. But if the critic abandons judgement for recognition, the act of recognition liberates something in human creative energy, and thereby helps to give the community the power to judge itself.

At this moment I am tempted, I must confess, to explicate the words surrounding the sentence I have emphasized, for they are important words, written by an important critic on what we must regard as a ceremonial occasion.

I urge you to re-read those words and ponder their implications, but I must move on to another senior critic of the highest distinction, the late Wayne Booth, and his book The Company We Keep: An Ethics of Fiction (1988). Booth uses “ethics” in a sense that includes aesthetics. He is interested in what makes literature good, in the beauty of the work if you will, and in the mode of life it urges upon us (think of ethos). This is from his first chapter, “Introduction: Ethical Criticism, a Banned Discipline?” (p. 5):

Many critics today will resist any effort to tie “art” to “life,” the “aesthetic” to the “practical.” Indeed, when I began this project I thought that ethical criticism was as unfashionable as most current theories would lead one to expect.

That’s more or less the view we have found in Frye. Booth continues, citing one of the major post-modern critics:

When I first read, three or four years along in my drafting, Fredric Jameson’s The Political Unconscious that the predominant mode of criticism in our time is the ethical (1981, 59), I thought he was just plain wrong. But as I have looked further, I have had to conclude that he is quite right. I’m thinking here not only of the various new overtly ethical and political challenges to “formalism” [there’s an essay in THAT word and what it means in this context]: by feminist critics asking embarrassing questions about a male-dominated literary canon and what it has done to the “consciousness” of both men and women; by black critics pursuing Paul Moses’s kind of question about racism in American classics; by neo-Marxists exploring class biases about racism in European literary traditions; by religious critics attacking modern literature for its “nihilism” or “atheism.”

Booth opens his next chapter, “Why Ethical Criticism Fell on Hard Times”, by observing (p. 25):

that though most people practice what I am calling ethical criticism of fictions, it plays at best a minor and often deplored role on the scene of theory. It simply goes unmentioned in most discussions on the scene of theory…Though anthologies of “literary criticism through the ages” obviously cannot avoid including great swatches of ethical evaluation in their selections, they disguise it, in effect, under other labels: “Political,” “Social,” or “Cultural” criticism; “Psychological” or “Psychoanalytic” criticism; and more recently terms like “Reader-Response Criticism,” “Feminist Criticism,” and “The Discourse of Power.”

That, I suspect, is where the profession remains today, 25 years after Booth published The Company We Keep, though we can now add queer studies, ecocriticism, and animal studies to Booth’s list of ethical criticisms.

The profession is also trying to figure out how to move forward. A return to evaluation is one of the topics that turned up repeatedly at the now defunct group literary blog, The Valve – A Literary Organ, where I had posting rights, and it shows up frequently in journalistic discussions as well. People outside the academy are interested in evaluation and there are people within the profession who believe that it was a mistake ever to have cut ourselves loose from evaluation.

It is clear that the ethical dimension of literature is important. To put it bluntly, we value literature exactly because it has an ethical dimension. Consider the position Kenneth Burke articulated in his essay, “Literature as Equipment for Living” from The Philosophy of Literary Form (1941). Using words and phrases from several definitions of the term "strategy" (in quotes in the following passage), he asserts that:

. . . surely, the most highly alembicated and sophisticated work of art, arising in complex civilizations, could be considered as designed to organize and command the army of one's thoughts and images, and to so organize them that one "imposes upon the enemy the time and place and conditions for fighting preferred by oneself." One seeks to "direct the larger movements and operations" in one's campaign of living. One "maneuvers," and the maneuvering is an "art."

Through the symbols and strategies of shared stories, members of a culture articulate their desires and feelings to one another, thereby making themselves mutually at home in the world. Surely professional participation in that activity is an honorable calling.

At the same time, ethical criticism has not been central to most of my practice as a critic. I want to figure out how literary texts work, how they work in the minds of individuals, how they circulate in society. But I’ve not been so interested in using them as vehicles for working out matters of beauty, morality, or social policy.

How, then, do I resolve these matters?

I don’t. There’s nothing to resolve. They are separate, if closely related, discourses.

That is, I accept ethical criticism as a fully legitimate intellectual pursuit. If it isn’t the pursuit that most interests me, that’s one thing. That’s my personal choice. But it is different from what I have come to call, in a recent series of posts (which I have collected as Literary Criticism 21: Academic Literary Study in a Pluralist World), naturalist criticism. While ethical and naturalist criticism may be directed at the same objects, they are different activities. They have different goals and different methods. Nothing is to be gained by continuing to confuse them or by continuing to practice ethical criticism without, as Booth says, explicitly saying that that’s what we are doing and subjecting that practice to theoretical elaboration and scrutiny.

It remains to be seen whether or not ethical criticism is simply a specialized version of Bildung and naturalist criticism a specialized version of Wissenschaft. I certainly didn’t have that distinction in mind when I was writing those posts. Rather, I was working through the object-oriented ontology of Graham Harman, and the pluralist metaphysics of Bruno Latour (e.g. We Have Never Been Modern, Politics of Nature, On the Modern Cult of the Factish Gods) and of the late Paul Feyerabend (Conquest of Abundance). Just why and how I did that is too much to pack into a paragraph or two in this essay. The practical result is that I believe academic literary studies must split in two, conceptually. Institutionally, that’s a whole different story, but I do note that, institutionally, literary studies often supports itself by staffing writing courses, and THAT has not been a particularly happy marriage.

The question of the relation between literary scholarship, on the one hand, and CL and NLP, on the other, becomes primarily a question for naturalist criticism, not ethical criticism. The ethical critic is, of course, free to draw on any evidence he or she wishes, but the most pressing issues raised by CL and NLP are matters of intellectual craft and epistemology. What are we doing, and how does it work?

CL and NLP in Naturalist Criticism

My own journey on the Good Ship Computation began in my undergraduate years at Johns Hopkins. I did in fact take a course in computer programming – one of the first ever offered at an American university – but my introduction to Chomskian thought under James Deese, a psycholinguist, was probably more important. I was prompted to book my ticket by a very specific problem in literary analysis.

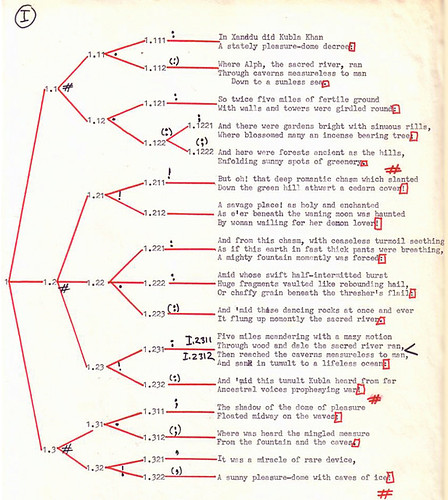

In the course of a MA thesis in the Humanities Center at Hopkins I’d discovered that Coleridge’s “Kubla Khan” had a remarkable structure. Here’s a diagram for the first 36 lines:

Notice what appears to be a nested structure (branch points with three subordinates); the last 18 lines have a similar form. What’s that about? I wanted to know. But it looked like a problem for the cognitive sciences. It just smelled like computation.

When I went off to Buffalo in the autumn of 1973 to get a degree in English, I was going through a well-worn intellectual pipeline between Johns Hopkins and SUNY Buffalo. The Buffalo program was intellectually adventurous, but I had no idea whether I’d really be able to work on the cognitive sciences.

Luck was with me. A fellow graduate student, Ralph Henry Reese, had been studying with David Hays in linguistics and introduced me to him in the Spring of 1974. We got along well. I became his student and eventually we published a series of articles together.

As some of you know, Hays headed the RAND Corporation’s research on machine translation back in the 1950s and 1960s and, as such, was one of the founders of the discipline of computational linguistics. He left RAND in 1969 to become the founding chair of the Linguistics Department at SUNY Buffalo (when it separated from Anthropology, I believe). He had a small research group in the Linguistics Department, which I joined.

By that time he’d become focused on semantics, using a cognitive net notation of the sort pioneered, I believe, by Ross Quillian, though he’d also been influenced by the stratificational work of Sydney Lamb, who was a personal friend. When I joined the group Brian Phillips was finishing a dissertation on discourse analysis (of drowning man stories) and Mary White was completing a dissertation in which she mapped the cognitive structures of a millenarian community.

I went to work on poetry. I wrote a long paper in which I looked at Great Chain of Being imagery in two or three passages in William Carlos Williams’s Paterson, Book V; I also submitted that paper in Charlie Altieri’s modern poetry course. Then I went to work on Shakespeare’s Sonnet 129, “Th’ expense of Spirit.” I wrote a dense and moderately technical article, Cognitive Networks and Literary Semantics, which I published in 1976 in that same special issue of MLN that opened with Frye’s “The Responsibilities of the Critic”. Stanley Fish, Edward Said, and Walter Benn Michaels also had articles in that issue.

While I am certainly proud to have been placed in such distinguished company, in the context of this post there is a much more important point to be made. And that is simply that an article, much of which was a quasi-technical exercise in knowledge representation, appeared in a literary criticism journal edited at the school, and by the people, that a decade before had brought Derrida, Lacan, Barthes, Todorov and others to the (in)famous 1966 structuralist symposium, The Languages of Criticism and the Sciences of Man. In the mid-1970s things were up in the air in literary criticism – Western metaphysics, after all, was going up in smoke – and everything seemed possible.

Even cognitive science.

In literary criticism.

When I wrote that piece I thought I was bringing new and wonderful conceptual tools to the attention of literary scholars. It was to be a while before I realized that no one was interested in those particular tools. I went on to write a dissertation, Cognitive Science and Literary Theory (1978). It had several chapters in which I built up my cognitive model, based on Hays’s work, then a long chapter on narrative form, followed by one on poetics, and a concluding chapter in which I undertook a somewhat more sophisticated analysis of “Th’ expense of Spirit” than I had in that 1976 article. Notice, though, that my dissertation said nothing about “Kubla Khan”, which is the poem I’d wanted to work on. I couldn’t make it work, and so I had to be satisfied with the Shakespeare sonnet.

Back then I was imagining that, in time, techniques would be developed that worked on Coleridge, and on other literary texts. I was imagining that a few other literary critics would pick up on the cognitive sciences and we’d set to work modeling the mental processes at work while reading literary texts (see Benzon and Hays, Computational Linguistics for the Humanist, Computers and the Humanities, Vol. 10, pp. 265-274, 1976). For that’s what models meant in that era: symbolic computation. Some researchers were interested in models that could be justified through empirical evidence about human cognition while others were simply interested in models that worked on real computers in real time.

By the mid-1980s that work had collapsed. The knowledge models were too complex, requiring hours of hand coding and consuming too many computing resources (CPU cycles and memory) at runtime. Commercial funding of AI start-ups based on symbolic processing technology dried up and there was talk of an AI Winter. At roughly the same time J. Hillis Miller was mourning the demise of deconstruction in literary criticism. In his “Presidential Address 1986. The Triumph of Theory, the Resistance to Reading, and the Question of the Material Base” (PMLA 1987, 102, 281-91) he lamented the “almost universal turn away from theory in the sense of an orientation toward language as such” (283).

Can you imagine that? Deconstruction, dead, way back in 1986! If the term is still with us that is because, on the one hand, the practice wasn’t quite THAT dead, but on the other hand, the term’s meaning has become degraded, as has the meaning of, for example, “algorithm”.

But if deconstruction was losing its influence, that is in part because it had paved the way for other more overtly critical approaches. Feminist, African-American, and post-colonial approaches assumed increasing importance. And the so-called New Historicism took hold as well.

Meanwhile, symbolic approaches in computational linguistics and artificial intelligence were giving way to a variety of statistical approaches. Those approaches achieved the kind of practical success, and commercial profitability, that had eluded symbolic processing. This success was in part a matter of having more computer power available, but only in part. The power would have been useless without the development of techniques that could use it.

It is those techniques that have become known as natural language processing (NLP). These are the techniques that are kicking up the dust in digital humanities. Whereas the techniques of symbolic processing were motivated, at least in part, by the desire to simulate the human mind (that was Hays’s primary motivation by the time I began working with him), NLP methods are motivated primarily by a desire to achieve a practical result.

Thus the literary program I had imagined in my dissertation days could not be realized through these techniques, though they’ve revolutionized computational linguistics. For that matter, the program I’d imagined back then couldn’t be realized by any program I’d be willing to extrapolate from current technology. Things change; what had been thought possible at one time may drop below the horizon. That program is dead.

I do not, however, think those old-style symbolic computational models are useless, not at all, a matter I’ll return to in the next section. But I don’t think we’ll be constructing any such models so that we can simulate the reading process. Those who want to get at that will have to do so some other, rather more arduous, way. But that discussion – how to understand what happens when one reads – would be a diversion from the business at hand.

What current NLP technology CAN do is provide new kinds of descriptive objects and those descriptions give literary scholars . . . . Just what exactly? A new kind of access to large-scale literary phenomena?

NLP as a Descriptive Tool

Consider topic modeling, a relatively new technique introduced about a decade ago, which I learned about while coming to terms with a substantial post by Andrew Goldstone and Ted Underwood, “What can topic models of PMLA teach us about the history of literary scholarship?” In topic modeling, documents are treated as bags of words. We don’t care about word order, just that those words co-occur in a text. A topic in this sense is simply a collection of words that tend to occur together across many documents in a collection.
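The bag-of-words idea can be made concrete in a few lines of Python. This is a minimal sketch, not Goldstone and Underwood's code: the helper `bag_of_words` is hypothetical, and its crude tokenizer (runs of lowercase letters only) is an assumption chosen for brevity.

```python
import re
from collections import Counter


def bag_of_words(text, stop_words=frozenset()):
    """Reduce a text to word counts, discarding word order entirely.

    Hypothetical helper for illustration: the regular expression keeps only
    runs of lowercase letters, a deliberately crude tokenizer.
    """
    tokens = re.findall(r"[a-z]+", text.lower())
    return Counter(token for token in tokens if token not in stop_words)


# Two sentences with the same words in a different order yield the same bag.
first = bag_of_words("The pattern gives the poem its unity.")
second = bag_of_words("Its unity the poem gives the pattern.")
print(first == second)  # True

# Stop words are simply dropped before counting.
print(bag_of_words("The pattern gives the poem its unity.", stop_words={"the", "its"}))
```

Word order is gone; only the counts remain, and that is all a topic model ever sees of a document.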

Topic modeling uses a statistical technique to infer such topics in a collection. The researcher specifies how many topics to construct, designates stop words (words to be excluded from the analysis), and then lets the computer crunch away. In one run Goldstone and Underwood asked for 150 topics; in another they asked for 100 topics. When the process has settled down the computer presents a list of topics, where a topic is simply a list of words that have been found to co-occur in the corpus. In the 150-topic run on PMLA, topic 109 is associated with these words: structure, pattern, form, unity, order, structural, whole, central. That’s all the computer coughs up, a list of words and a number to designate that list. It is up to the researchers to interpret that topic as, well, a meaningful topic within literary scholarship over the last century.
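To make the procedure less of a black box, here is a toy version in Python of latent Dirichlet allocation (LDA), fitted by collapsed Gibbs sampling. It is a minimal sketch under stated assumptions, not the software Goldstone and Underwood used: the function name `lda_gibbs`, the hyperparameters `alpha` and `beta`, the iteration count, and the four-line corpus are all hypothetical choices for illustration. As in the description above, the researcher supplies the number of topics and the stop words; the sampler returns numbered lists of words and nothing more.

```python
import random
from collections import defaultdict


def lda_gibbs(docs, num_topics, stop_words=frozenset(), iterations=200,
              alpha=0.1, beta=0.01, seed=0):
    """Toy latent Dirichlet allocation fitted by collapsed Gibbs sampling.

    docs is a list of token lists (hypothetical interface). Returns one word
    list per topic, ordered by how many tokens of each word were assigned to
    that topic; the list index serves as the topic's number.
    """
    rng = random.Random(seed)
    docs = [[w for w in doc if w not in stop_words] for doc in docs]
    vocab_size = len({w for doc in docs for w in doc})

    # Count tables: topics per document, words per topic, tokens per topic.
    doc_topic = [[0] * num_topics for _ in docs]
    topic_word = [defaultdict(int) for _ in range(num_topics)]
    topic_total = [0] * num_topics

    # Start by assigning every token to a random topic.
    assignments = []
    for d, doc in enumerate(docs):
        doc_assign = []
        for w in doc:
            k = rng.randrange(num_topics)
            doc_assign.append(k)
            doc_topic[d][k] += 1
            topic_word[k][w] += 1
            topic_total[k] += 1
        assignments.append(doc_assign)

    for _ in range(iterations):
        for d, doc in enumerate(docs):
            for i, w in enumerate(doc):
                # Take this token out of the counts...
                k = assignments[d][i]
                doc_topic[d][k] -= 1
                topic_word[k][w] -= 1
                topic_total[k] -= 1
                # ...then resample its topic in proportion to (how common each
                # topic is in this document) x (this word's share of the topic).
                weights = [
                    (doc_topic[d][j] + alpha)
                    * (topic_word[j][w] + beta)
                    / (topic_total[j] + vocab_size * beta)
                    for j in range(num_topics)
                ]
                k = rng.choices(range(num_topics), weights=weights)[0]
                assignments[d][i] = k
                doc_topic[d][k] += 1
                topic_word[k][w] += 1
                topic_total[k] += 1

    return [
        sorted((w for w, c in topic_word[k].items() if c > 0),
               key=lambda w: (-topic_word[k][w], w))
        for k in range(num_topics)
    ]


# Hypothetical toy corpus mixing "structural" and "amatory" vocabulary.
corpus = [
    "structure pattern form unity order whole",
    "love desire heart beloved longing",
    "the structure and the form of the whole",
    "the heart of love and the longing of desire",
]
docs = [text.split() for text in corpus]
topics = lda_gibbs(docs, num_topics=2, stop_words={"the", "and", "of"})
for number, words in enumerate(topics):
    print(number, words)
```

On a corpus this small the sampler may or may not cleanly separate the two vocabularies. With a real corpus such as PMLA the lists are far longer, but what comes out is still only a number and a list of words, which the researcher must interpret.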

The question that interests me is this: when you’ve done a topic analysis, just what is it that you’ve got? The answer is by no means obvious and I find that question far more pressing, and interesting, than figuring out what role Latent Dirichlet allocation is playing in “the great postindustrial, neoliberal, corporatist, and globalist flows of information-cum-capital.” My provisional answer is that a topic model for a corpus is a description, but a description of what?

And just what, anyway, is a description? That’s a question I’ve been thinking and blogging about quite a bit. I was prompted to it not by pressure from NLP but by my own work, such as that structure tree for “Kubla Khan” we’ve already glanced at. The relationship between that tree and Coleridge’s text seems to me to be transparent and objective. The text really IS like that. That’s not something that I, as critic, am imposing on the text from out of the tender and idiosyncratic depths of my own consciousness, as some critics have suggested to me. Coleridge put that there, though he didn’t realize he was doing so, and we as critics have to come to terms with it. But discussing THAT would be another diversion. I introduce it simply as an example of the sort of thing I have in mind when I talk about description in the context of literary texts.

Alas, the work of description has been badly under-theorized in discussions of critical method (my initial thoughts are HERE). Furthermore, as far as I can tell, description has been generally neglected, though Brian Ogilvie’s The Science of Describing: Natural History in Renaissance Europe is an exception. Until literary criticism has located and theorized the descriptive tactics within its own practice, there is no point of contact between current critical practice and the new NLP techniques, which are, I will argue, primarily descriptive.

Let’s consider two somewhat different examples of description, one from linguistics, and the other from biology.

In Aspects of the Theory of Syntax (1965, pp. 18–27) Noam Chomsky distinguished between grammars that are descriptively adequate and those that meet the requirements of explanatory adequacy. In this context I don’t care what the requirements were in either case; all that interests me is that Chomsky characterized a grammar as a description of a language. In what sense does a grammar describe a language? For that matter, just what does it mean to describe a language? Do you list all the words? How do you do that when new words are being coined all the time and other words are dropping from use? However you deal with that problem, any convention you come up with is obviously not sufficient to the task, as there’s more to language than the words. Words are used in conjunction with other words. Some combinations seem acceptable, others not. How do you characterize that difference? In particular, as the number of acceptable utterances is infinite, how is it possible to provide a finite description of that infinite collection?
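The question of a finite description for an infinite collection can be illustrated with a toy grammar. This is a hypothetical two-rule example written in Python, not Chomsky's formalism from Aspects: one rule is recursive, so a finite set of rules licenses an unbounded number of sentences, and the same rules decide whether any given string belongs to the collection.

```python
# Hypothetical toy grammar, for illustration only:
#   S  -> NP "sleeps"
#   NP -> "the cat" | "the friend of" NP    (the second option is recursive)

NP_BASE = "the cat"
NP_PREFIX = "the friend of "
VERB = " sleeps"


def noun_phrases(depth):
    """Noun phrases built with at most `depth` uses of the recursive rule."""
    if depth == 0:
        return [NP_BASE]
    return [NP_BASE] + [NP_PREFIX + np for np in noun_phrases(depth - 1)]


def sentences(depth):
    """Sentences generated by S -> NP "sleeps" up to the given depth."""
    return [np + VERB for np in noun_phrases(depth)]


def is_np(phrase):
    """Recognize a noun phrase by peeling off recursive prefixes."""
    if phrase == NP_BASE:
        return True
    return phrase.startswith(NP_PREFIX) and is_np(phrase[len(NP_PREFIX):])


def is_sentence(text):
    """Decide membership in the (infinite) set of sentences."""
    return text.endswith(VERB) and is_np(text[: -len(VERB)])


for s in sentences(2):
    print(s)
# the cat sleeps
# the friend of the cat sleeps
# the friend of the friend of the cat sleeps
```

Raising `depth` yields ever longer sentences without adding a single rule; real grammars face the far harder problem of deciding which rules a language actually has.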

That’s the problem Chomsky grappled with, and a huge literature has grown up around it, a literature fraught with controversy. Though I have opinions on some of those matters, those opinions are irrelevant here. My point is a simple one: the task of merely describing a language is an extremely difficult and problematic one.

Let’s consider a different example: the double-helix model of the DNA molecule. What is “double helix” but a description? What is the shape of a DNA molecule? Why, a double helix.

The paper in which Watson and Crick published that description was a short one (only two pages as a PDF), and it is one of the most important papers in 20th Century science. And yet it is a description, a mere description.

The process by which they arrived at that description, however, was neither simple nor direct. It depended on x-ray crystallography, in which you beam x-rays through crystallized DNA and onto a photographic emulsion. The emulsion is developed, yielding images (technically, fibre diagrams, see explanation HERE) like those in an article that accompanied the seminal Watson and Crick paper. Such an image doesn’t look anything like a double helix.

So where’d the double helix come from? Watson and Crick made it up. Not out of whole cloth, but they made it up. Knowing the properties of x-rays, they inferred that those kinds of smudges would be produced if the DNA crystal had the form of a double helix.

* * * * *

What then is a topic model? I’ve said it is a description. But at the moment I do not think it is a description of a body of texts. Rather, it is a description of what those texts are about. That is, in topic modeling the texts themselves play a role analogous to the fibre diagrams generated in x-ray crystallography. Actually, it is NOT the texts themselves that play that role; rather it is the bags of words obtained from the texts that play that role. A given topic analysis would then stand in relation to those bags of words as the double-helix model stands in relation to the fibre diagrams.

[I wasn’t aiming for that when I started drafting this post and so it comes as something of a surprise. I find it interesting. But I haven’t yet had time to mull it over. One thing to ponder: what holds words together into topics are structures of the kind investigated under the aegis of symbolic computation, the kinds of structures I investigated with David Hays years ago.]

And just what is this thing that those texts are about? If all the texts were written by a single individual, we could say that that thing is that individual’s mind. But generally we’re working with texts produced by many individuals, often over a period of decades. Furthermore, those texts were read by many more individuals. A topic analysis would thus seem to be some kind of collective mind, perhaps even the collective mind behind a Foucaultian épistème.

And with the introduction of that phrase, “collective mind”, we’ve entered strange territory. Are we talking about some kind of Jungian collective unconscious, or a Zeitgeist? Maybe. Who knows? Talking about collective mentation is a tricky business, one prone to mysteries, mysticism, and mystification. We can get lost doing it. But we have no choice but to do it somehow.

Well, whatever that thing is, however we conceptualize it, topic modeling, and NLP more generally, give us specific means of manipulating and describing it. I am thus reminded of a well-known passage in the text that put Claude Lévi-Strauss on the intellectual map, his 1955 travelogue and intellectual biography, Tristes Tropiques. The passage is from Chapter 6, “The Making of an Anthropologist” (Atheneum, 1974, p. 57):

When I became acquainted with Freud’s theories, I quite naturally looked upon them as the application, to the individual human being, of a method the basic pattern of which is represented by geology. In both cases, the researcher, to begin with, finds himself faced with seemingly impenetrable phenomena; in both cases, in order to take stock of, and gauge, the elements of a complex situation, he must display subtle qualities, such as sensitivity, intuition and taste. And yet, the order which is thus introduced into a seemingly incoherent mass is neither contingent nor arbitrary. Unlike the history of the historians, that of the geologist is similar to the history of the psychoanalyst in that it tries to project in time – rather in the manner of a tableau vivant – certain basic characteristics of the physical or mental universe . . . Following Rousseau, and in what I consider to be a definitive manner, Marx established that social science is no more founded on the basis of events than physics is founded on sense data: the object is to construct a model and to study its property [sic] and its different reactions in laboratory conditions in order later to apply the observations to the interpretation of empirical happenings, which may be far removed from what had been forecast.

Note three things. First, the references to Freud and Marx. These would become the go-to theorists of Continent-influenced literary study in the latter half of the 20th Century, though, of course, their ideas would be refracted through, reflected from, and combined with those of other thinkers, earlier and later. Second, note the geological metaphor, and the stratigraphy implicit in it. Lévi-Strauss had set that up in a previous paragraph (p. 56): “A pale blurred line, or an often almost imperceptible difference in the shape and consistency of rock fragments, are evidence of the fact that two oceans once succeeded each other where, today, I can see nothing but barren soil.” Third, “construct a model”, yes; but just how do we study history’s “different reactions in laboratory conditions”?

Well, we can’t, not quite. But since Lévi-Strauss wrote that passage, computer scientists and others have developed many sophisticated tools for simulating phenomena such as atomic reactions (the problem that paid for much of the early developmental work in computing), weather, traffic behavior, and even the evolution of biological species. While I suppose that I could conjecture that someday we might be able to set up a simulation of world history since, say, 1000 CE, and twiddle the dials to see under what conditions the novel arises and how it evolves from there, I’m not going to do that, though I’m sure that you can find such proposals tucked away in some science fiction somewhere.

I wish only to note a crude family resemblance between Lévi-Strauss’s dream of constructing “a model and to study its property [sic] and its different reactions in laboratory conditions” and what you do when you run a topic model. You twiddle the dials to see how different settings affect the results. Much of what the investigator learns comes simply from playing with the model.
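What “twiddling the dials” amounts to can be sketched with latent Dirichlet allocation (LDA), one widely used kind of topic model, fitted here by collapsed Gibbs sampling. The post does not say which model or software is in view, so treat this as an illustrative pure-Python sketch, not anyone’s actual pipeline; the function names, the toy documents, and the default settings are all assumptions. The dials are the number of topics `k`, the smoothing priors `alpha` and `beta`, the number of sampling `iterations`, and the random `seed`.

```python
import random
from collections import defaultdict


def lda_gibbs(docs, k, alpha=0.1, beta=0.01, iterations=200, seed=0):
    """Minimal collapsed Gibbs sampler for LDA (illustrative sketch).

    docs: list of token lists. k, alpha, beta, iterations and seed are the
    'dials'. Returns k dicts mapping each word to its probability in a topic.
    """
    rng = random.Random(seed)
    vocab = sorted({w for d in docs for w in d})
    v = len(vocab)
    doc_topic = [[0] * k for _ in docs]                 # tokens per (doc, topic)
    topic_word = [defaultdict(int) for _ in range(k)]   # tokens per (topic, word)
    topic_total = [0] * k                               # tokens per topic
    assignments = []

    # Start by giving every token a random topic.
    for d, doc in enumerate(docs):
        z_doc = []
        for w in doc:
            t = rng.randrange(k)
            z_doc.append(t)
            doc_topic[d][t] += 1
            topic_word[t][w] += 1
            topic_total[t] += 1
        assignments.append(z_doc)

    for _ in range(iterations):
        for d, doc in enumerate(docs):
            for i, w in enumerate(doc):
                # Take this token out of the counts ...
                t = assignments[d][i]
                doc_topic[d][t] -= 1
                topic_word[t][w] -= 1
                topic_total[t] -= 1
                # ... weigh each topic by p(topic | doc) * p(word | topic) ...
                weights = [
                    (doc_topic[d][j] + alpha)
                    * (topic_word[j][w] + beta) / (topic_total[j] + v * beta)
                    for j in range(k)
                ]
                # ... and resample its topic in proportion to those weights.
                t = rng.choices(range(k), weights=weights)[0]
                assignments[d][i] = t
                doc_topic[d][t] += 1
                topic_word[t][w] += 1
                topic_total[t] += 1

    # Smoothed topic-word distributions; each sums to 1 over the vocabulary.
    return [
        {w: (topic_word[t][w] + beta) / (topic_total[t] + v * beta) for w in vocab}
        for t in range(k)
    ]


def top_words(topics, n=3):
    """The n most probable words in each topic."""
    return [sorted(t, key=t.get, reverse=True)[:n] for t in topics]


# Invented toy corpus, already reduced to token lists.
docs = [
    "love lady knight love heart".split(),
    "heart love lady sigh love".split(),
    "grain harvest field plough grain".split(),
    "field grain harvest rain plough".split(),
]
for k in (2, 3):  # twiddle one dial: the number of topics
    print(k, top_words(lda_gibbs(docs, k=k, seed=1)))
```

Rerunning with a different `k`, `alpha`, or `seed` and comparing the top words is, in miniature, the kind of play with the model described above; on a corpus this tiny the groupings may well shift from one setting to the next.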

Romantic Love: Cultural Invention or Biological Universal?

Let us consider what we might do using NLP techniques over the long term and in the large.

When I was an undergraduate at Johns Hopkins I took a course in medieval literature taught by the late Donald Howard. In that course I learned that romantic love had been invented as courtly love in the 12th Century in Southern France. I was stunned, for I’d thought that romantic love – one and only forever and ever – was inherent in human nature. That’s how WE are. The idea that it had been invented was simply astounding to me.

Considerable scholarship has been devoted to the study of romantic love, its history, ideology, and its role in the development of the family. But there has also been pushback, as they say. There have been large-scale cross-cultural studies indicating that romantic love is in fact quite widespread, if not universal, and so hardly an artifact of Western culture. Some literary Darwinists have taken this universalist line while snickering up their sleeves at the quaint notions of postmodernist humanistic scholarship. I’ve sketched out a very little of this in a post at New Savanna, Romantic Love, Conversation, Biology, and Culture, and so I won’t go through that here.

I think there’s a real dispute here, and it’s one that mixes mere matters of definition with matters of substance. Those cross-cultural studies that find romantic love everywhere are working from a definition that goes something like this: romantic love is a strong sexualized relationship between a man and a woman that may, and often or ideally does, lead to marriage. That, of course, excludes courtly love, the modern re-discovery of which started this whole conversation, for courtly love is explicitly adulterous. And what of poor Dante Alighieri, who met Beatrice Portinari only twice but devoted his imaginative life to her? On the other hand, if we cut our definition so as to fit the medieval European case, it will be too specific to shed much light on the romantic predicaments of Jane Austen’s heroines.

Before we go on, let me note that my choice of romantic love as my set-piece example cannot possibly be justified by an appeal to Wissenschaft. That choice was ethical through and through. I chose it because it is all but self-evident to me that it is a very important topic, not to the universe, but to us. It speaks to our nature or, if you will, our natures: What’s the mix of biology and culture? Shelley famously asserted that “poets are the unacknowledged legislators of the world.” Is romantic love an example of their legislative guile and prowess? Does literature, mere words, have that kind of power? Does culture exert its own pressure on history rather than being a mere reflection of the (by now in intellectual history, exceedingly nebulous) forces of production?

[As an aside I note that, in these terms, the biologist’s choice of which organisms to investigate from among the millions of identified species is an ethical one.]

As I conceive it, there’s a lot riding on this line of investigation. How can NLP contribute to it? In particular, does NLP have a contribution that’s both necessary and impossible through other means?

I don’t really know. But here are my crude first thoughts.

First, much of the historical record consists of documents, and no artifacts speak more directly to what’s going on in people’s minds than texts, texts of all kinds. The literary arguments, however, have been based on a relatively small and highly selected set of documents, the so-called canonical texts of Western literature. The techniques of NLP give us access to a much wider range of texts – which is one of Moretti’s points about “distant” reading.

To access those texts we must, of course, find textual indices of romantic love, indices that NLP techniques can identify, classify, count, and summarize. What are they? How, for example, would we instruct or train a computer to tell the difference between an adulterous relationship and a marital one? It’s an important question for which I can’t, at the moment, see my way to a ready answer, though others might.

But there are two angles on the general problem. On the one hand we can program computers to look for certain things. But we can also send them on an open-ended stroll through the texts and see what they come up with. That’s what topic analysis does. Are any topics reasonably taken as indices of romantic love? That’s obviously an interpretive call.

Assuming, then, that we can find it, I’d be interested in tracking romantic love in various categories of texts. Does it begin appearing in certain kinds of texts before it shows up in others? If so, are there plausible reasons why that would be the case? What categories should we investigate? On principle I’d be interested in the relationships between the canonical texts and the rest.

But I’d also be very much interested in the relationship between literary texts and non-literary texts of all kinds: histories, biographies, newspaper and magazine stories, legal records (births, marriages, divorces), diaries, and personal letters. Do accounts in letters and diaries lead or lag accounts in literary texts? If they lag, that’s evidence that practice is following fiction, not leading it. That’s the kind of evidence we need to bear Shelley out.
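Once some index has been chosen, the lead/lag question can be posed as a simple computation. The Python sketch below assumes, hypothetically, that a small set of marker words could serve as such an index (the post explicitly leaves open what the real indices would be) and that each document arrives tagged with a category and a year. For each category it reports the first year in which the pooled rate of marker words reaches a threshold. The names `MARKERS`, `marker_rate`, and `first_year_over` are illustrative, and the records are invented toy data, not findings.

```python
from collections import defaultdict

# Hypothetical marker vocabulary, a placeholder for real indices of romantic love.
MARKERS = {"beloved", "sweetheart", "adore"}


def marker_rate(tokens):
    """Fraction of tokens that are marker words (0.0 for an empty list)."""
    return sum(t in MARKERS for t in tokens) / len(tokens) if tokens else 0.0


def first_year_over(records, threshold):
    """records: iterable of (category, year, tokens).

    For each category, return the earliest year whose pooled marker rate
    reaches the threshold, or None if it never does.
    """
    pooled = defaultdict(lambda: defaultdict(list))  # category -> year -> tokens
    for category, year, tokens in records:
        pooled[category][year].extend(tokens)
    result = {}
    for category, by_year in pooled.items():
        hits = [y for y in sorted(by_year) if marker_rate(by_year[y]) >= threshold]
        result[category] = hits[0] if hits else None
    return result


# Invented toy records, purely for illustration.
records = [
    ("novel", 1740, "my beloved wrote again".split()),
    ("novel", 1760, "the harvest was poor".split()),
    ("letter", 1740, "the harvest was poor".split()),
    ("letter", 1770, "my beloved sweetheart came".split()),
]
print(first_year_over(records, threshold=0.2))  # {'novel': 1740, 'letter': 1770}
```

In this toy case letters cross the threshold thirty years after novels, which in the terms above would count as practice following fiction. A real study would need far better indices, some smoothing over sparse years, and a way of handling the very uneven amounts of surviving text in each category.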

Of course, once we start looking at letters, diaries, and even legal records, we encounter another problem: handwriting. Recognizing characters in printed documents has become a routine process, though it is by no means a perfect one. As far as I know, handwriting recognition is a computational nightmare. Still, the problem is an interesting one, and important for a variety of practical tasks, and so things will get better. But I have no idea when or even if things will get so much better that every existing handwritten document will be digitized and transcribed into machine-readable texts.

But that’s a digression. Let’s get back to the main line.

While we’re digitally trolling through piles of texts, literary and non-literary, one thing I’d want to look for are patterns of the sort that Stephen Greenblatt works with. As I explain in a recent post, Notes Toward a Naturalist Cultural History:

Now let us consider an exemplary New Historicist critic, Stephen Greenblatt. In his collection, Learning to Curse, there’s a chapter, “The Cultivation of Anxiety: King Lear and His Heirs”. It opens with a passage from an 1831 article on child rearing by one Rev. Francis Wayland and goes on to explore how “Wayland’s struggle is a strategy of intense familial love, and it is the sophisticated product of a long historical process whose roots lie at least partly in early modern England, the England of Shakespeare’s King Lear” (p. 82). Later on, in a chapter entitled “Psychoanalysis and Renaissance Culture”, he contrasts the conception of the self implicit in the story of Martin Guerre in 16th Century France with the conception of the self implicit in Freud’s psychoanalytic theorizing. Those conceptions are very different.

In both of those cases there is a difference between two historical situations that seems to me to be similar to the difference between the Saxo Grammaticus story of Amleth and Shakespeare’s later story about Hamlet. In all cases we have two historical situations such that one came before the other and the later one is in the same historical ‘stream’ as the earlier. That ordering is not, however, contingent in the sense that the reverse order is equally possible. Rather, that ordering is necessary; the second moment could only have come after the first because it builds on mental structures that were in place in that earlier moment.

It’s that kind of directionality that interests me. Is it really there? If so, how do we explain it?

My preferred explanation is indicated in that last quoted sentence. I am a constructionist from the time when the concept was associated with the developmental psychology of Jean Piaget, who argued that later moments in the child’s intellectual development involve the creation of new mental structures over and from older ones; perhaps, in the spirit of Bruno Latour, we might talk of composition: composing new mental structures. That’s what I argue in The Evolution of Narrative and the Self, which I published in the Journal of Social and Evolutionary Systems (1993, Vol. 16, No. 2, pp. 129-155). That argument, in turn, was based on my 1978 dissertation, Cognitive Science and Literary Theory.

As I explained above, when I wrote that dissertation I was deep in enemy/friendly (the choice of adjective depends on where you now stand) territory. Much of that dissertation was a quasi-technical exercise in knowledge representation where computation isn’t simply a bunch of techniques we use to troll through piles of raw data (texts) but rather becomes the phenomenon under investigation. How does the mind work? What are its computational mechanisms?

What, I hear someone objecting, aren’t you assuming a lot when you assert computational mechanisms? Yes, I am, but just what I’m assuming, that’s not at all obvious. For the nature of computation itself is by no means clear. Maybe we’re talking about a dynamical system of the sort modeled by Berkeley neurobiologist Walter Freeman and many others, or maybe it’s symbolic computation implemented in a dynamical system. Maybe we’ve got a three-level system, as argued by Peter Gärdenfors (Conceptual Spaces, 2000, p. 253):

On the symbolic level, searching, matching, of symbol strings, and rule following are central. On the subconceptual level, pattern recognition, pattern transformation, and dynamic adaptation of values are some examples of typical computational processes. And on the intermediate conceptual level, vector calculations, coordinate transformations, as well as other geometrical operations are in focus. Of course, one type of calculation can be simulated by one of the others (for example, by symbolic methods on a Turing machine). A point that is often forgotten, however, is that the simulations will, in general, be computationally more complex than the process that is simulated.

Who knows?

My point is simply that some kinds of mechanisms are at work in minds, and the cognitive sciences have accumulated several decades’ worth of serious investigation into those mechanisms. If, for example, the Foucaultian épistème is more than a phantasm in the minds of post-structuralist thinkers, then it must have some kinds of operative mechanisms. Computational linguistics, in the “classical” sense of symbol processing, has something to say about how such mechanisms might work. And natural language processing, in the sense of statistical techniques for exploring large bodies of texts, provides techniques for identifying them “in the wild”. Taken together these two bodies of intellectual technique and investigative craft give us new tools for investigating the problematic of romantic love.

Evolutionary psychologists and their literary kin rightly insist that we are biological creatures and that we must understand that biological heritage if we are to understand, well, in this case, literature. But consider E. O. Wilson’s suggestion that “genes hold culture on a leash”, a formulation that begs for a deconstructive reading (real deconstruction, not hack work) that explicates it as an inversion of Plato’s allegory of the charioteer. My own preferred metaphor is very different. Consider a game, such as chess. Biology provides the board, the pieces, and the basic rules. The strategy and tactics are provided by culture.

That’s a very different metaphor, one, I warrant, that leaves ample room for literary texts to reshape our feelings and desires, gradually, through many readings, by many people, over decades and centuries.

And what, you might ask, do I think will be the upshot of this enterprise, if it ever transpires? I expect it to change the terms of discussion. Beyond that I do not care to venture.

Further Reading

The Key to the Treasure IS the Treasure

A short post at New Savanna in which I suggest that literary studies in the 21st Century should have four components: 1) Description, 2) Naturalist Criticism, 3) Ethical Criticism, and 4) Digital Humanities.

Corpus Linguistics for the Humanist: Notes of an Old Hand on Encountering New Tech

Abstract: Corpus linguistics offers literary scholars a way of investigating large bodies of texts, but these tools require new modes of thinking. Literary scholars will have to recover a kind of interest in linguistics that was lost when the discipline abandoned philology. Scholars will need to think statistically and will have to start thinking about cultural evolution in all but Darwinian terms. This working paper develops these ideas in the context of a topic analysis of PMLA undertaken by Ted Underwood and Andrew Goldstone.

Literary Criticism 21: Academic Literary Study in a Pluralist World

Abstract: At the most abstract philosophical level the cosmos is best conceptualized as containing various Realms of Being interacting with one another. Each Realm contains a broad class of objects sharing the same general body of processes and laws. In such a conception the human world consists of many different Realms of Being, with more emerging as human cultures become more sophisticated and internally differentiated. Common Sense knowledge forms one Realm while Literary experience is another. Being immersed in a literary work is not at all the same as going about one's daily life. Formal Literary Criticism is yet another Realm, distinct from both Common Sense and Literary Experience. Literary Criticism is in the process of differentiating into two different Realms, that of Ethical Criticism, concerned with matters of value, and that of Naturalist Criticism, concerned with the objective study of psychological, social, and historical processes.

[Above is a guest post by Bill Benzon]

Two Disciplines in Search of Love | Replicated Typo said,

September 28, 2013 @ 8:11 am

[…] a guest post I've contributed to Language Log. The disciplines are literary criticism on the one hand, and computational linguistics on the […]

bookmarks for September 26th, 2013 through September 28th, 2013 | Morgan's Log said,

September 28, 2013 @ 4:01 pm

[…] Two Disciplines in Search of Love – This is a guest post by Bill Benzon, in response to earlier posts by Dan Garrette ("Computational linguistics and literary scholarship", 9/12/2013) and David Bamman ("On Interdisciplinary Collaboration and "Latent Personas"", 9/17/2013). – (dh ) […]

Ray Dillinger said,

September 29, 2013 @ 11:42 am

As a computational linguist, I find this topic greatly interesting. The application of computational methods to problems both of linguistic structure and criticism seems to me to be much like the application of power tools to both carpentry and manufacturing.

You can get a lot more done in both cases, but you will be doing slightly different kinds of tasks. There is still a need for craftsmanship but the craftsmen must have more focus on abstraction because the feedback between process and product has become more decoupled.

Accordingly, the methods lend themselves to different approaches to the problems and therefore to solving problems in different ways. What computational methods allow that wasn't possible before is the utilization of larger data sets and more precise descriptions of the methods used. But understanding what language describes is still a hard problem.

There is progress being made, but the application of such methods to ethical and sociological criticism seems inevitably linked to the development of so-called strong artificial intelligence. That is, saying anything important about states of being seems both to require, in practice, a model of consciousness itself and to be a very strong contribution to the creation of one.

I may be a cockeyed optimist about the technical aspects of that great work, but I think that modeling consciousness is more likely to yield to statistical work with Big Data than any method that's been tried before. Indeed, progress is being made today in terms of statistical models of everything from word frequencies and grammatical structure to neurobiology and the accuracy of advertising targeting.

These things are starting to say important things about consciousness and sentience. At some point there will be a synthesis or the development of a body of work that starts to explain these diverse descriptions of aspects of ourselves, and that will allow for a very different formulation of the classic questions of ethical and social criticism.

Bill Benzon said,

September 29, 2013 @ 3:40 pm

I may be a cockeyed optimist about the technical aspects of that great work, but I think that modeling consciousness is more likely to yield to statistical work with Big Data than any method that's been tried before.

Well, sure.

That's part of it, but I don't think it'll get us the whole way there. The Gärdenfors book I'd mentioned (Conceptual Spaces, 2000) is quite interesting. It's mostly an argument for an "intermediate conceptual level" (where "vector calculations, coordinate transformations, as well as other geometrical operations are in focus") between subconceptual processing, which is going to be largely statistical in nature, and symbolic processing. I'm quite sympathetic to that.

Bill Benzon said,

September 30, 2013 @ 9:41 am

The essay seemed a bit incomplete to me, but it's taken me a while to work out an end game. Here's a draft:

Ethical Criticism and Naturalist Criticism (Slight Return)

Let us return to Wayne Booth on ethical criticism. He argues, correctly I believe (The Company We Keep, p. 81), that “theoretical defenses of ethical criticism disappeared precisely because nobody could any longer believe that ethical appraisals referred to any independent reality attributable to texts or readers.” He then goes on to outline four arguments in favor of ethical criticism, the first of which is (p. 82): “Appraisals of narratives can refer to something real in the narratives, not just to the appraiser’s preferences; the widespread claim that “poems” have no power or value in themselves is confused and misleading.”

Of course Booth provides arguments on that point. And recent naturalist criticism backs him up. For example, psychologist Keith Oatley recently synthesized a large literature on the psychology of reading fiction, a substantial part of which has been published since Booth’s book: Such Stuff as Dreams: The Psychology of Fiction (2011). Here’s a representative bit (pp. 160-161):

Further, as I conceive it, much of the “small scale” program of naturalist criticism, that is, the research devoted to the study of individual works and small groups of texts, would be devoted to arguing that point. Thus my own programmatic statement, Literary Morphology: Nine Propositions in a Naturalist Theory of Form, is built around a series of propositions; eight of the nine are about the formal properties of individual texts, and two of those are about how those properties have evolved over long periods of time. The remaining proposition simply states that the meaning of texts is elastic.

Let us return to Booth. After quoting from a Chekhov story (“Home”), he observes (p. 484):

“But how,” Booth asks (p. 484), “should we make those choices?” We take advantage of the fact that fiction is a “relatively cost-free offer of trial runs”; Keith Oatley talks of simulation: literary experience simulates life. Booth observes:

In this view my proposed large-scale investigation of romantic love is an investigation of the way in which centuries’ worth of simulated experience has affected how we have actually changed our ways of life. While I have conceived of that enterprise as a naturalistic investigation into what has actually happened, surely the outcome has implications for the rules we set ourselves as we move forward into the future.

If we are indeed shackled to our genes, then those rules had best be conservative ones. If, however, our biology allows for considerable free play, then those rules will have to have a different character, for they will have to acknowledge a pluralist world in which there are various (and possibly conflicting) ways to live a good life. Again, my bias shows.

Joe said,

September 30, 2013 @ 1:00 pm

"What, I hear someone objecting, aren’t you assuming a lot when you assert computational mechanisms? Yes, I am, but just what I’m assuming, that’s not at all obvious."

Another assumption is the perceived definiteness of the textual "grapheme". Liberman's forays into hip hop show that transcription ain't easy and that transcribers themselves make assumptions about what to include or exclude in the final textual rendering of a song. If an account of courtly love is to be made "naturalistically", one should also take into account how much a written lyric might be an "ethical criticism" of, say, the music performed by troubadours of that time. For example, the paradox of adultery and chivalry may be perceived because the written lyric does not adequately transcribe the semantic richness of a troubadour's performance. Another thing transcription doesn't capture very well is improvisation: the grapheme's definiteness necessarily glosses over differences between performances and treats a single written lyric as definitive of all of them.

The answer may be that the textual grapheme is the only thing we can measure at this point, but I think we should be careful about betting the whole farm on it. The grapheme is just another interpretation. For example, did the troubadours set lyric poetry to music or was it the other way around? We should recognize that we tend to prioritize text above all else because that's typically all we have.

Bill Benzon said,

September 30, 2013 @ 7:22 pm

Yes, texts are what we have. But the standard story of courtly love doesn't rest on troubadour texts alone. No one's betting the farm on them.

That use of "interpretation" seems to me overwrought in the same way that the standard lit crit usage of "reading" is overwrought.

What difference does it make?

Literary critics have been aware of this issue ever since Parry and Lord's work on the oral nature of the Homeric poems.

Bill Benzon said,

October 4, 2013 @ 6:34 am

I've now taken the main post and the conclusion I stuck into that long comment and edited them together into a single document, with some cleaning-up and relatively small additions here and there. I then added a personal postscript.

I turned the final document into a PDF which I've uploaded to my SSRN page: Two Disciplines in Search of Love.