Journalist Falls Flat in Comprehension Test


Or, as Kevin Drum put it, "New Test Shows Severe Shortcomings in Nation's Press Corps". Or maybe the headline should be "Onion Takes Over WSJ Education Beat".

According to Stephanie Banchero, "Students Fall Flat in Vocabulary Test", Wall Street Journal 12/6/2012:

U.S. students knew only about half of what they were expected to on a new vocabulary section of a national exam, in the latest evidence of severe shortcomings in the nation's reading education.

Eighth-graders scored an average of 265 out of 500 in vocabulary on the 2011 National Assessment of Educational Progress, the results of which were made public Thursday. Fourth-graders averaged a score of 218 out of 500.

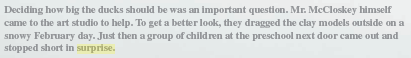

The results showed that nearly half of eighth-graders didn't know that "permeates" means to "spread all the way through," and about the same proportion of fourth-graders didn't know that "puzzled" means confused—words that educators think students in those grades should recognize.

Regular readers may recall my rule of thumb ("Ignorance about ignorance", 10/9/2012):

When you read or hear in the mass media that "Only X% of Americans know Y", don't believe it without checking the references — it's probably false even as a report of the survey statistics.

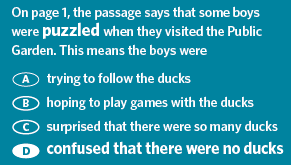

I should have phrased the rule explicitly to cover cases of the form "X% of Americans don't know Y" — but anyhow, let's look at one of Ms. Banchero's examples, as described in "Vocabulary Results From the 2009 and 2011 NAEP Reading Assessments". The evidence that "nearly half of … fourth-graders didn't know that 'puzzled' means confused" comes from the pattern of answers to this question:

The correct answer is D, which 51% of the fourth graders chose. It references the third paragraph of the passage that the students are being asked about:

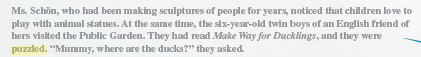

Here's what the NAEP report says about the wrong answers:

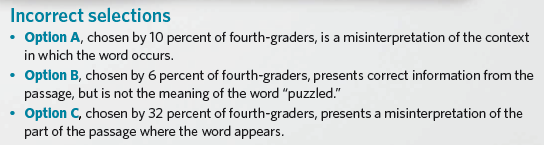

Option C, chosen by 32% of the fourth graders, in fact references the sixth paragraph of the first page of the passage:

It's likely that the students who answered "C" actually did know that "'puzzled' means confused". They just remembered the second passage on the previous page, where children encountered a state of affairs markedly different from what they expected to see, rather than the first one. They also failed to realize that, to meet the test makers' expectations, they needed to cite a passage where the word "puzzled" itself was used, rather than one where its meaning was appropriate. Thus a more plausible interpretation of these results is that at least 51+32 = 83% of fourth graders know what "puzzled" means, and can apply that knowledge in interpreting a fairly long and complex text.
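
The arithmetic behind that reinterpretation is simple enough to spell out. Here is a minimal sketch in Python that uses only the two answer shares reported above (51% for D, 32% for C). The option labels and the function name are illustrative. The shares for the other options aren't broken out here, so they are lumped into one remainder, which also absorbs any rounding or omitted responses:

```python
# Answer shares (percent of fourth graders) for the "puzzled" item, as reported above.
# Only options C and D are given; everything else is treated as a single remainder.
shares = {
    "D (keyed answer: cites the passage that uses 'puzzled')": 51,
    "C (cites a passage where being puzzled fits the situation)": 32,
}


def lower_bound_knows_meaning(shares: dict[str, int]) -> int:
    """Percent whose answer is consistent with knowing what 'puzzled' means."""
    return sum(shares.values())


known = lower_bound_knows_meaning(shares)
remainder = 100 - known  # other options combined, plus rounding/omissions

print(f"Consistent with knowing the word: at least {known}%")
print(f"Everything else combined: {remainder}%")
```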

OK, we've learned that Ms. Banchero swallowed the NAEP report's alarmism willingly and uncritically — that's the normal bias towards "sensationalism, conflict, and laziness". What's a little out of the ordinary here is indicated by this passage at the bottom of the story:

Corrections & Amplifications

The test results showed that nearly half of eighth-graders didn't know that "permeates" means to "spread all the way through," and about the same proportion of fourth-graders didn't know that "puzzled" means confused. An earlier version of this article incorrectly said that nearly half of eighth-graders didn't know the meaning of "puzzled."

So in an article about "severe shortcomings in the nation's reading education", the Wall Street Journal's ace reporter made an elementary mistake in reading comprehension. But wait, it gets better.

Remember the part about how "U.S. students knew only about half of what they were expected to on a new vocabulary section of a national exam", because "Eighth-graders scored an average of 265 out of 500 in vocabulary" and "Fourth-graders averaged a score of 218 out of 500"?

This is not quite as transparently brainless as the complaint about No Child Left Behind testing attributed to Prof. Fred Hess, director of NU's Center for Urban School Policy:

The tests being used are formulated so that 50 percent of the test-takers will fall below the median score — in effect setting school districts up for failure no matter how much preparation students receive, he said.
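
Why the complaint is empty: the median is, by definition, the score that splits the test-takers in half. When everyone improves, the median moves up with them. The sketch below is a minimal Python example with ten invented scores (hypothetical numbers, not NAEP data). It shows that a uniform 30-point gain leaves the share below the median exactly where it was:

```python
import statistics


def share_below_median(scores: list[int]) -> float:
    """Fraction of test-takers scoring strictly below the median."""
    m = statistics.median(scores)
    return sum(s < m for s in scores) / len(scores)


# Hypothetical scores for ten test-takers (invented for illustration).
before = [180, 195, 210, 220, 230, 240, 250, 262, 275, 290]
# Suppose extra preparation raises every single score by 30 points.
after = [s + 30 for s in before]

print(statistics.median(before), share_below_median(before))
print(statistics.median(after), share_below_median(after))
```

"Below the median" describes relative position only, so by itself it says nothing about whether anyone has failed.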

But as a flaw in the practical application of elementary quantitative reasoning, it's close. Let me turn the floor over to Kevin Drum:

I'm not crying for the students. I'm crying for the reporter, who apparently believes that students "knew only about half of what they were expected to" because they scored in the vicinity of 250 out of 500. And since 250 is half of 500, that must mean students only knew half of what they should.

This is wrong on so many levels I don't even know where to start. First, these are scale scores, not percentages of correct answers. Second, they're normed scores. Third, this is the same way all the NAEP tests are done, and they all produce scores in the same vicinity. The current eighth grade math average is 284. The reading average is 265. The history average is 266. Etc. And since the scores are scaled so that ten points roughly equals a grade level, fourth graders scored a little more than 40 points lower by definition.

In other words, these numbers in isolation don't tell us anything at all about whether the vocabulary skills of our children are weak or strong. It's like saying someone who scored 100 out of 200 on an IQ test must be a moron. Unfortunately, the reporter was flatly ignorant of all this, so she simply hauled out standard hysterical template No. 4 and decided that the test results represented "severe shortcomings in the nation's reading education" even though they show no such thing.
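
Drum's arithmetic can be checked directly from the two averages in the WSJ story. The short Python sketch below takes his rule of thumb of roughly ten scale points per grade level at face value; that figure is an approximation, not an exact NAEP constant. For contrast, the sketch also computes the score-divided-by-500 reading that produced "about half":

```python
# 2011 NAEP vocabulary averages as quoted in the WSJ story.
GRADE8_AVG = 265
GRADE4_AVG = 218
SCALE_MAX = 500

# Drum's rule of thumb (approximate): about 10 scale points per grade level.
POINTS_PER_GRADE = 10

gap = GRADE8_AVG - GRADE4_AVG              # observed gap between the two grades
expected_gap = (8 - 4) * POINTS_PER_GRADE  # grades 4 and 8 are four levels apart
grade_levels = gap / POINTS_PER_GRADE      # observed gap in rough grade levels

# The reporter's reading: score / maximum taken as the fraction of words "known".
# For a scale score this ratio has no such meaning; it is computed only for contrast.
naive_fraction = GRADE8_AVG / SCALE_MAX

print(f"Observed gap: {gap} points (~{grade_levels:.1f} grade levels)")
print(f"Gap implied by the rule of thumb: {expected_gap} points")
print(f"Misleading 'fraction known': {naive_fraction:.2f}")
```

The observed 47-point gap is indeed "a little more than 40", as Drum says. The 0.53 figure is precisely the ratio the story misread as a share of words known.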

The facts about what the NAEP numbers really mean are explained at length in "An Overview of Technical Procedures for the NAEP Assessment", easily found from the NAEP's "about" page.

Keith M Ellis said,

December 8, 2012 @ 7:49 pm

If that "puzzled" question was designed to test reading comprehension, it's arguably well-designed. If it's designed to test vocabulary, then it's terrible — surprised and puzzled overlap in many contexts.

With regard to the journalist, in this and similar cases I'm more disappointed with the editors and institution. Individual journalists will always make stupid errors from time to time, and that's part of why they have editors. But when editors don't catch these kinds of egregious errors (and, honestly, it's particularly the editor's job to detect a journalist's ignorance such as this, where the entire thesis of the story rests on a crucial misconception), the fault lies not only with the journalist and editor, but with the institutional structures and procedures that should prevent these failures. This is true in science with plagiarism, fraud, and of course simple error in research; it's true in many contexts. Individuals make mistakes, sometimes very stupid mistakes. That's why we build institutions to correct for this.

It seems to me that American journalism is very badly failing as an institution with regard to its reporting on both politics and science because of what amounts to, ultimately, a combination of a sort of willful ignorance and deliberate misrepresentation. Reporters and their editors are inexcusably ignorant about the subjects of the stories they report. They don't understand basic science or scientific culture. They don't understand the government policies about which they report, because they don't understand economics or other relevant subjects. And in both cases they deliberately misrepresent stories, or at the very least sensationalize them, in ways that mislead readers while satisfying readers' hunger for scandal or outrage or their confirmation bias. Journalism talks a lot about its integrity and its virtuous function as the Fourth Estate, but in my opinion it's mostly a bedtime story they tell themselves so they can sleep at night.

There's no question that the decline of the traditional print media has destroyed revenues and jobs and has made it far more difficult to adhere to high standards. But I just don't think it has ever much adhered to those standards, especially in the context we're discussing here: presenting technical information to the audience in such a way that readers end up actually knowing more, and not less (with "less" being the result of being misinformed), even, or especially, when the matter is of great civic importance.

This particular story is what it is because the reporter was inexcusably ignorant and because its raison d'être was mostly to satisfy readers' desire for a "kids today…" story that confirms their own biases. It was hardly about informing its readers of the current status of children's vocabulary test scores.

Helena Constantine said,

December 8, 2012 @ 8:23 pm

Prof. Hess must come from Lake Wobegon.

Josh Treleaven said,

December 8, 2012 @ 9:36 pm

This is obviously a severe shortcoming in the nation's journalistia.

Ironic and hypocritical, yes, but like the test scores, nothing to pull our collective hair out over.

Still makes for a fun blog post. Apparently us commenters have to be careful too though, because you never know who's watching.

Eugene said,

December 9, 2012 @ 12:41 am

If 4th graders can make sense of those fairly complex passages and handle such puzzling multiple choice questions even 50% of the time, we shouldn't be at all worried about severe shortcomings in the nation's reading education. The kids are alright.

The biggest problem with using testing to evaluate teachers and students and the education system is that very few people understand what tests can measure and what test scores mean.

And, as Keith said, that wasn't a vocabulary question.

Jason said,

December 9, 2012 @ 5:28 am

"If 4th graders can make sense of those fairly complex passages and handle such puzzling multiple choice questions even 50% of the time, we shouldn't be at all worried about severe shortcomings in the nation's reading education. The kids are alright."

I'm pretty sure I would have absolutely no idea how to answer that question were it to be given to me in a standardized test. Now I don't know if I'm a vocabulary genius, but I speak two languages, did honors-level philosophy and can define the difference between "intensionality" and "intentionality". Does that qualify me to have an opinion?

If 50% of 4th graders can answer that question "correctly", that just shows how test-wise our 4th graders are getting, presumably as a result of all the coaching they've been getting in mind-reading the framers of the test. Questions that stupid almost make one wish for the old "plenipotentiary is to diplomat as _____ is to fashion model" questions.

On the bright side, by the time these students graduate high school they will be so familiar with standardized testing that hopefully Ms Banchero's replacement will recognise the words "mean" and "normalization." To not understand that eighth grade students averaged 265 on a 500-point scale because the distribution of the aggregate scores across all grades has been normalized so that its mean is at that point is, to use the technical term, buttfuckingly stupid, and would in a just world immediately dispatch Ms Banchero to a community college for some remedial statistics for journalists course.

Unfortunately, the story did its job, in that it reinforced the prejudices of its audience and earned page hits and click-throughs, which is the only standard of accuracy that journalism is now held to.

Jason said,

December 9, 2012 @ 5:35 am

For Mean read Median in the above post. See, accuracy matters.

mcdruid said,

December 9, 2012 @ 6:10 am

Google Trends shows a sharp uptick for "urbane" the day the story broke.

Matt McIrvin said,

December 9, 2012 @ 8:42 am

Is Hess's complaint "transparently brainless", or is he accusing the people making use of NCLB test results of making the mistake that you're accusing him of making? (The quote isn't in the WSJ article, so I'm not sure what the source is.)

Obviously 50% of students will always be below the median, and 50% of schools will be below-median schools, but if being below the median is functionally interpreted as failure, then that's the problem.

[(myl) If you had followed the link associated with the quote (to "Attributional Abduction", 1/14/2004), you would have learned that

(1) Prof. Hess was quoted in the Daily Northwestern on 1/09/2004;

(2) The article attributed to him the view that NCLB tests "are formulated so that 50 percent of the test-takers will fall below the median score", which surely does indicate a serious problem in conception or communication;

(3) I had (and have) no idea whether the problem should be attributed to Prof. Hess, or to the reporter, or to an editor, or to a hit squad from The Onion — that was the point of the cited post.

The point of this reply is that you should probably consider following links (which function as footnotes, giving the source of an observation), before commenting on the observation that the link supports.]

Matt McIrvin said,

December 9, 2012 @ 8:57 am

"If 50% of 4th graders can answer that question "correctly" that just shows how test-wise our 4th graders are getting, presumably as a result of all the coaching they've been getting in mind-reading the framers of the test."

Not only that, it's possible to get wise to tests that are written differently from the test you're actually taking. I know that when I was in school, I took plenty of multiple-choice tests that were sloppily enough written that the correct answer would have been similar to the "incorrect" answers there, in which the difference between right and wrong hinges on subtle differences in contextual reference.

Actually, true-false quizzes were the worst. I learned to dread true-false quizzes, because they'd invariably be mind-reading exercises in which the problem was distinguishing between a mistake that you were supposed to notice and mark "false", and a mistake that crept in because the test author was just being sloppy.

CherylT said,

December 9, 2012 @ 9:28 am

The completely unlamented former UK Adult literacy test (for a level roughly similar to mid-secondary education) required similar mind-reading on the part of those taking the test. Not 'What is the right answer?' or 'Which is the best of two reasonable answers?' but 'What answer does the person who wrote this test expect?'. More advanced ESOL students were expected to take the same test as native speakers, with no allowance for different first language scripts or different cultural backgrounds. Teaching even well-educated adults how to take the test could take nearly as much time and effort as teaching them the language skills that were supposedly being tested.

Andrew (not the same one) said,

December 9, 2012 @ 11:38 am

Does 'puzzled' really mean the same as 'confused'? I would say that 'I wonder where the ducks have gone,' was puzzled, while 'Oh gosh, the ducks. What? Where? I don't understand,' was confused. (Possibly the latter is also puzzled, but not vice versa.)

Matt McIrvin said,

December 9, 2012 @ 11:58 am

These kinds of stories make me wonder about the popular statistics that say that some frighteningly huge (but varying) percentage of Americans are "functionally illiterate." Is it really that they have too-poor reading skills to fill out a form, or have they just scored low on some idiotically constructed gotcha test?

[(myl) There are surely genuine problems in many areas of education in many parts of the world, and genuine problems in adults' levels of knowledge and skills as well. But the stories that are told to illustrate these points are often exaggerated, misleading, or flat wrong — see "Freedom of speech: More famous than Bart Simpson", or "A reading comprehension test", for a couple of notable examples.

And overall, I'm less worried about how fourth-graders perform on the NAEP's tests than I am about whether the WSJ's reporters can read and understand and summarize the NAEP's reports, and whether the WSJ's readers can put the WSJ's summary into socio-political context in a half-way sensible fashion.]

Jeff Carney said,

December 9, 2012 @ 7:22 pm

Exactly. When influential media like WSJ get people riled up, including legislators and curriculum designers, we're apt to get sweeping changes that address the wrong problems. Worse, we may get "solutions" that have little empirical evidence demonstrating that they have a hope in hell of working.

Mark F. said,

December 9, 2012 @ 11:02 pm

I think the way to describe the students' performance on the ducks question would be to say that almost half of them failed to figure out that they had to find an exact match in the text for the italicized word in order to answer the question.

richardelguru said,

December 10, 2012 @ 7:37 am

Jason "plenipotentiary is to diplomat as _____ is to fashion model"

Anorexic?

:-)

Dan M. said,

December 10, 2012 @ 12:42 pm

Am I the only one who thought from the title of this post that it was going to discuss the misuse of "fall flat"?

In my idiolect at least, "fall flat" means "to fail to elicit the desired reaction from an audience". So a report can fall flat by not impacting policy or not conveying the result wanted by its sponsor, and students can fall flat with a musical or comedy sketch, but it's impossible for students to fall flat in a test.

Is there another usage of "fall flat" that I've failed to learn?

chris said,

December 10, 2012 @ 4:41 pm

some remedial statistics for journalists course

Unfortunately, the mention of such a thing presupposes (I suspect incorrectly) that there is some standard level of comprehension of statistics that is expected of journalists, such that failing to achieve it warrants a remedy.

Ken Brown said,

December 10, 2012 @ 5:50 pm

And since when did the range of use of "permeates" imply all the way through?

Dan M. said,

December 10, 2012 @ 9:11 pm

To be fair, in the context where "permeates" is used, it would mean all the way through, if indeed the passage means anything at all. But that context is quite odd. From the PDF linked in the OP (emphasis mine):

Obviously, that's a sentence fragment, but even recognizing that good writing sometimes uses such fragments, I cannot make this grammatical for myself.

If the sentence were "Permeated it with mint syrup.", then I could see it as having elided the subject, but as it stands it sounds like an adjective phrase, and I see no grammatical way of telling what it modifies; "glass dish" is nearest, but obviously senseless.

If the phrase were connected to the previous sentence by a common, it would then seem to modify the "it" that was served. That fits the apparent meaning, but is, at least to me, simply not one of the possible things expressed by the punctuation used.

It's hardly a wonder if students were having trouble telling what the author meant by the sentence fragment and failed over to choosing the unconnected true statement in (D).

Joe said,

December 11, 2012 @ 3:06 am

@Dan M.

I think there is a typo somewhere in your question. The one that I have states:

"On page 1, the author says that mint syrup PERMEATED the shaved ice. This means that the mint syrup . . ."

As with the case of "puzzled," you really need to know the questions and what students responded to be able to interpret the result. 24 percent of students selected option D, which states that the mint syrup "made the shaved ice taste better." I can certainly see why students would have selected this answer. The question doesn't say, "the word 'permeate' means in this context . . ." Instead, it says "this means . . ." (it's obvious to someone used to answering such questions that "this" refers to the boldfaced word, but the question is badly worded). One could certainly argue that students who said that "this means that the mint syrup made the shaved ice taste better" had an understanding of what "permeate" means: they just didn't correctly identify what the question was asking. So it looks to me as though you could arguably say that 75% of students had at least some understanding of what "permeates" means.

http://nces.ed.gov/nationsreportcard/pdf/main2011/2013452.pdf

Dan M. said,

December 11, 2012 @ 4:36 am

I see that in accordance with Skitt's Law, I need to make the substitution s/common/comma/

Joe said,

December 11, 2012 @ 6:48 am

@Dan M.

Sorry, I misread what you had posted. I was referring to the question that was asked, but now I see you were referring to the text they read from. So I thought the typo was an incorrect copy of the question that students were asked. I wasn't referring to your own post. Sorry.

undergrad said,

December 12, 2012 @ 12:23 pm

Thanks for the link, Joe.

Maybe I've failed the comprehension test provided by the color-coded charts on page eight, but if I haven't… Does anyone think it's an interesting coincidence that the Chair and Vice Chair of the National Assessment Governing Board are from two of the three states that scored higher than the national average for all grade levels tested (MA & NH)?

A reporting fail on failing reports. | greg walklin said,

December 19, 2012 @ 8:59 am

[…] a little late to this link, but the always-excellent Language Log has a great post eviscerating a recent Wall Street Journal […]

On Bloomberg and the Financial Times, AP and Homophobia, 2012 in Review and Mannying « Project Chiron (Beta) said,

December 21, 2012 @ 6:29 am

[…] Economist in a piece on “shaken but not stirred.” In another Language Log post, Mark Liberman questioned the accuracy of Stephanie Banchero’s interpretation of the results of the 2011 National Assessment of Educational […]