Literate programming and reproducible research

From the Scientific and Technical Achievements section of last week's Academy Awards:

To Matt Pharr, Greg Humphreys and Pat Hanrahan for their formalization and reference implementation of the concepts behind physically based rendering, as shared in their book Physically Based Rendering. Physically based rendering has transformed computer graphics lighting by more accurately simulating materials and lights, allowing digital artists to focus on cinematography rather than the intricacies of rendering. First published in 2004, Physically Based Rendering is both a textbook and a complete source-code implementation that has provided a widely adopted practical roadmap for most physically based shading and lighting systems used in film production.

I believe that this is the first time that an Academy Award has been given to a book. And this (ten-year-old) book was written in a way that deserves to be better known and more widely imitated.

The publisher's blurb for Physically Based Rendering: From Theory to Implementation:

From movies to video games, computer-rendered images are pervasive today. Physically Based Rendering introduces the concepts and theory of photorealistic rendering hand in hand with the source code for a sophisticated renderer. By coupling the discussion of rendering algorithms with their implementations, Matt Pharr and Greg Humphreys are able to reveal many of the details and subtleties of these algorithms. But this book goes further; it also describes the design strategies involved with building real systems—there is much more to writing a good renderer than stringing together a set of fast algorithms. For example, techniques for high-quality antialiasing must be considered from the start, as they have implications throughout the system. The rendering system described in this book is itself highly readable, written in a style called literate programming that mixes text describing the system with the code that implements it. Literate programming gives a gentle introduction to working with programs of this size. This lucid pairing of text and code offers the most complete and in-depth book available for understanding, designing, and building physically realistic rendering systems.

As the Wikipedia article on Literate Programming explains,

Literate programming is an approach to programming introduced by Donald Knuth in which a program is given as an explanation of the program logic in a natural language, such as English, interspersed with snippets of macros and traditional source code, from which a compilable source code can be generated.
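To make the "from which a compilable source code can be generated" step concrete, here is a minimal sketch of that extraction (usually called "tangling"). It assumes a simplified noweb-like notation in which `<<name>>=` opens a named code chunk, a line consisting of `@` closes it, and `<<name>>` on a line of its own refers to another chunk. The function names `parse_chunks` and `tangle` and the sample document are invented for illustration; this is not Knuth's WEB or the real noweb tool.

```python
import re

CHUNK_DEF = re.compile(r"^<<(.+?)>>=\s*$")      # a line that opens a named chunk
CHUNK_REF = re.compile(r"^(\s*)<<(.+?)>>\s*$")  # a line that refers to another chunk


def parse_chunks(text):
    """Collect the code chunks of a literate document, keyed by chunk name."""
    chunks = {}
    current = None  # name of the chunk being read, or None while in prose
    for line in text.splitlines():
        definition = CHUNK_DEF.match(line)
        if definition:
            current = definition.group(1)
            chunks.setdefault(current, [])
        elif line == "@" or line.startswith("@ "):
            current = None  # back to prose
        elif current is not None:
            chunks[current].append(line)
    return chunks


def tangle(chunks, name, indent=""):
    """Expand chunk `name` recursively into plain source lines."""
    out = []
    for line in chunks[name]:
        ref = CHUNK_REF.match(line)
        if ref:
            # Carry the indentation of the reference into the expanded chunk.
            out.extend(tangle(chunks, ref.group(2), indent + ref.group(1)))
        else:
            out.append(indent + line)
    return out


DOCUMENT = """\
The program greets the reader. Its overall shape is:

<<main>>=
def main():
    <<print the greeting>>
@

The greeting itself is a single call to print.

<<print the greeting>>=
print("hello, reader")
@
"""

source = "\n".join(tangle(parse_chunks(DOCUMENT), "main"))
print(source)
```

The prose is discarded and the chunks are stitched together in the order the compiler needs, which need not be the order in which the explanation presents them.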

Donald Knuth's own explanation, from the 1984 article where he first laid out the idea:

The past ten years have witnessed substantial improvements in programming methodology. This advance, carried out under the banner of “structured programming,” has led to programs that are more reliable and easier to comprehend; yet the results are not entirely satisfactory. My purpose in the present paper is to propose another motto that may be appropriate for the next decade, as we attempt to make further progress in the state of the art. I believe that the time is ripe for significantly better documentation of programs, and that we can best achieve this by considering programs to be works of literature. Hence, my title: “Literate Programming.”

Let us change our traditional attitude to the construction of programs: Instead of imagining that our main task is to instruct a computer what to do, let us concentrate rather on explaining to human beings what we want a computer to do.

Although 1984 was 30 years ago, and Knuth's idea is obviously a good one, its impact has been surprisingly limited. The proportion of the programs written last year that were implemented in a "literate" mode is, to several significant digits, zero.

The best-known and most widely used systems for Literate Programming today are Sweave and Knitr, both of which support reproducible research in R. The application to reproducible research shifts the goal a bit: instead of "explaining to human beings what we want a computer to do", the aim is to "explain to human beings what we made the computer do in running the analyses or simulations under discussion, so as to allow them to reproduce and extend our work".

In other words, a Knitr document is typically not a program at all — though it can be executed. Rather, it's a scientific or technical paper, which happens also to include the code and data needed to reproduce the claimed results, in a way that makes the relationship to the paper's numbers and tables and graphs completely transparent. The advantages to readers, to authors, and to the field at large are obvious.

As I interpret the publisher's description, Physically Based Rendering is actually a third kind of thing: a tutorial or textbook that is also transparently executable.

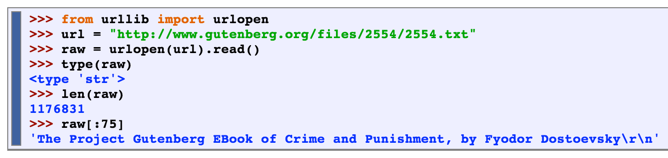

It's common for tutorial texts to include code examples — see e.g. the NLTK Book for some excellent examples. These are typically snapshots of interactive sessions, including what the computer prints out as well as what the user types in:

And there is often source code available online, of course. Is there a significant advantage to full "literate programming" in such tutorial explanations? Maybe not; which might help explain why the idea hasn't had a greater impact.

But still, every time I pick up a project that I've put aside for a few years (or months, or weeks), I find myself wishing that I'd been using a "literate programming" mode from the beginning, rather than just a set of notes about what I did and how I did it.

bks said,

February 22, 2014 @ 11:08 am

Computer programs are not written in a literate manner because the immediate goal is to make the compiler understand the program, not a human reader, and because the frequent cutting and pasting of computer program text would lead the final product to read like a William S. Burroughs novel. Also many programmers are not particularly literate to begin with. At my last job I had difficulty convincing HR (when did we stop calling it "personnel"?) that English fluency was important for programming positions.

–bks

Eric P Smith said,

February 22, 2014 @ 11:13 am

To several significant digits, zero? Think about it!

[(myl) Let's suppose that the proportion of programs written in this paradigm is one in ten million. I get e.g. in R

> round(1/10000000,digits=5)

[1] 0

What's your result? ]

D.O. said,

February 22, 2014 @ 12:59 pm

But what about executable blogposts?

Ben said,

February 22, 2014 @ 1:31 pm

I did a project using literate Haskell, and it was part of a larger paper on some ideas. I think literate programming is still by far the best way to use code to explain the nuances of certain algorithms.

When I'm working professionally, we're intentionally trying to hide the complexity of what we're doing. We want to make the smallest set of promises that deliver everything our users need, because if they take advantage of some clever trick we used, we're stuck supporting that clever trick, even if we later decide to do it a different way.

And that model of interface hiding implementation is, by far, the dominant model in software engineering, simply because systems are so complex you affirmatively don't want to expose the details of the system you're designing.

"Also many programmers are not particularly literate to begin with."

I'd like to see some numbers to justify that claim.

Noah Diewald said,

February 22, 2014 @ 1:42 pm

@D.O. There is a tool for that:

http://hackage.haskell.org/package/BlogLiterately-0.2

Craig said,

February 22, 2014 @ 1:45 pm

bks makes a good point. Asking semi-literate programmers to write literate programs is obviously rather problematic. In my nearly 30 years (at this point) as a professional software engineer, I have only occasionally come across programmers who actually comment their code in a really helpful way. Many hardly comment their work at all, and some who write lots of comments might as well not have bothered (my all-time favorite example being the guy who commented the statement "x = x+1;" with the words "Increment x". His code had quite possibly the highest comment to code ratio I've ever seen, but 99% of those comments were completely worthless).

Some of the claimed benefits of Knuth's system have since been achieved in other ways. Software such as Doxygen can produce nicely-formatted documentation in a variety of formats (HTML, PDF, etc.) from source code comments if the programmers follow Doxygen's conventions. Of course, for such documentation to really be useful, the comments have to be written in a way that is actually helpful, and they have to be maintained as the software evolves, which they often are not. Knuth's system is not a solution to these problems, but rather something that would be beneficial only after these problems have been solved.

I wish university CS programs had an actual class on how to document code. The problem, though, is that you'd have to get someone who really understood how to document code properly to teach it, and it doesn't seem there are very many of them around.

Ben said,

February 22, 2014 @ 2:15 pm

Incidentally, part of the reason literate programming never took off may be because of Knuth's execution. This is not to say the basic idea is bad, but what people may have seen is that literate programs weren't particularly readable.

Here is an example of a literate version of "wc":

http://www.cs.tufts.edu/~nr/noweb/examples/wc.html

Here is the source of a version with a few sparse comments:

https://www.gnu.org/software/cflow/manual/html_node/Source-of-wc-command.html

That's a pretty straightforward command, and the literate version comes across to me as a mess. Maybe the literate version makes sense if you can't read C, but if that's the case, why not simply read the man page?

Ben said,

February 22, 2014 @ 2:17 pm

"His code had quite possibly the highest comment to code ratio I've ever seen, but 99% of those comments were completely worthless"

That's what code review is for. Generally, the guys who have been doing it for 30 years should be teaching the new guys, instead of just spouting off about them behind their backs.

bks said,

February 22, 2014 @ 2:50 pm

The very literate Stan Kelly-Bootle wrote a very funny column in _Unix Review_ about an AI computer that ignored the code and compiled the comments, in which case Craig's example would be the height of literacy.

–bks

Craig said,

February 22, 2014 @ 3:13 pm

"That's what code review is for. Generally, the guys who have been doing it for 30 years should be teaching the new guys, instead of just spouting off about them behind their backs."

Well, there you go making assumptions about a situation you know nothing about.

This was several years ago, and neither of us work for that company anymore. Formal code reviews were not part of normal practice there, and the programmer in question was not a "new guy" in any sense. At that point, he probably had at least ten years of professional experience since earning his Master's. He had also been at the company much longer than I had. He wasn't a bad guy, and he got the job done, but elegance was not his strong point.

I'm also not sure how any sensible person could infer, from what little I said above, that I never said anything to him about his comments. In fact, I did. He grinned and shrugged it off, just as he did similar remarks from other colleagues.

I don't want to repeat your error by inferring too much about you based on one comment, but your reference to "spouting off about them behind their backs" is downright childish and makes me wonder what emotional sore spot of yours I've inadvertently stepped on. I think that drawing on real-world experience is a good way to illustrate one's arguments; do you disagree? It's not as if I gave the guy's name or any information that could be used to identify him, the company we worked at, or even the industry or country we worked in. I'm not trying to embarrass him or damage his reputation. So what exactly is the problem?

Rubrick said,

February 22, 2014 @ 3:14 pm

I think a key reason most programming is not done in a more literate, comment-rich style is simply that for most programmers, writing code is fun, but documenting it is a drudge. It's very difficult to force yourself to spend a lot of your time performing a task you don't enjoy, even if you know that everyone (including yourself) will benefit in the long run. Mea culpa.

Jeroen Mostert said,

February 22, 2014 @ 4:17 pm

I very rarely find myself adding explanatory comments. For those rare times that I do, I can definitely see the advantage to a literate approach where code and comments share a close structural relationship.

The thing is this: most code I write (and read) in a professional capacity is boring. And I don't mean boring in the sense that it's page after page of funny squigglies that most humans would find tedious to drudge through — I mean boring in the sense that most of the time, you don't want to see what the code is doing because it's obvious from context. This is the good kind of boring, incidentally. Whatever there is to document is not on the code level, but in the explanation of why, on the highest level, we are doing what we are doing, which is independent of my particular implementation. Interspersing this with the code is possible, but doesn't seem to have any particular advantage.

Things look quite different when you've got a tricky bit of mathematics to work into the code, or the code itself cannot be made obvious because of performance concerns, workarounds for compiler bugs, legacy cruft people don't dare touch and similar artifacts of complexity — in other words, when the plain code isn't all there is to the program. Being able to integrate the tricky code and your human-readable explanation for it increases the odds of both staying relevant. Even so, code and natural language have different goals and different rhythms and integrating them takes possibly even more skill than writing them separately. In this sense, to paraphrase Chesterton, literate programming hasn't been tried and found wanting, rather it hasn't been tried (Knuth's initial efforts notwithstanding). Educating people (including, potentially, yourself) to understand what you're doing is, unsurprisingly, harder than just doing it.

All this is quite different from actual documentation, as in, the bits of text people need to use what you've written without having to read it. What's needed here depends on your scenario (maybe it's a user's manual, maybe it's an API), but if you don't write it, your code is are useless at best and dangerous at worst. Programmers don't like doing this either, but that doesn't have much to do with literate programming. It's a shame, because clear writing promotes clear thinking, and what could possibly be of more use to a programmer than that?

Jeroen Mostert said,

February 22, 2014 @ 4:25 pm

"…your code is are useless at best…" Omit the "are", obviously. There's probably more things wrong because I used the phrase "clear writing", and the gods of irony just don't miss opportunities like that.

Julian said,

February 22, 2014 @ 8:01 pm

I understand the idea of Literate Programming took a heavy hit in a famous 1986 article in the Communications of the ACM.

Knuth was given a simple task (find the most common k words in a text file) and wrote a beautifully literate piece of code, with a complicated data structure that took over 6 double-column pages to describe.

It was then followed by a review of the code by Doug McIlroy, who produced a simple six line shell-script with a 12 line description.

Fair or not, it put the advantages of Literate Programming into doubt.

Jonathan Badger said,

February 22, 2014 @ 11:08 pm

@Julian

Obviously a shell script is at a much higher level of abstraction from the algorithm though. It takes things like "sort" as a given, not something that needs to be implemented and explained. But then again, that is kind of the reality of modern programming — the problems that need to be solved are so complex that working "from scratch" just isn't feasible anymore.
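As a rough illustration of the level-of-abstraction point, here is a sketch of the same task (the k most common words in a text) written against a standard library that, like the shell's "sort", is taken as a given. This is neither Knuth's program nor McIlroy's script; the function name `top_words` and the sample text are made up, and a "word" is assumed to be a run of ASCII letters.

```python
import re
from collections import Counter


def top_words(text, k):
    """Return the k most frequent words in `text` as (word, count) pairs."""
    # Assumption: a word is a run of ASCII letters, compared case-insensitively.
    words = re.findall(r"[a-z]+", text.lower())
    return Counter(words).most_common(k)


sample = "the cat and the dog and the bird"
print(top_words(sample, 2))  # [('the', 3), ('and', 2)]
```

Almost nothing here needs explaining, because the counting and sorting are delegated to `Counter`. Whether that counts against literate programming or merely moves the interesting exposition into the library is the question the two comments above disagree about.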

AntC said,

February 23, 2014 @ 3:09 am

@myl The proportion of the programs written last year that were implemented in a "literate" mode is, to several significant digits, zero.

Thank you Mark, and of course thank you to the Academy, for drawing attention to literate programming. (No, this isn't another Oscar acceptance speech!)

But I have to question your facts in this case. Or at least your sample basis. Most of the programs I see on blog posts are in literate style. Indeed they encourage you to load the blog into your compiler, as several posts here have mentioned. Perhaps this is limited to the Haskell and Higher-Order Languages community. Many compilers recognise literate style as a syntactic variant of the source code: unmarked lines are comment; code lines need special introduction.
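A small sketch of the convention described here, in which unmarked lines are commentary and code lines carry a special introduction. It imitates the "> " marker of Haskell's Bird-style literate files, but the embedded code is Python so that the example can run on its own; the function name `bird_code` and the sample post are hypothetical, and this is not how a Haskell compiler is actually implemented.

```python
def bird_code(text):
    """Keep only the lines marked with a leading '> ', with the marker removed."""
    return "\n".join(
        line[2:] for line in text.splitlines() if line.startswith("> ")
    )


POST = """\
Doubling a number is the simplest example I can think of.

> def double(n):
>     return 2 * n

Everything without a leading marker, like this sentence, is commentary.
"""

namespace = {}
exec(bird_code(POST), namespace)  # "load the blog post into the compiler"
print(namespace["double"](21))    # 42
```

The inversion is the point: in ordinary source files comments need a marker, while in this style the code does.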

Simon Wright said,

February 23, 2014 @ 7:31 am

@myl: “significant digits” is the number of digits that contribute to the significance of the result; so Avogadro’s constant (6.02e+23) and Planck’s constant (6.63e-34) are both written here with 3 significant digits, even though the first is huge and the second tiny.

Simon Wright said,

February 23, 2014 @ 7:35 am

I haven’t made many attempts at LP, but I’ve found it particularly useful when interfacing between different programming languages; for example, a sample program involving HTML, JavaScript and Ada at http://embed-web-srvr.sourceforge.net/ews.pdf .

John Roth said,

February 23, 2014 @ 7:54 am

Literate programming has a niche: write once, read many programs which are maintained by a single person and which seldom have many major improvements.

This simply doesn't describe most projects that I've been associated with in a lifetime (I'm now retired) of professional software development. It's not a surprise that Literate Programming is the product of a university professor, whose job is exposition to students, rather than the tens of thousands of working software developers who have to work with code on a daily basis.

The core fact of documentation is that the structures needed for a developer to work with a program are in the programmer's head; the purpose of documentation is to get them there efficiently and accurately. Once there, the documentation is of no further use until another developer needs it.

The standard documentation strategy in industry today is obvious code with well chosen names. There are good reasons for this: transparently obvious code has fewer defects, and it is never out of date.

That approach can't carry the entire load, but it reduces the need for ancillary documentation significantly. A great deal of the remainder is carried by professional-level development environments, which are designed for working programmers by working programmers to solve their every-day problems.

Eric P Smith said,

February 23, 2014 @ 9:44 am

@myl The function 'Round' rounds to 5 decimal digits, and returns 0. By contrast, 5 significant digits means 5 digits after the leftmost non-zero digit. The decimal expansion of the number zero has no non-zero digits, and therefore nowhere to start counting significant digits from.

Apologies if my first comment was trite and unnecessary, but that was the thinking behind it. As a mathematician I can get a bit pedantic about such things!

Eric P Smith said,

February 23, 2014 @ 9:45 am

Sorry: 5 digits starting from (and including) the leftmost non-zero digit.
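The distinction being drawn can be checked directly. The sketch below uses Python rather than R: `round` keeps a fixed number of decimal places, while the `g` format keeps a fixed number of significant digits. The variable names are invented for the example.

```python
p = 1 / 10_000_000  # the hypothetical proportion from the exchange above

# Five decimal places: everything beyond the fifth place is rounded away.
five_decimal_places = round(p, 5)

# Five significant digits: counting starts at the leftmost non-zero digit.
five_significant = float(f"{p:.5g}")

print(five_decimal_places)       # 0.0
print(five_significant)          # 1e-07

# Three significant digits say nothing about magnitude:
print(f"{6.02214076e23:.3g}")    # 6.02e+23
print(f"{6.62607015e-34:.3g}")   # 6.63e-34
```

So one in ten million is zero to five decimal places, but to any number of significant digits it is still 1e-07.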

KWillets said,

February 23, 2014 @ 9:54 am

I don't believe that linear text is suitable for programming documentation, for a number of reasons:

1. Comment drift, where the text becomes out-of-sync with the code.

2. Over-verbosity– I often need to see an algorithm shrunk down to a page or less, not developed over pages and pages of description that I either already know or need to think about separately before comparing to the code.

3. Contexts vary widely. The person reading the code may have absolutely no interest in the topics exposed in the comments. For instance porting code to a new platform may involve looking at low-level operations, but not high-level design or even correctness.

This isn't to say code shouldn't be augmented with other text, but in practice I've found that the hypertext model of having many links to discussions, other implementations, bug reports, etc. is more relevant than a single comment narrative, and often these links can be discovered most efficiently by searching, cutting and pasting error messages into search engines, etc., so even commenting becomes superfluous.

There's a joke about "how did people program before Stack Exchange" (a question-and-answer site for technical questions), and I once suggested that there be a programming language that automatically enters its error messages into web searches, but it's a valid model for documentation and discussion that avoids many of the problems of inline commenting.

davep said,

February 23, 2014 @ 11:59 am

Craig said: "Well, there you go making assumptions about a situation you know nothing about."

The fact is that no one (who isn't a rank new employee) should be writing those sorts of comments in a production environment. The fact that you saw such code (from an employee with some experience) means somebody (not necessarily you!) wasn't doing their job (reviewing code).

davep said,

February 23, 2014 @ 12:14 pm

The problem with "literate programming" is that it doesn't really help programmers (who are experienced in the language being used).

"Literate programming" might be useful for conveying information about an algorithm publicly (for an audience unfamiliar with the language being used) but it's a waste for professional programming.

One can write code that is hard or easy to follow. I'd much rather have programmers put more work into easier-to-follow code (with good variable names) than into writing comments. Comments, used "sparingly", still might be helpful (but, ideally, there should not be a lot of them).

(Some programmers might find adding comments easier than writing clear, easy-to-follow code but comments are less valuable.)

Programmers have to understand the actual code they have to change/maintain (whatever comments might say the code is doing). The purpose of comments is to assist with that task (but not get in the way of that task). Since comments aren't tested, they might not actually be correct (which means they are a hindrance).

James P said,

February 23, 2014 @ 4:06 pm

Have you come across the IPython Notebook? It's similar in concept to sweave or knitr, but for Python.

Here's an example Notebook that mingles executable code (which can be run in-place on the page, if you download it and load it in the Notebook on your system) with explanations of what's happening

http://nbviewer.ipython.org/github/ptwobrussell/Mining-the-Social-Web/blob/master/ipython_notebooks/Chapter1.ipynb

Piyush said,

February 23, 2014 @ 4:29 pm

To add to Sweave and knitr, I believe IPython (via its "notebooks" feature) provides a nice system for literate programming for reproducible research.

Jonathan D said,

February 23, 2014 @ 5:26 pm

"As I interpret the publisher's description, Physically Based Rendering is actually a third kind of thing: a tutorial or textbook that is also transparently executable."

While that is quite believable, I don't think the publisher's description even hints at that interpretation.

Chris C. said,

February 23, 2014 @ 5:43 pm

I agree with John Roth. I can furthermore see a literate program easily becoming a maintenance nightmare. It would be very easy for the embedded code to become decoupled from the natural language narrative surrounding it, and it will happen the first time a change is under any kind of schedule pressure to just get it working and released. A maintainer who later tries to rely on the narrative to tell him what's going on in the code will be worse off than if the narrative wasn't there at all.

Robert Forkel said,

February 24, 2014 @ 4:38 am

+1 for IPython notebooks. I think as scientific computing platforms get more feature rich and correspondingly code using them for analyses gets shorter, systems like IPython notebook actually can become a replacement for scientific papers.

Here's an example (tutorial) showing how to work with a language catalog (http://glottolog.org)

http://nbviewer.ipython.org/gist/xflr6/9050337/glottolog.ipynb

and this website lists many notebooks used for lectures as well as a couple of papers reformatted as notebooks:

http://jrjohansson.github.io/

In the spirit of reproducible research, linked from the above site is also an IPython plugin to include version information of all relevant software packages in a notebook.

Jeremy said,

February 24, 2014 @ 6:53 am

This is really cool. Greg Humphreys chaired my Ph.D. committee, but I haven't seen him in a few years and wasn't aware of this.

Dennis Paul Himes said,

February 24, 2014 @ 10:49 am

I'll occasionally put jokes into my comments, for the benefit of whatever future programmer is tasked with maintaining it. I've also been known to rant a bit about whoever wrote this API I have to deal with. I've seen others' rants as well. Comments are a way for programmers to communicate with fellow programmers, possibly years in the future, and not only about what the code is currently doing.

Joe said,

February 24, 2014 @ 1:12 pm

If programming is literate, then could we read code and discuss it like a book club? Here's gigamonkeys' take on literate programming: http://www.gigamonkeys.com/code-reading/

Ken Brown said,

February 24, 2014 @ 7:40 pm

I write extensive comments describing in natural English what each section of code is for, and sometimes why it was implemented that way. The reason I do that is because the most likely person to have to fix my code in a hurry is me.

Dennis Paul Himes said,

February 25, 2014 @ 10:22 am

Ken Brown wrote: "I write extensive comments … why it was implemented that way."

Some of the most useful comments I write are the ones which explain why I didn't implement something in the way which seems obvious at first glance.

Mark Dowson said,

February 25, 2014 @ 6:03 pm

Sorry, I submitted an incomplete comment above. Here is the full version.

In the real world, programs are read much more often than they are written, so a program needs to be clear – not just to the author and his team, but to the poor sod who, several years later, needs to fix, modify, extend, or re-use it.

There are a lot of ways to enhance the clarity and readability of code. For example, use of meaningful identifier names instead of cryptic abbreviations. To pick an example of this from some current work, "ProgramRequirementName" is much better than "pgmrqnm".

Use of English language comments (commentary) to enhance clarity and readability is a contentious issue. In a book on programming style which I edited (Ada Quality and Style, Van Nostrand Reinhold 1989), we took a clear position:

"The biggest problem with comments in practice is that people often fail to update them when the associated source text is changed, thereby making the commentary misleading"

"… commentary should be minimized; restricted to comments that emphasize the structure of code and draw attention to necessary violations of [guidelines and standards]"

" – Make comments unnecessary by trying to make the code say everything

– When in doubt, leave out the comment

– Do not use commentary to replace or replicate information that should be provided in separate documentation such as design documents

– Where a comment is required, make it succinct, concise, and grammatically correct"

In this context, it's worth retailing a remark by Edsger Dijkstra, one of the "fathers" of computer science:

"If, god forbid, I was in the business of selecting computer programmers, I would choose them for their excellent command of their native language"

James Wimberley said,

February 26, 2014 @ 8:37 pm

Idle question: do programmers ever start out by writing down in natural language the steps of the program, and then translate these intentions step by step into the code that the computer needs?

If they do, the natural-language parts could be seen as a design drawing. They are not necessarily useful to later readers.

Mark Dowson said,

February 26, 2014 @ 9:52 pm

@James Kimberly

This is quite a complicated issue. Some programming languages explicitly support writing a high-level description of the steps involved in a chunk of code (in Ada, for example, this is called a "package specification") before writing the detailed code which will implement (provide the nitty-gritty details of) that description. In these cases, the compiler (which turns the program into something that the computer operating system/hardware can use) can check – at least to some extent – whether the detailed code is consistent with the high-level description.

In cases where the language doesn't support that, programmers may (but don't always) first write "pseudo-code" in something much closer to natural language. This similarly describes the steps that the code they write will implement. The pseudo-code may then get translated into English language explanatory comments interspersed with the actual code. I do this, but I'm very far from being a professional programmer, so will use any help I can get. I am not sure how common this is amongst professional programmers (I can ask), but my impression is that it is reserved for particularly complicated chunks of program.

Mark Dowson said,

February 26, 2014 @ 9:58 pm

Sorry – James Wimberley, not "Kimberly"

And, yes, pseudo-code descriptions are often useful to later readers, but they often end up in design documentation rather than as comments interspersed with the code.

Garrett Wollman said,

February 26, 2014 @ 11:02 pm

@James Wimberley: There are many popular development methodologies these days, and of course many programs are developed without the help of any particular methodology. One with which I am passingly familiar is test-driven development (TDD), in which the program's specifications are written first, in the form of executable tests, and then the program itself is built so that it passes the test. When correctness bugs are discovered, tests are written to ensure that the problem is actually fixed, and remains fixed. If the specification changes, the tests are altered to check for the new behavior, and then the code is modified until it passes the new tests.
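For readers who have not seen the test-first workflow, here is a minimal sketch using Python's standard `unittest` module. The function `slugify` and its expected behaviour are invented for the example; in the workflow described above the two tests would be written first, with the second added after a bug report about stray whitespace.

```python
import unittest


def slugify(title):
    """Hypothetical function under test: lower-case words joined by hyphens."""
    return "-".join(title.lower().split())


class SlugifyTest(unittest.TestCase):
    # The specification, written as executable tests.
    def test_spaces_become_hyphens(self):
        self.assertEqual(slugify("Literate Programming"), "literate-programming")

    # A regression test of the kind added when a correctness bug is found,
    # so that the problem stays fixed.
    def test_extra_whitespace_is_collapsed(self):
        self.assertEqual(slugify("  Literate   Programming "), "literate-programming")


suite = unittest.defaultTestLoader.loadTestsFromTestCase(SlugifyTest)
result = unittest.TextTestRunner(verbosity=0).run(suite)
```

The tests play something like the role of the natural-language steps in the question: they state intent before the implementation exists, but in a form the machine can check.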

Matt McIrvin said,

February 26, 2014 @ 11:18 pm

I think Bjarne Stroustrup also came down on the side of writing as few comments as possible, and instead making code "self-documenting" through useful identifiers, transparent code structure, etc. His main concern, as several people have stated above, was comment drift, in which code gets modified in various ways and the comments are not.

Self-documenting code is a fine idea in theory. In practice, I find that the problem with it is that everyone already thinks their own source code is self-documenting. They understood it when they wrote it, after all. Comment drift happens, but I suspect that at least some of the fretting about it is really cover for programmers just not wanting to write documentation.

I try to write comments or external documentation about anything that I had to spend a significant amount of time thinking about, or that I had to learn in order to write the code. Obviously, ending a line with a direct English transcription of the code in that line is not helpful, except possibly as a guide for people who don't know the programming language at all.

Matt Pharr said,

February 27, 2014 @ 2:15 pm

Hi, I'm one of the authors of the aforementioned book. This has been an interesting discussion; there are a few things I thought I might offer my opinion on…

First, I (not surprisingly) disagree with @bks:

Computer programs are not written in a literate manner because the immediate goal is to make the compiler understand the program, not a human reader, and because the frequent cutting and pasting of computer program text would lead the final product to read like a William S. Burroughs novel.

He (and others commenting here) might find it interesting to read a chapter or two from the book; two are available for free download here: Chapter 4, 2nd ed, Chapter 7, 1st ed

I do believe that the end result is more readable than most of the work of Burroughs.

(Regarding John Roth's university professor comment: I've been a professional software developer ever since leaving grad school.)

To the folks who have suggested that literate programming isn't very useful to readers, I might appeal to authority and point to the fact that they gave us this award: this is indeed the first book that has received an Academy Award, and the award was entirely due to the impact that the book has had in the movie production industry. (In the end, that is what they give these awards for.) While it's conceivable that we could have written some other textbook that wasn't a literate program that might have had this impact, my personal belief is that this never would have happened if we hadn't adopted literate programming.

Our goal with the book was to thoroughly explain the concepts behind physically based rendering to readers. There is a fairly broad set of mathematical concepts underlying the topic (a lot of geometric computation, linear algebra, probability and statistics, Monte Carlo integration, sampling theory and reconstruction (Fourier analysis), …) as well as a lot of physics (how to measure and quantify light, modeling the scattering of light from surfaces, …).

(With that in mind, I also disagree with John Roth's suggestion that the combination of good variable names and professional development environments ends up providing functionality equivalent to a literate program.)

Given all that there is to cover, there's a big learning curve for people who want to do work in rendering. I think our approach of teaching people the ideas, the math and the physics, all together with a working software system that implements these computations, has worked out to be a fairly effective way for people to learn about this area. The source code / software system part of it helped force us, as authors, to put our money where our mouths were: if we say that X algorithm works to compute Y operation, the fact that readers can run our code to confirm that for themselves is fairly useful, I think.
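As a toy illustration of that "run it and check" idea (this is not code from the book, just a minimal sketch in Python with made-up names), consider the claim that Monte Carlo integration estimates an integral by averaging the integrand at uniformly random sample points. A reader can check the claim against a case with a known answer, here the integral of x² over [0, 1], which is exactly 1/3:

```python
import random

def mc_integrate(f, a, b, n, seed=0):
    """Estimate the integral of f over [a, b] from n uniform random samples.

    The estimator is (b - a) times the average of f at the sample points.
    The seed is fixed only so that repeated runs give the same result.
    """
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n):
        total += f(rng.uniform(a, b))
    return (b - a) * total / n

# Claim under test: the estimate should be close to the exact value 1/3.
estimate = mc_integrate(lambda x: x * x, 0.0, 1.0, 100_000)
exact = 1.0 / 3.0
print(f"estimate={estimate:.4f}  exact={exact:.4f}  "
      f"error={abs(estimate - exact):.4f}")
```

With 100,000 samples the statistical error should be on the order of a thousandth, so the printed estimate and exact value should agree to roughly two or three decimal places.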

I don't believe that literate programming is necessarily appropriate for all software; as many folks have pointed out, a lot of software isn't so complex that it's necessary, and the literate programming approach definitely introduces an additional burden. I'd estimate that the total work involved is basically the sum of the work to write a software system plus the work to write a book. Since writing a book turns out to be a big undertaking, this is a pretty substantial effort. Many programmers certainly aren't good writers or don't enjoy writing.

Finally, I'd second Jeroen Mostert's point:

In this sense, to paraphrase Chesterton, literate programming hasn't been tried and found wanting, rather it hasn't been tried (Knuth's initial efforts notwithstanding). Educating people (including, potentially, yourself) to understand what you're doing is, unsurprisingly, harder than just doing it.

I do wish there was more literate programming in the world, though I'm not sure I'm optimistic enough to hope that the current state of affairs will change in the future…

Mike Maxwell said,

March 1, 2014 @ 11:51 am

@Matt: Beautiful book!

You remark in the preface that you are aware of only two other literate programs published as books (besides Knuth's). We're trying: we have electronic forms of a couple of book-length grammars that use literate programming to describe the morphology and phonology of some languages, with the express purpose (going back to Mark's title for this blog posting) of making the grammars reproducible research. But in the printed version of the Pashto grammar (just out from Mouton), we omitted the literate programming rules, in part because ours are expressed in XML, which would just constitute uglification. Beautifying them is in our plans.